Translation from Spanish to English with Voice

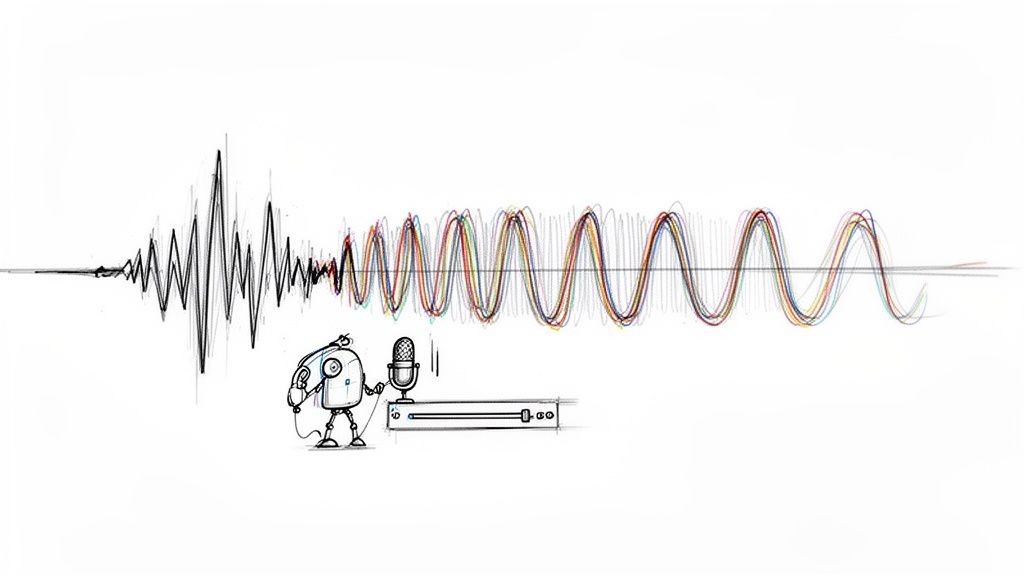

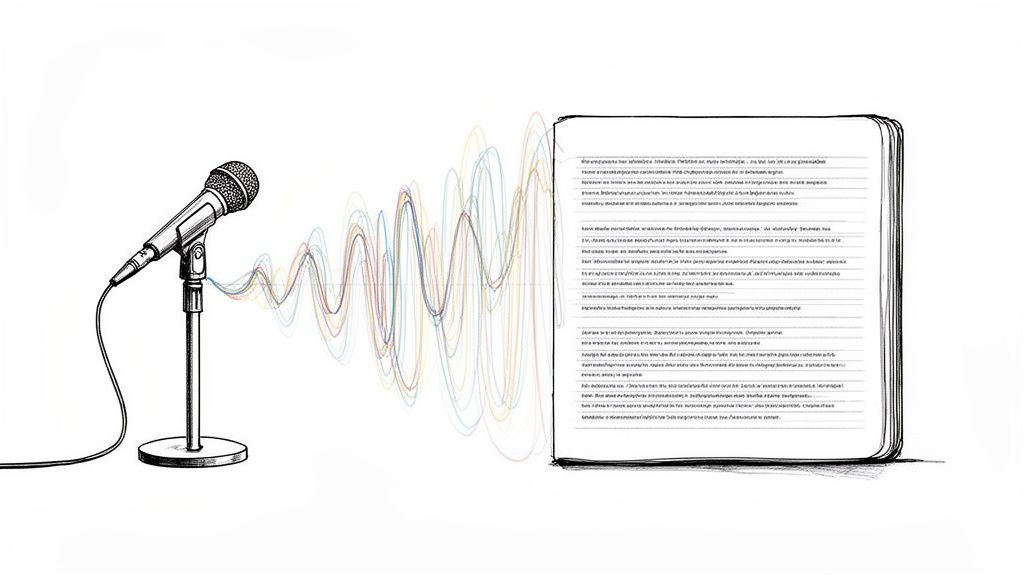

You’re probably here because you have Spanish audio and need English output that people can use. Maybe it’s a client interview, a Zoom recording, a WhatsApp voice note, a webinar, or a product demo from a teammate in Mexico, Spain, or Argentina. The hard part isn’t just converting sound into words. It’s preserving meaning, handling accent differences, and ending up with English audio that doesn’t sound stiff or obviously machine-made.

That’s where translation from Spanish to English with voice gets practical instead of theoretical. A good workflow helps you avoid the two failures I see most often: trusting a rough auto-translation too early, and skipping the transcript review step that would have caught the biggest mistakes. If the Spanish source is messy, the English voice output will magnify every error.

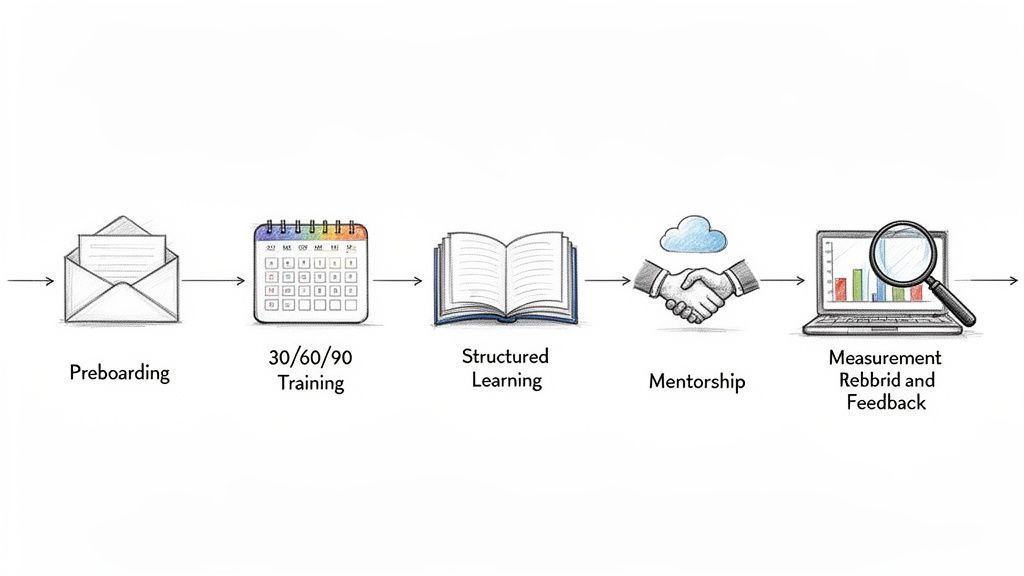

From Spanish Speech to English Voice: A Step-by-Step Process

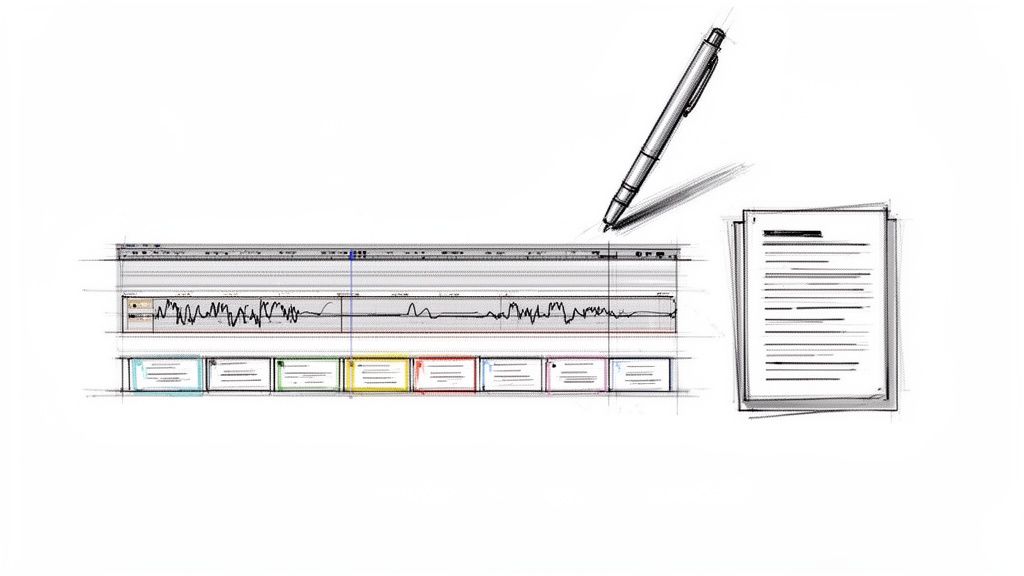

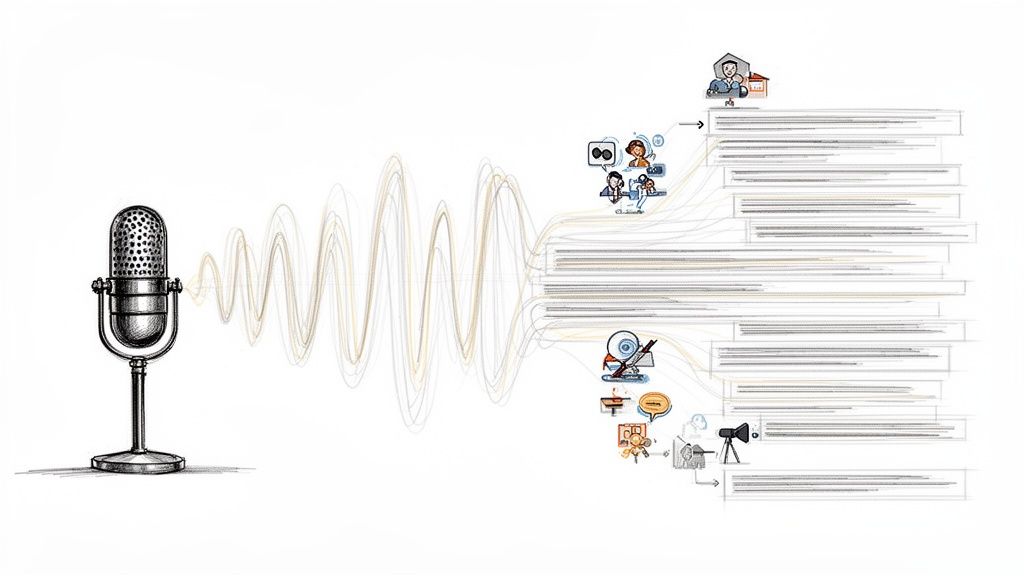

The cleanest workflow has five stages. Capture the Spanish audio, transcribe it, review the transcript, translate the text, and generate the English voice. People often try to jump from spoken Spanish straight to spoken English in one click. That can work for casual use, but for anything client-facing, I treat the transcript as the quality checkpoint.
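As a rough illustration, the five stages can be sketched as a small Python pipeline in which the review step sits between transcription and translation. Everything here is hypothetical: `Job`, `run_pipeline`, and the four stage functions are placeholder names, and each stage would be backed by whatever tool you use.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Job:
    """Holds the artifact produced at each stage (all names are illustrative)."""
    audio_path: str                      # stage 1: captured Spanish audio
    spanish_text: str = ""
    transcript_reviewed: bool = False
    english_text: str = ""
    english_audio_path: str = ""
    log: List[str] = field(default_factory=list)


def run_pipeline(
    job: Job,
    transcribe: Callable[[str], str],
    review: Callable[[str], str],
    translate: Callable[[str], str],
    synthesize: Callable[[str], str],
) -> Job:
    # Stage 2: speech-to-text on the Spanish audio.
    job.spanish_text = transcribe(job.audio_path)
    job.log.append("transcribed")

    # Stage 3: the quality checkpoint. Translation only ever sees reviewed text.
    job.spanish_text = review(job.spanish_text)
    job.transcript_reviewed = True
    job.log.append("reviewed")

    # Stage 4: translate the reviewed transcript.
    job.english_text = translate(job.spanish_text)
    job.log.append("translated")

    # Stage 5: generate the English voice from the translated text.
    job.english_audio_path = synthesize(job.english_text)
    job.log.append("synthesized")
    return job


# Stub stages stand in for real tools so the sketch runs on its own.
job = run_pipeline(
    Job(audio_path="call.mp3"),
    transcribe=lambda path: "hola equipo",
    review=lambda text: text.capitalize(),
    translate=lambda text: "Hello team",
    synthesize=lambda text: "call_en.mp3",
)
print(job.log)
```

The point of the structure is that every stage leaves an editable artifact on the `Job`, so a reviewer can step in between any two stages.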

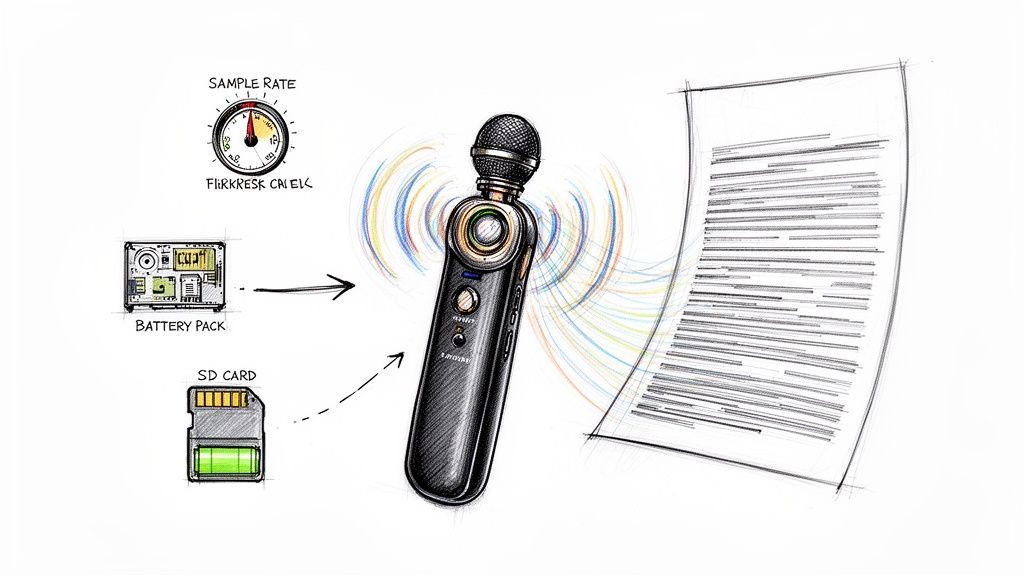

Start with the cleanest source audio you can get

If you’re recording live speech, use a decent mic and reduce room echo. If you’re uploading an existing file, listen to the first minute before doing anything else. You’re checking for cross-talk, clipped words, loud background music, and whether the speaker switches between Spanish and English mid-sentence.

A simple rule helps here.

Practical rule: Bad audio doesn’t become good translation. It becomes confident-looking wrong output.

For a platform workflow, I usually upload the original file first rather than trimming it outside the tool unless there’s obvious dead air at the beginning or end. Keeping the source intact makes it easier to compare the transcript to the original timing later.

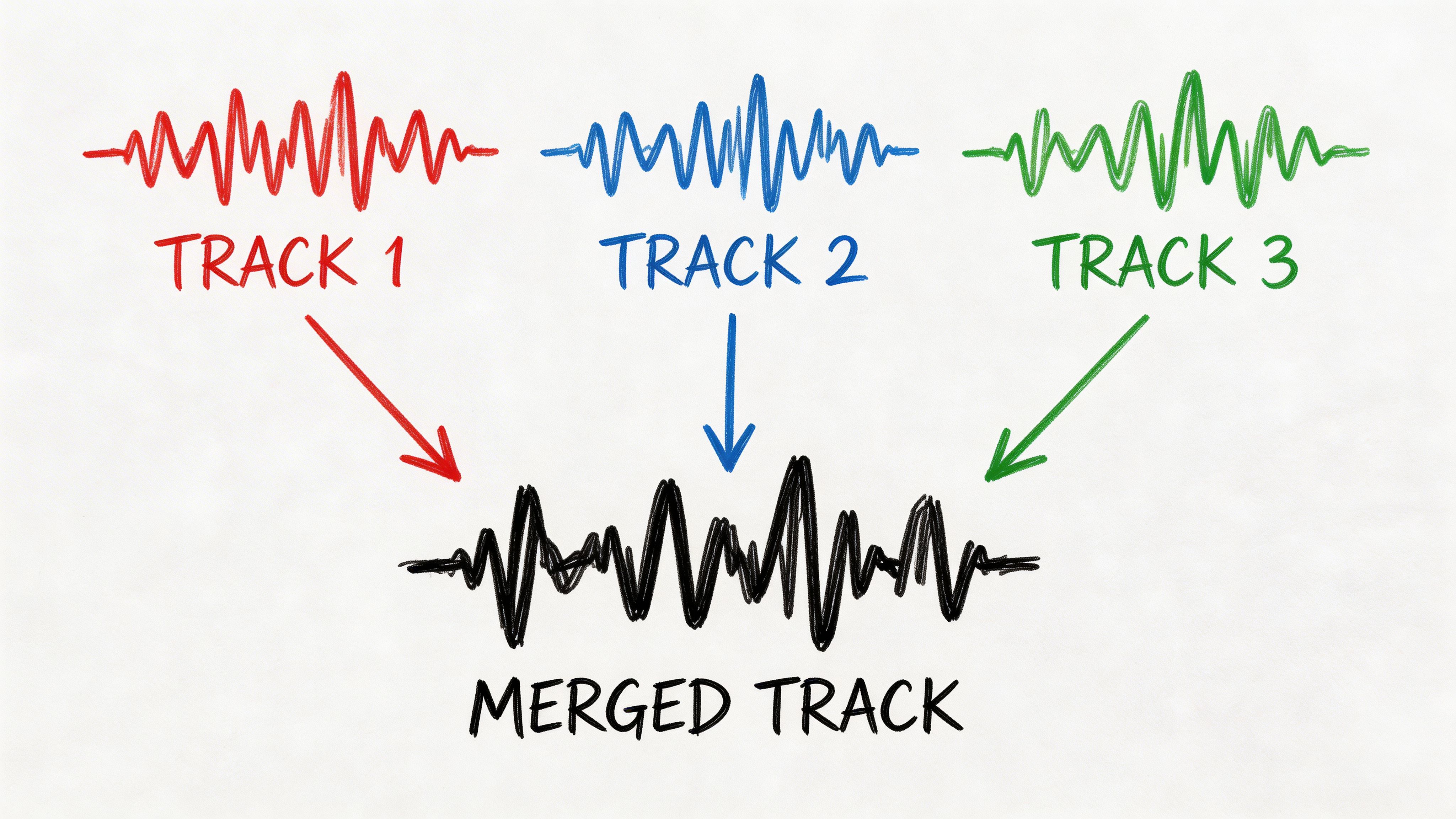

Generate the Spanish transcript first

This is the stage where accuracy matters most. The system first runs speech-to-text on the Spanish audio, turning spoken language into editable text. That transcript becomes the foundation for everything after it.

If the audio includes slang, names, acronyms, or region-specific vocabulary, inspect those manually. For example, a sales call might include product names, internal team shorthand, or place names that generic transcription models often mangle. Fixing them at the Spanish transcript stage is faster than trying to repair them after translation and voice synthesis.

I use this checklist before moving on:

- Speaker identity: Separate speakers if the conversation includes interruptions or overlapping turns.

- Named terms: Correct company names, people’s names, tools, and brand terms.

- Filler cleanup: Remove repeated false starts only if the final English audio needs to sound polished.

- Meaning check: Flag any sentence that reads oddly even in Spanish. Translation won’t rescue it.
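For the named-terms item, a correction list can be applied to an exported transcript before translation. The sketch below assumes you have the Spanish transcript as plain text. The function name `apply_corrections` and the sample terms are made up for illustration.

```python
import re
from typing import Dict


def apply_corrections(transcript: str, corrections: Dict[str, str]) -> str:
    """Replace known mis-transcriptions with their reviewed spelling."""
    for wrong, right in corrections.items():
        # \b limits the match to whole words; IGNORECASE catches casing variants.
        pattern = re.compile(r"\b" + re.escape(wrong) + r"\b", re.IGNORECASE)
        # A function replacement avoids treating backslashes in `right` as escapes.
        transcript = pattern.sub(lambda _match, fixed=right: fixed, transcript)
    return transcript


# Hypothetical mis-hearings of a product name and a client name.
corrections = {
    "nova desk": "NovaDesk",
    "acme corp": "Acme Corp",
}
raw = "Subimos el audio a nova desk y avisamos a acme corp."
fixed = apply_corrections(raw, corrections)
print(fixed)
```

Keeping the correction list in one place also gives you something to reuse on the next recording from the same team or client.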

Translate for meaning, not for word order

Once the Spanish transcript is solid, translate the text into English. This is where many tools that look good on simple sentences start to struggle, because speech was never meant to be read. Spoken Spanish often includes restarts, implied subjects, regional phrasing, and compressed ideas that need to be rewritten slightly in English to sound natural.

A practical example helps. If a speaker says something informal and fragmented in Spanish, the strongest result usually isn’t a literal line-by-line conversion. It’s an English version that preserves intent, tone, and action.

Here’s the working sequence I recommend:

| Stage | What you do | Why it matters |
|---|---|---|
| Audio intake | Upload or record Spanish speech | Preserves original context and timing |
| Transcript pass | Create and review Spanish text | Catches recognition errors early |
| Translation pass | Convert Spanish text into English | Protects meaning before voice generation |
| Voice synthesis | Turn English text into speech | Produces usable final audio |
| Final QA | Compare output with original intent | Prevents polished but inaccurate delivery |

Turn the English text into natural voice output

Now you can generate the English voiceover. This is the stage people notice first, but it’s the stage that depends most on all the previous work. If the translated text is awkward, even a very natural voice will sound wrong.

Choose a voice that fits the use case. For internal training, neutral and clear usually beats dramatic. For marketing clips, pacing and warmth matter more. For interviews or documentary-style content, slightly slower delivery often improves comprehension.

If the English script looks like written translation instead of spoken English, regenerate the script before you regenerate the voice.

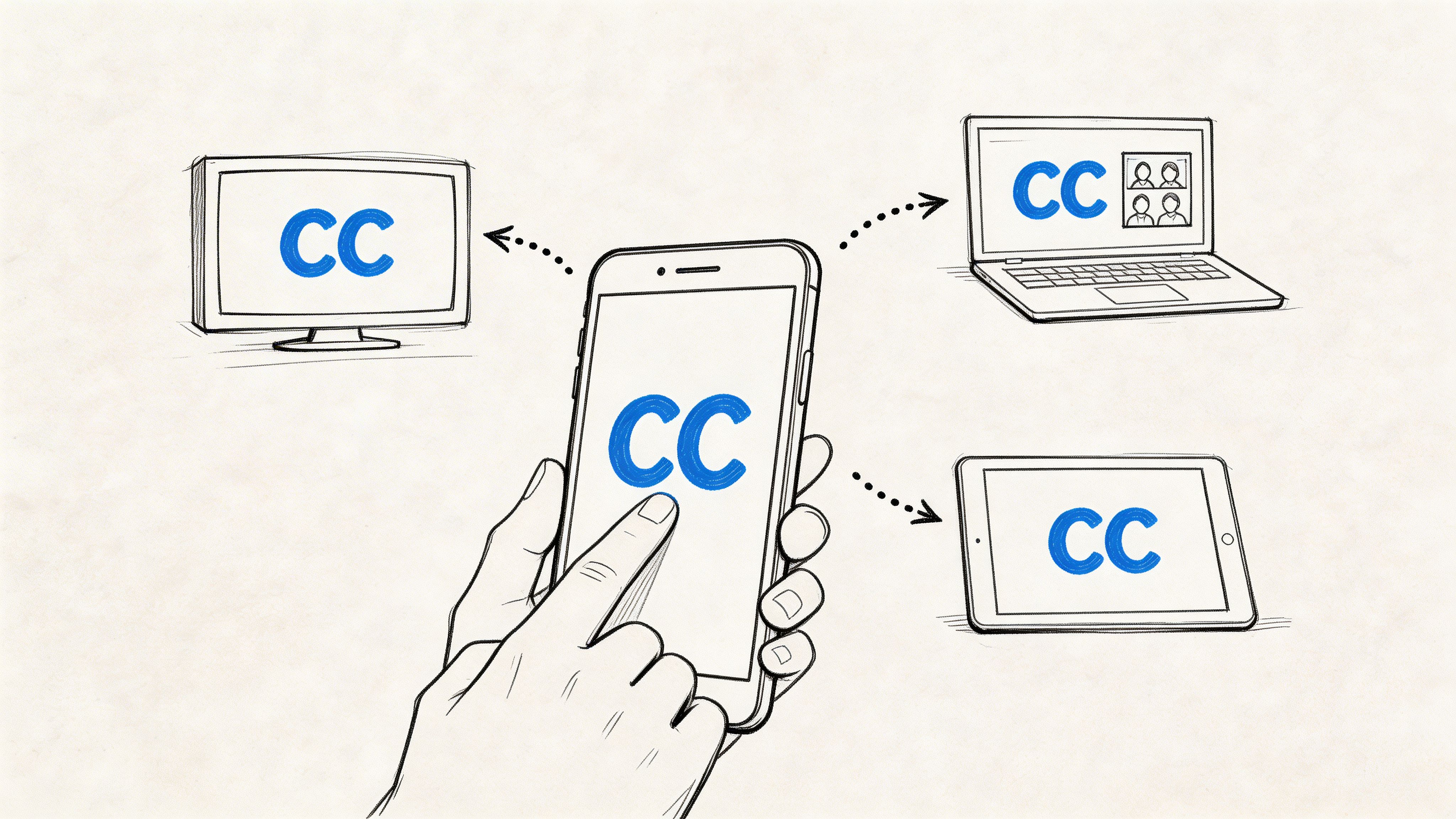

With an integrated workflow such as HypeScribe, the practical value is that you can handle upload, transcription, text cleanup, and export in one place instead of bouncing between disconnected apps. The key isn’t the interface. It’s that every stage leaves you with something editable.

Do a final listen before delivery

Never export the English audio without listening through the beginning, middle, and end. I’m checking for three things:

- Pronunciation errors: Names and borrowed Spanish words often need manual correction.

- Timing drift: Some voice outputs run too fast when the translated English is denser than the original.

- Tone mismatch: A serious interview shouldn’t sound like an upbeat ad read.
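Timing drift is the one check in this list that can be roughly pre-screened before listening. The sketch below estimates how long each English line would take to speak and flags segments that need noticeably more time than the original Spanish took. The 150 words-per-minute rate and the 1.15 tolerance are assumptions, not measured values, so tune both to the voice you use.

```python
from typing import List, Tuple


def estimated_seconds(text: str, words_per_minute: float = 150.0) -> float:
    """Rough speaking time for a line of text at an assumed speaking rate."""
    return len(text.split()) / words_per_minute * 60.0


def flag_timing_drift(
    segments: List[Tuple[float, str]],
    max_ratio: float = 1.15,
    words_per_minute: float = 150.0,
) -> List[int]:
    """Return indexes of segments whose English text likely overruns its slot.

    Each segment is (source_duration_seconds, english_text).
    """
    flagged = []
    for index, (source_seconds, english_text) in enumerate(segments):
        needed = estimated_seconds(english_text, words_per_minute)
        if needed > source_seconds * max_ratio:
            flagged.append(index)
    return flagged


segments = [
    (4.0, "We will send the invoice on Friday."),
    (2.0, "We need to confirm the delivery date with the client "
          "before the end of this week."),
]
print(flag_timing_drift(segments))  # → [1]
```

A flagged segment is a prompt to shorten the English line or slow the pacing. It does not replace listening to the result.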

For client work, I also keep the reviewed Spanish transcript and the English text alongside the final audio. When someone asks why a phrase was translated a certain way, that review trail saves time.

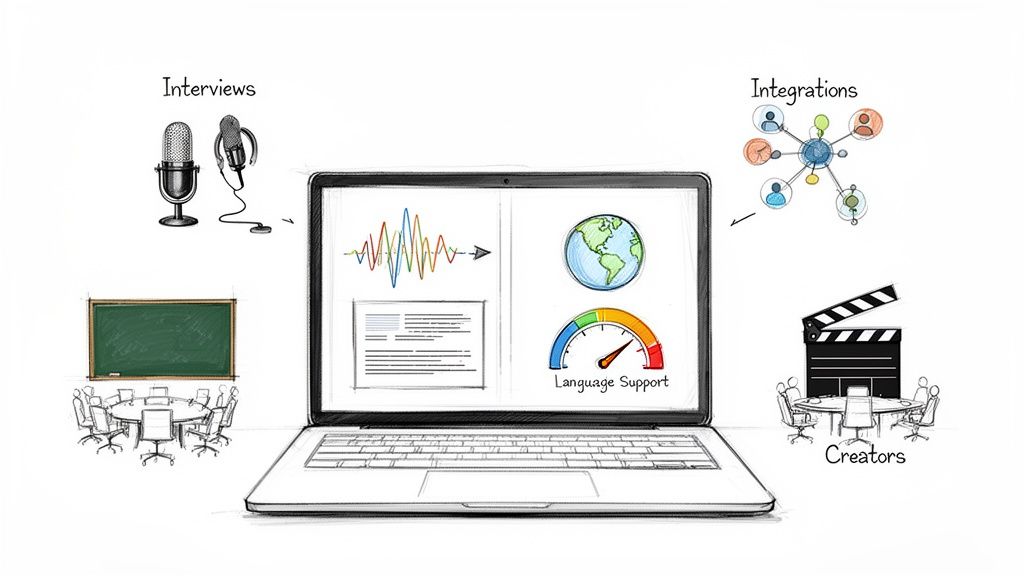

Choosing Your Spanish Voice Translation Toolkit

A bad tool choice usually shows up after the work starts. The transcript looks fine until it hits a Puerto Rican speaker, the live app keeps flattening idioms into generic English, or the dubbing platform gives you polished audio built from a shaky transcript. Tool selection matters because Spanish voice translation is really a chain of tasks, and different products are strong at different links in that chain.

For quick everyday translation

For short spoken phrases, travel use, or rough first-pass understanding, Google Translate is still the fast option. Google Translate's product page describes support across many languages and voice input, which is enough for basic comprehension and quick checks.

I use tools in this category to answer one question fast: "What did they probably say?" I do not use them to produce client-facing English audio.

For real-time conversation apps

Live conversation tools matter when there is no time to clean a transcript first. The best fit is usually a speech-first app built for turn-taking, not a file-based editor with a microphone button added later.

For meetings, interviews, front-desk interactions, and support calls, compare three things before anything else:

- Latency: Can both speakers keep a natural rhythm, or does the app force awkward pauses?

- Transcript visibility: Can you see what the system heard before you trust the translation?

- Accent behavior: Does it hold up with fast Latin American speech, dropped consonants, and local vocabulary?

Teams that handle recurring Spanish communication sometimes pair software with human review. For customer support queues, intake calls, or admin work, Spanish-speaking Virtual Assistants can catch the context errors automation misses, especially when the speaker is using regional shorthand.

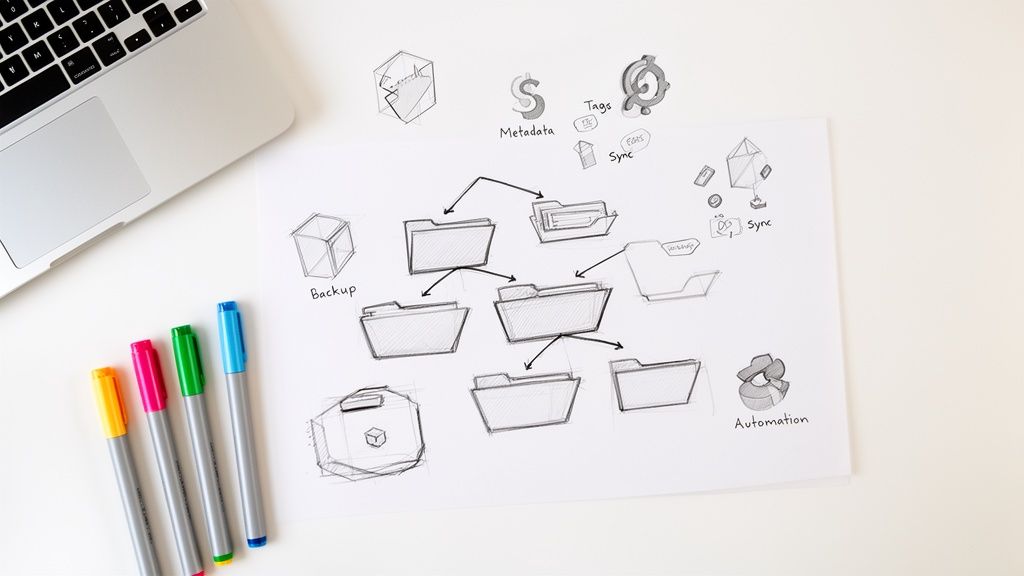

For professional translation and multilingual production

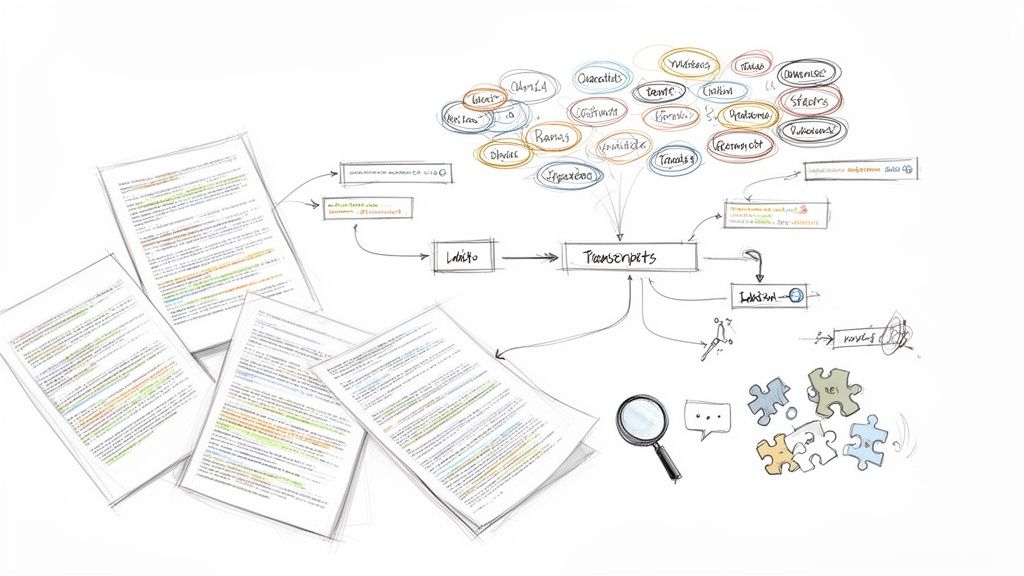

Long-form content needs a different stack. Here, I separate tools into three buckets: transcript-first editors, dubbing platforms, and translation management systems.

Trados fits the translation management side. RWS lists Trados products and licensing options on its official pricing and plans pages, which is the right place to check current costs and team features instead of relying on roundup posts. It makes sense for structured translation work, terminology control, and multi-review workflows. It is usually more system than a solo creator needs for one-off audio jobs.

Maestra is better known for dubbing, subtitles, and video localization. Maestra's features pages outline its language support and media workflow. That type of platform is useful when your final deliverable is a voiced English version, not just translated text.

Sonix is strong on transcription and subtitle editing. Sonix's site is the place to verify current language coverage, export formats, and collaboration options. In practice, Sonix is useful when editorial control matters more than flashy voice output.

One practical middle ground is HypeScribe, which handles uploaded audio and video, transcript creation, and export for later editing. That setup is useful if your work includes interviews, internal recordings, repurposed content, and multilingual publishing in the same pipeline. The same transcript-first logic also applies in other language pairs, including this guide to English to German translation with audio workflows.

What I compare before I choose

I start with failure points, not brand names.

- Source format: Live speech, uploaded audio, screen recording, or finished video.

- Transcript control: Can I correct the Spanish before the English voice is generated?

- Dialect tolerance: Does the system give me confidence with Mexican, Caribbean, Rioplatense, or Peninsular speech?

- Voice output quality: Can I adjust pacing, pronunciation, and speaker style?

- Exports: Do I need captions, dubbed audio, plain text, timecodes, or all of them?

- Review workflow: Can an editor or bilingual reviewer step in without rebuilding the project?

If the project involves dialect-heavy Spanish, I choose the tool that lets me inspect and fix the source transcript early. That one decision prevents more English voice errors than any text-to-speech setting I can change later.

Pro Tips for Crystal-Clear Translation Accuracy

A clean recording can still produce a weak English voice track if the speaker uses regional Spanish. I see this constantly with interviews, team updates, and customer calls. The audio is usable, but the system misreads Mexican slang, Caribbean rhythm, Rioplatense pronunciation, or compressed speech from southern Spain.

Dialect handling decides whether the output sounds right

Many tools perform well on neutral, slow Spanish and lose accuracy once the speaker shifts into local phrasing. Reviews discussed in VEED's overview of Spanish-to-English audio translation repeatedly point to trouble with accent-heavy and idiomatic speech. That matches real production work. Standard models often miss intent before they miss words.

The mistake usually starts upstream. If the transcript mishears "ahorita," drops a subject, or translates a regional phrase word-for-word, the English voice will sound polished but wrong.

Edit the Spanish transcript before generating English audio

This is the habit that improves results fastest.

I correct the Spanish transcript first, then translate, then review the English script before text-to-speech. If your platform supports transcript revision, use it. If not, export the text, fix it manually, and bring the corrected version back into your workflow. Teams that need tighter review control often pair this step with real-time transcription software for live and recorded speech so they can catch errors before the English voice is generated.

Use a short review pass like this:

- Standardize local phrasing: Replace highly regional expressions with clearer Spanish if the meaning is certain.

- Clarify references: Fix pronouns or vague nouns that could point to multiple people or actions.

- Restore missing connectors: Fast speech drops words that English syntax usually needs.

- Flag uncertain segments: Recheck the audio instead of forcing a translation guess.
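If your transcription tool exports a per-segment confidence score, the last item can be partly automated. This is a minimal sketch under that assumption: the `Segment` fields and the 0.80 threshold are hypothetical, and real tools name and scale these values differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    start: float        # seconds into the recording
    end: float
    text: str
    confidence: float   # assumed to be 0.0-1.0; field name varies by tool


def needs_relisten(segments: List[Segment], threshold: float = 0.80) -> List[Segment]:
    """Return the segments a reviewer should recheck against the audio."""
    return [segment for segment in segments if segment.confidence < threshold]


transcript = [
    Segment(0.0, 3.2, "Buenos días a todos.", 0.95),
    Segment(3.2, 6.8, "Ahorita lo mandamos con el proveedor.", 0.62),
]
for segment in needs_relisten(transcript):
    print(f"{segment.start:.1f}-{segment.end:.1f}s: {segment.text}")
```

The timestamps matter as much as the flag, since they let the reviewer jump straight to the audio instead of guessing from text.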

A natural English voice does not fix a weak source transcript.

Add context that narrows the meaning

Spanish-to-English voice translation improves when the system knows the setting. A procurement call, onboarding session, medical explainer, and classroom lecture all use different terms and different levels of formality. Speaker labels help too, especially when two people use overlapping short phrases such as "claro," "dale," or "ya."

This matters for distributed teams working with coordinators, support staff, or Spanish-speaking Virtual Assistants across multiple countries. The same word can shift meaning by role, region, and task. "Aplicar" might mean apply, submit, or put on. "Factura" may point to an invoice, a bill, or a tax document depending on the workflow.
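One way to make that context explicit is a small glossary keyed by term and setting, which a reviewer consults before the translation pass. The context labels and the mapping choices below are illustrative assumptions. Your team's actual usage decides which English term belongs in each slot.

```python
from typing import Dict, Optional, Tuple

# (Spanish term, meeting context) -> preferred English rendering.
# Both the context names and the pairings are examples, not fixed rules.
GLOSSARY: Dict[Tuple[str, str], str] = {
    ("aplicar", "recruiting"): "apply",
    ("aplicar", "forms"): "submit",
    ("aplicar", "product_demo"): "put on",
    ("factura", "billing"): "invoice",
    ("factura", "utilities"): "bill",
    ("factura", "tax"): "tax document",
}


def preferred_term(term: str, context: str) -> Optional[str]:
    """Look up the agreed rendering; None means a human should decide."""
    return GLOSSARY.get((term.lower(), context))


print(preferred_term("Factura", "billing"))
print(preferred_term("aplicar", "legal"))
```

Returning `None` for an unknown pairing is deliberate. It surfaces the gap for review rather than guessing a translation.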

Recurring failure patterns to catch early

Some errors show up often enough that I check for them on every project:

| Failure pattern | What it sounds like | Better move |
|---|---|---|
| Regional slang | English turns literal and loses intent | Rewrite the Spanish line in standard Spanish first |
| Overlapping speakers | One sentence absorbs another speaker's meaning | Separate speakers before translation |
| Brand names and proper nouns | TTS mispronounces or transcript mangles them | Add a manual glossary or correction list |
| Register mismatch | English sounds too formal or too casual for the audience | Edit the English script before voice generation |

The practical rule is simple. Treat dialect review as part of quality control from the first transcript pass. That is what keeps Spanish voice translation accurate at the sentence level, not just readable in broad strokes.

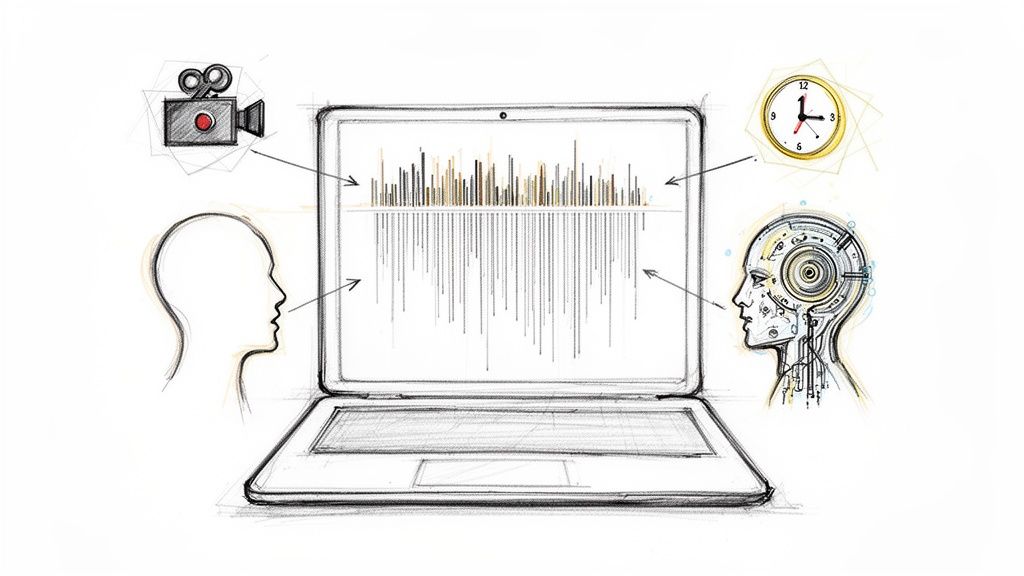

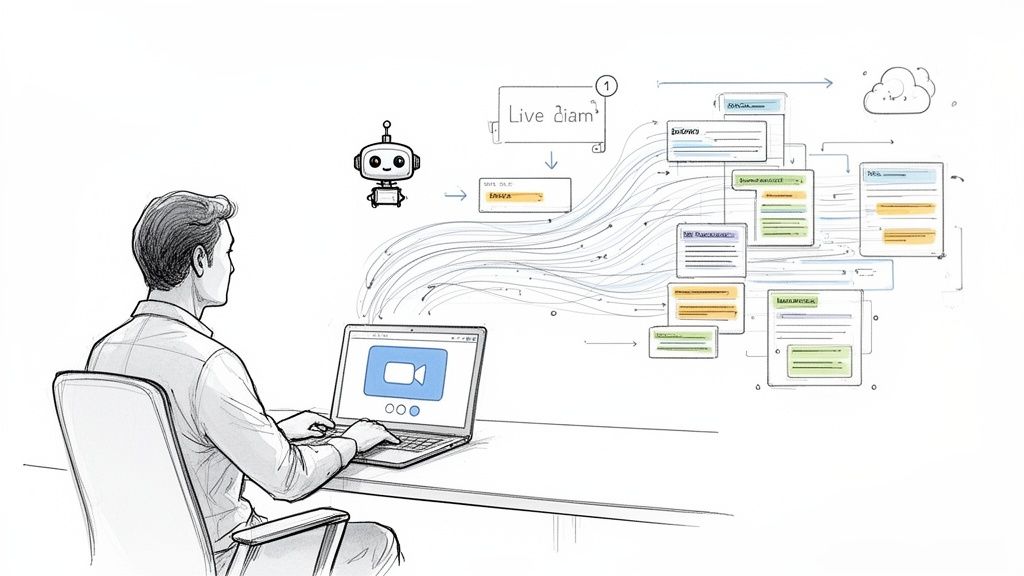

Using Voice Translation in Real-Time Meetings

A Monday operations call starts, and the problem shows up in the first two minutes. The account manager from Mexico City says something in fast, regional Spanish, the product lead in Chicago catches half of it, and the deadline changes before everyone is aligned. In live meetings, voice translation is not a nice extra. It is what keeps decisions from drifting.

The workable setup is straightforward. A meeting assistant joins the call, captures the Spanish audio, creates a live transcript, and sends English output fast enough for participants to respond in the same discussion, not ten minutes later. That speed matters, but so does dialect handling. A tool can be fast and still fail if it treats Colombian, Mexican, Rioplatense, and Caribbean Spanish as interchangeable.

What makes live translation usable in meetings

Meeting translation succeeds when delay stays low and meaning stays stable across accents, speaking speeds, and regional wording. If captions lag too far behind, people stop waiting for them. If the wording sounds polished but misses the intent, the team makes the wrong call with confidence, which is worse than asking for clarification.

Transync AI says current tools have reached strong real-time performance for Spanish-to-English meeting use, including high reported accuracy and very fast processing in supported setups, according to Transync AI's 2026 overview of Spanish-to-English AI translation tools. I still treat those numbers as a starting point, not a guarantee. Actual results change fast when a speaker switches dialect mid-call, uses local shorthand, or talks over someone else.

A meeting setup that holds up under pressure

The teams that get reliable results usually follow a few habits before the call starts:

- Set one speaking rule: pause before jumping in on decisions, handoffs, and budget points

- Tell the tool what language mix to expect: Spanish only, mostly Spanish, or bilingual switching

- Add names, products, and client terms in advance: this reduces transcript drift on proper nouns

- Assign one person to catch meaning errors live: not grammar edits, meaning errors

- Confirm decisions out loud before ending the topic: this gives the translator a cleaner final pass on the line that matters most

I also recommend a short audio check before high-stakes meetings. Bad microphones create more errors than people expect, and the system cannot recover context it never heard clearly.

If your team already records meetings, it is usually easier to run translation alongside a live transcription layer than to patch together separate apps during the call. For teams comparing options, this guide to real-time transcription software for meetings gives a useful starting point.

In live meetings, the job is accurate enough understanding at the moment a decision is made.

Where real-time translation earns its keep

I use it most often in standups, onboarding sessions, client check-ins, support review calls, and cross-border project updates. Those are the meetings where timing matters more than polished phrasing.

The common failure point is not basic vocabulary. It is intent. A phrase that sounds harmless in one country can signal urgency, disagreement, or approval in another. Good real-time translation helps teams act on what was meant, not just what was said word for word.

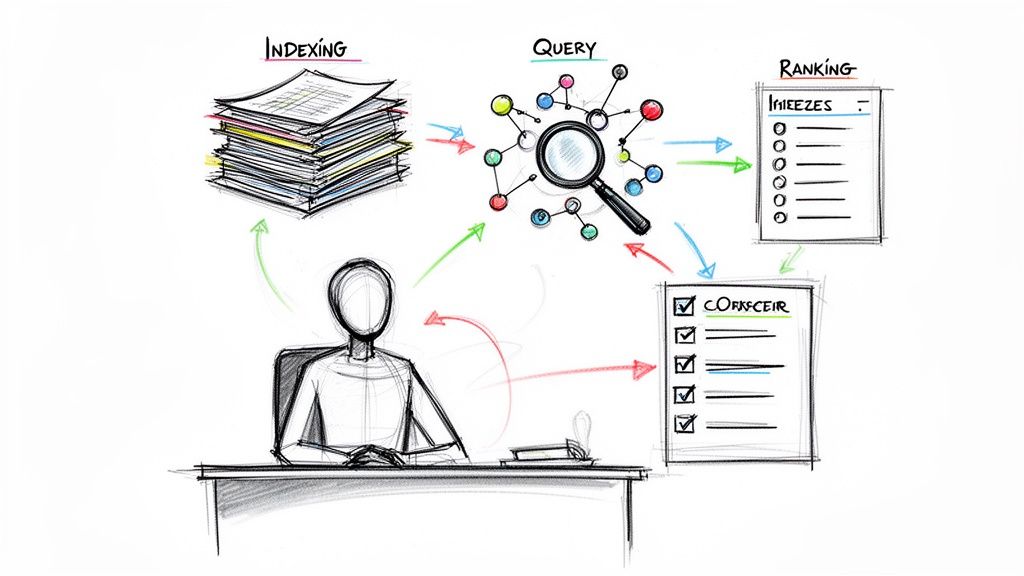

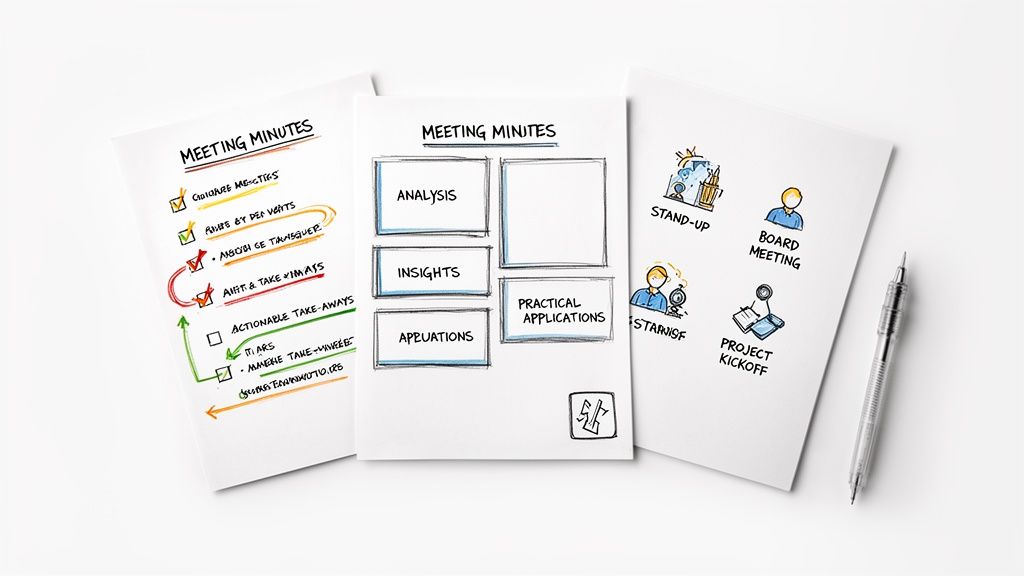

Beyond Translation: Exporting and Using Your Content

Once the English audio is ready, the job usually shifts from language conversion to content handling. Teams need files they can search, share, summarize, and reuse. If you stop at the translated voice file, you leave a lot of value on the table.

Export the right version for the actual audience

Different stakeholders want different outputs. A manager may want a readable summary, an editor may want the transcript, and a client may only want the final audio. That’s why I keep the translated text and reviewed transcript organized alongside the voice export.

Useful export paths usually include:

- Readable documents: Word, PDF, or TXT for review and circulation

- Collaborative editing: Google Docs when multiple people need to revise language

- Production handoff: Markdown or clean text for CMS, subtitles, or script reuse

Turn translated material into something actionable

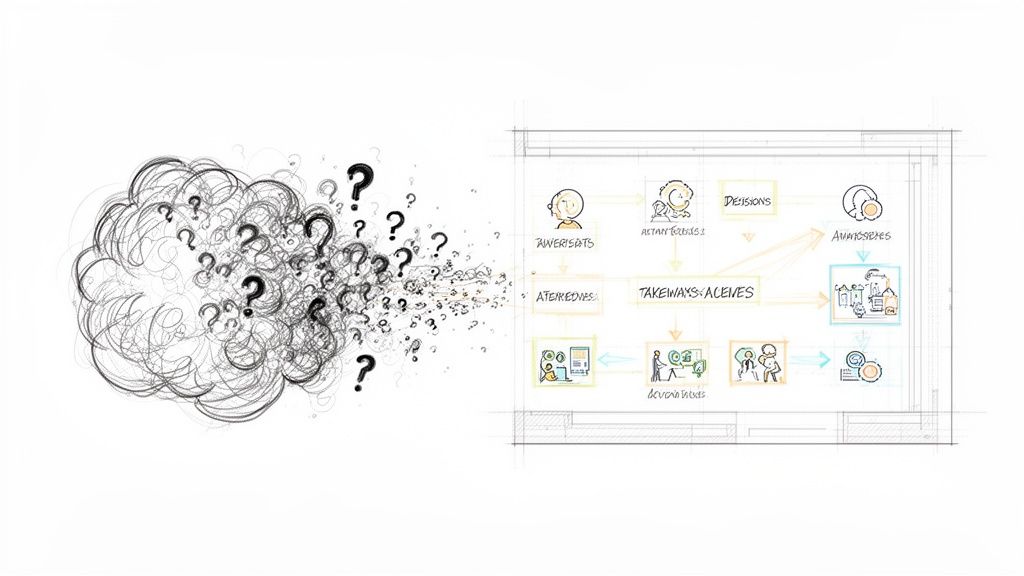

This is where AI tools save the most time. Instead of handing over a raw transcript, generate a concise summary, a list of key takeaways, and explicit action items from the translated text. For meeting recordings, that turns language output into operational output.

A good post-translation package often includes:

- Short summary: What was discussed, in plain English

- Key decisions: What changed during the conversation

- Action items: Who owns what next

- Reference transcript: For audit, review, or legal context when needed
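The package above maps neatly onto a small data structure that renders to Markdown for handoff. This is only a sketch: `MeetingPackage`, `to_markdown`, and the sample content are invented for illustration, and the summary and action items would come from your own review or summarization step.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MeetingPackage:
    summary: str
    decisions: List[str]
    action_items: List[Tuple[str, str]]  # (owner, task)
    transcript_path: str                 # where the reviewed transcript lives


def to_markdown(package: MeetingPackage) -> str:
    """Render the package as Markdown text for a doc, CMS, or email."""
    lines = ["## Summary", package.summary, "", "## Key decisions"]
    lines += [f"- {decision}" for decision in package.decisions]
    lines += ["", "## Action items"]
    lines += [f"- {owner}: {task}" for owner, task in package.action_items]
    lines += ["", f"Reference transcript: {package.transcript_path}"]
    return "\n".join(lines)


package = MeetingPackage(
    summary="The client approved the revised delivery schedule.",
    decisions=["Launch moves to the second week of the month."],
    action_items=[("Ana", "Send the revised quote"), ("Luis", "Update the timeline")],
    transcript_path="transcripts/client-checkin-reviewed.txt",
)
markdown = to_markdown(package)
print(markdown)
```

Because the transcript is referenced by path rather than pasted in, the summary stays short while the audit trail stays reachable.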

Keep one reviewed source of truth

The biggest workflow mess happens when people edit the English version in one place, the transcript in another, and the audio script somewhere else. Pick one reviewed text version as the source of truth and derive exports from that.

The final audio gets attention. The reviewed transcript is what protects the work later.

That matters for teams building training libraries, interview archives, multilingual customer support records, or repurposed content from webinars and podcasts. The more often the material gets reused, the more valuable that clean text layer becomes.

FAQ on Spanish to English Voice Translation

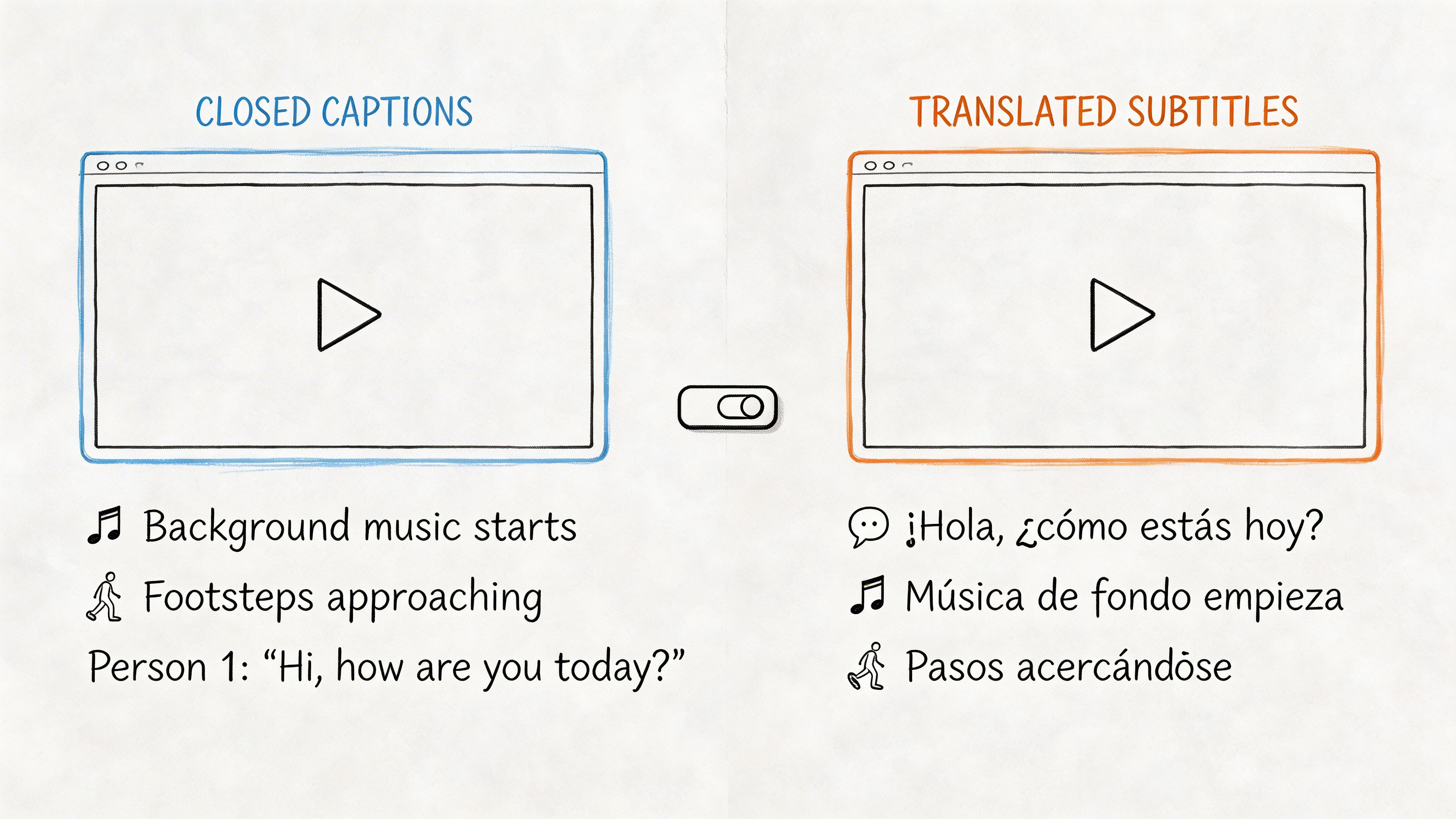

What’s the difference between transcription and translation

They’re related, but they’re not the same task. Transcription converts spoken Spanish into written Spanish. Translation converts that meaning into English. If you want voice output in English, the workflow usually starts with transcription first. For a deeper breakdown, see this guide on the difference between transcription and translation.

Can I translate a full video from Spanish to English with voice

Yes, but the workflow is heavier than translating a short audio clip. You’ll usually need accurate transcription, reviewed translation, and then English voice generation layered back onto the video. If the video includes captions, on-screen terms, or speaker changes, review takes longer because the language has to stay aligned with visuals.

Are free tools good enough

They’re often good enough for rough comprehension, quick travel use, or internal first passes. They’re usually not what I’d trust for polished training material, published video, legal context, or client-facing narration without manual review. The dividing line isn’t whether the tool is free. It’s whether you can inspect and correct the transcript before the final English voice is created.

How do I improve results when the audio has background noise

Start with source cleanup if you can. Lower music, reduce echo, and cut dead sections before upload. If the file is already fixed and you can’t re-record, review the transcript closely around transitions, names, and places where multiple people speak at once. Those are the spots where noise causes the most damaging translation mistakes.

Which matters more, the translation engine or the voice quality

For understandable output, the translation engine and transcript quality matter more. A smooth voice can make flawed translation sound convincing, which is risky. I’d rather have plain but accurate English audio than elegant delivery with the wrong meaning.

If you need a practical workflow for multilingual audio, HypeScribe is worth a look. It handles spoken content capture, transcription, searchable text, summaries, and exports in one web app, which makes it useful when translation work is part of a larger meeting, content, or documentation process.