What Is an Information Retrieval System and How Does It Work?

Imagine you have a personal librarian who has read every book, document, and website in the world. When you ask a question, they don't just point you to a shelf; they instantly hand you the exact pages that best answer your query. That, in essence, is an information retrieval (IR) system.

These systems are the unsung heroes of the digital age, working behind the scenes to make massive collections of data—from the entire web to your company's internal documents or even audio transcripts—findable and useful.

Understanding the Core of an Information Retrieval System

At its heart, an information retrieval system is all about bridging the gap between a human question and a mountain of unstructured data. You use one every time you type a query into Google, search for a product on Amazon, or look for a specific moment in a meeting recording. These systems are the engines that turn chaos into clarity.

An IR system is engineered to handle ambiguity. Unlike a rigid database where you need to know the exact "employee ID" to find a record, an IR system thrives on natural language. It’s built to understand what you mean, not just what you type.

From Simple Searches to Smart Systems

The earliest systems were a far cry from what we have today. IBM's STAIRS, which dates from the late 1960s and early 1970s, was one of the first major attempts to manage large text datasets. This foundational work paved the way for the powerful enterprise tools we rely on now, including modern apps like HypeScribe, which can generate 99% accurate and fully searchable transcripts from spoken words. The history of information retrieval shows just how far we've come.

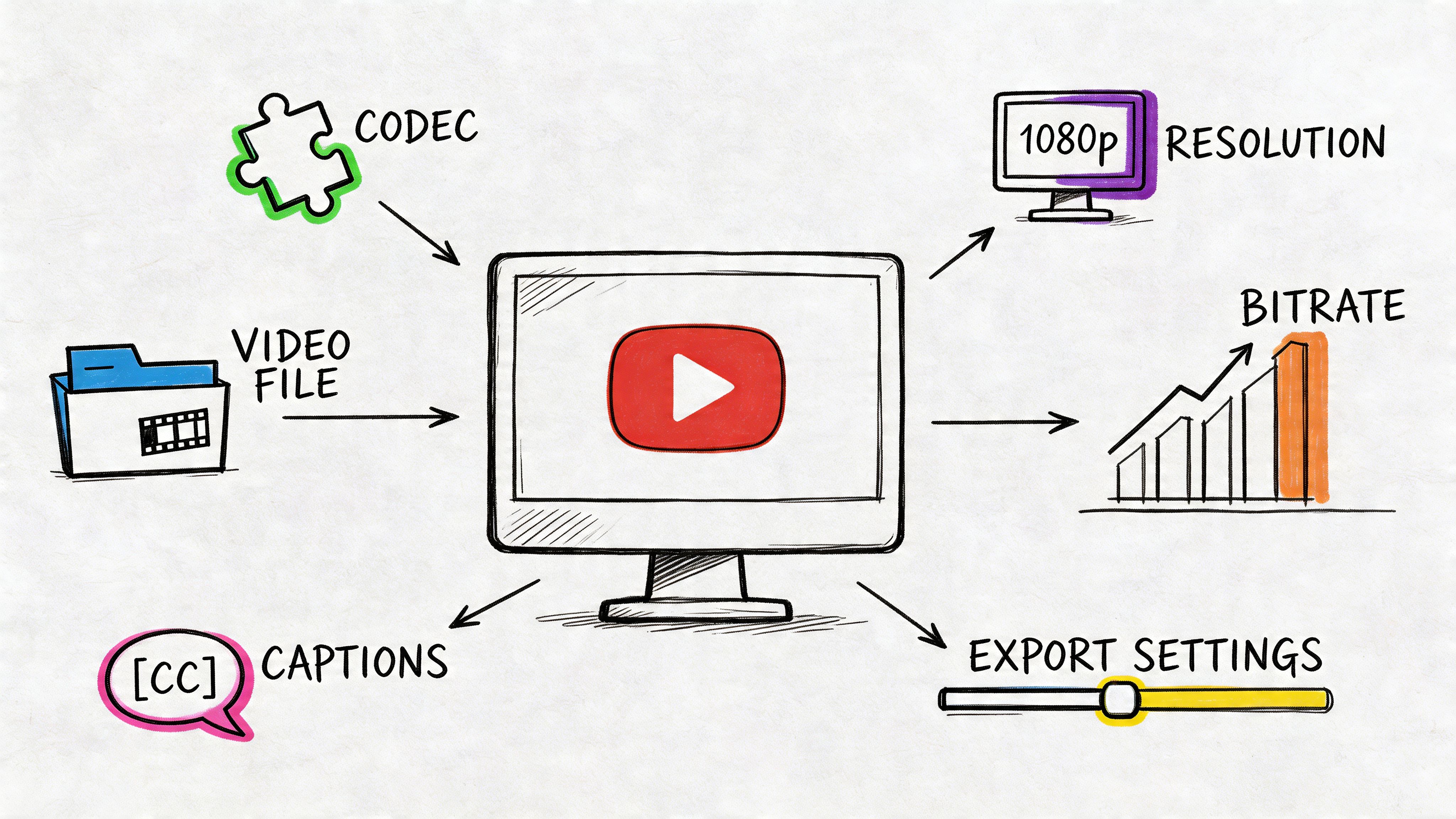

Today’s systems have moved far beyond simple keyword matching to focus on genuine understanding. A modern IR system is expected to:

- Interpret User Intent: It works to figure out the real goal behind your search, even if you use vague terms or synonyms.

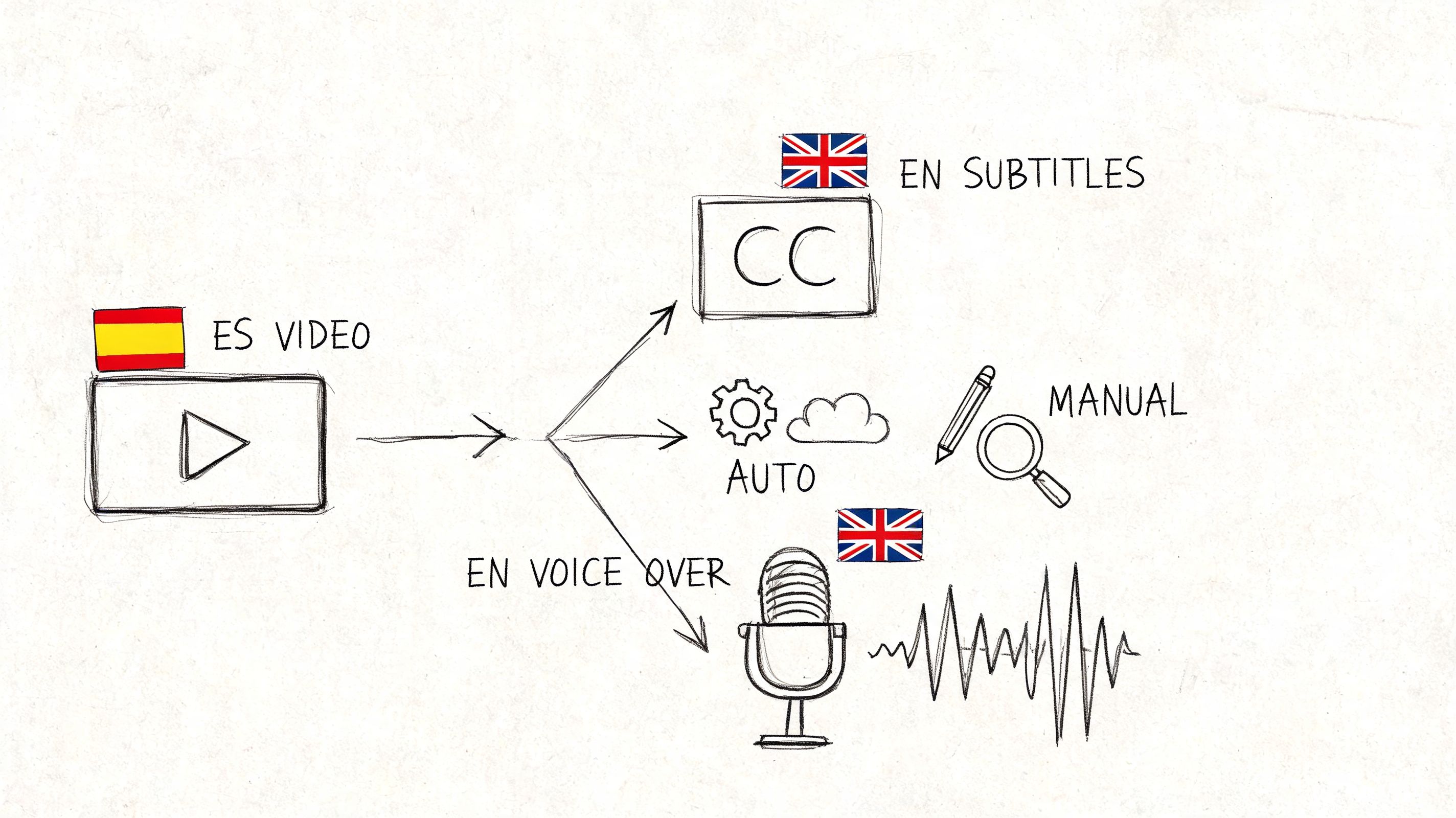

- Search Diverse Data: It can sift through practically anything—web pages, text documents, emails, and even audio files.

- Rank for Relevance: This is the most critical part. Instead of just dumping every document that contains your keyword, it intelligently ranks the results, putting the most useful ones at the top.

An information retrieval system doesn't just find documents; it finds the right documents. Its job is to prioritize relevance and context, turning raw data into knowledge you can actually use.

To give you a clearer picture, the table below breaks down the main jobs an IR system juggles every time you hit "search."

Core Functions of an Information Retrieval System

Ultimately, these four functions work in concert to deliver a seamless experience.

This ability to wrangle unstructured information and deliver ranked, relevant results is what makes IR systems so indispensable. They are the reason you can pinpoint a key decision from hours of meeting recordings or find that one critical file buried deep within a company-wide server.

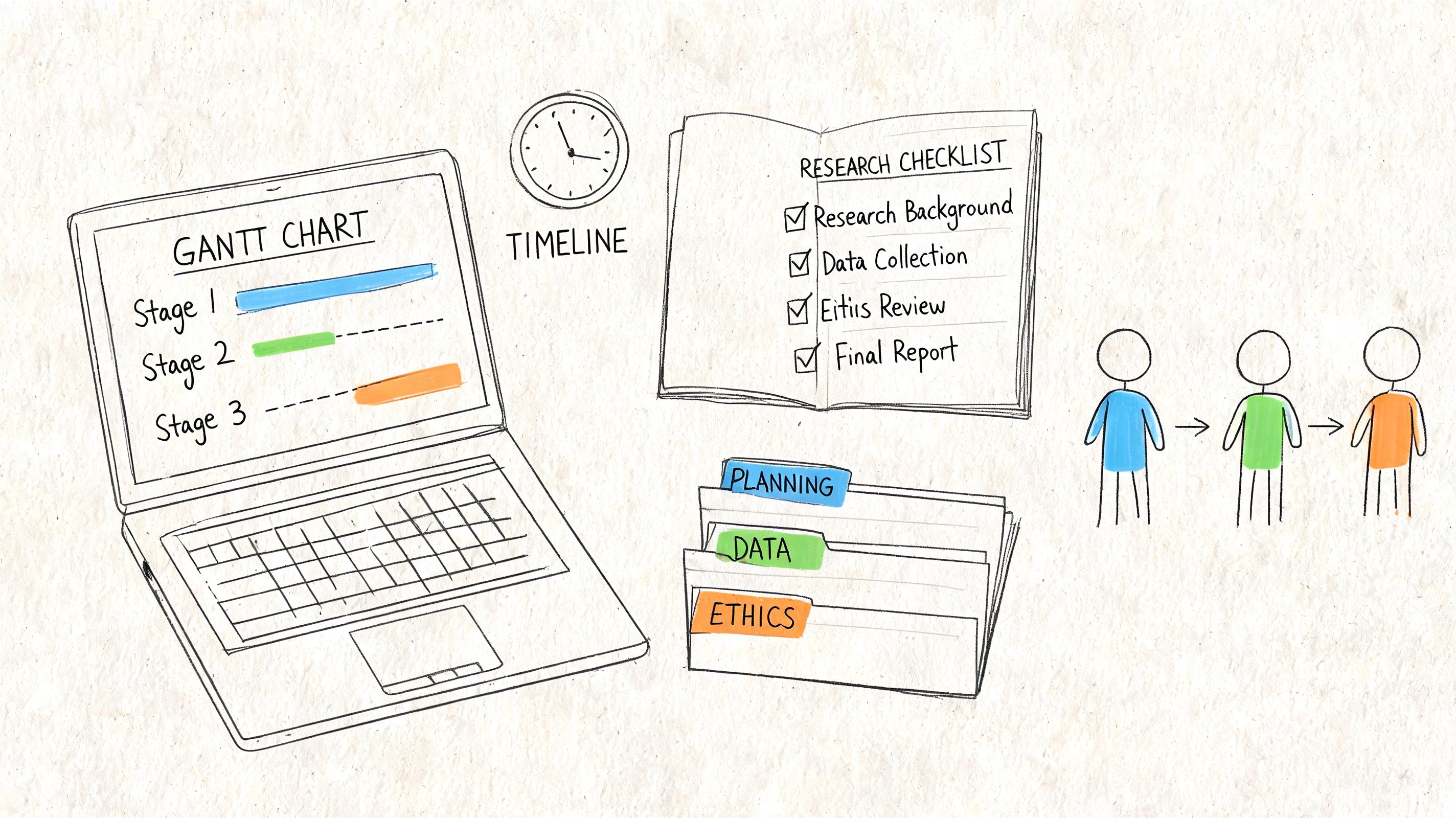

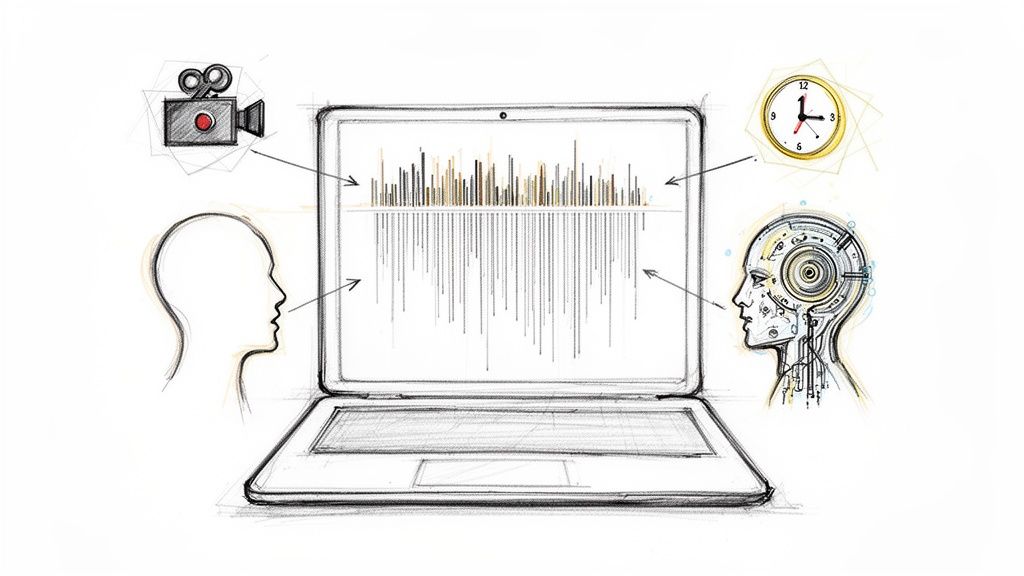

The 4 Core Stages of Information Retrieval

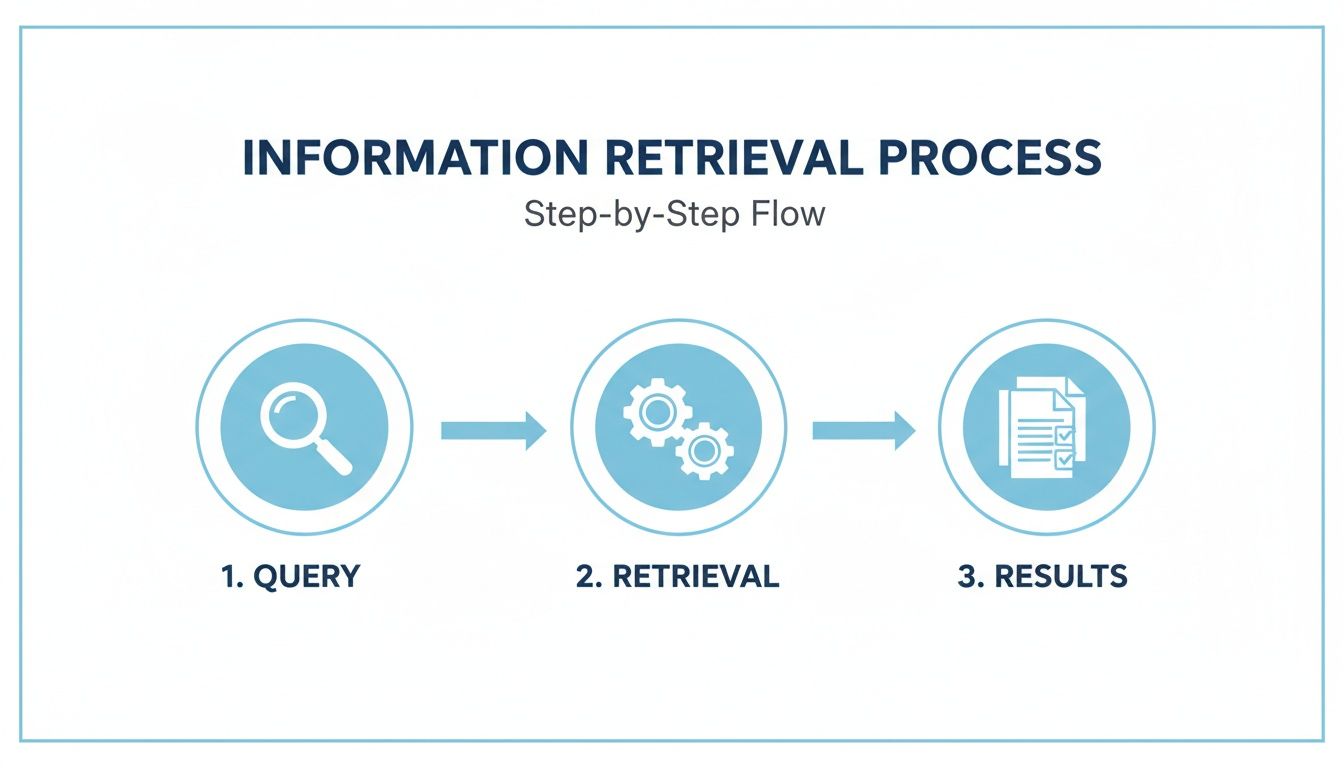

Ever wonder what happens in the milliseconds between you hitting "search" and getting a list of results? It’s not magic. At its core, every information retrieval system follows a logical, four-stage process that turns a simple question into a ranked list of useful answers.

Think of it like a super-fast librarian. Each stage builds on the last to make sure you find exactly what you need without having to hunt for it.

While this diagram looks simple, there’s a lot of heavy lifting happening behind the scenes. Let's break down the four key stages that make it all possible.

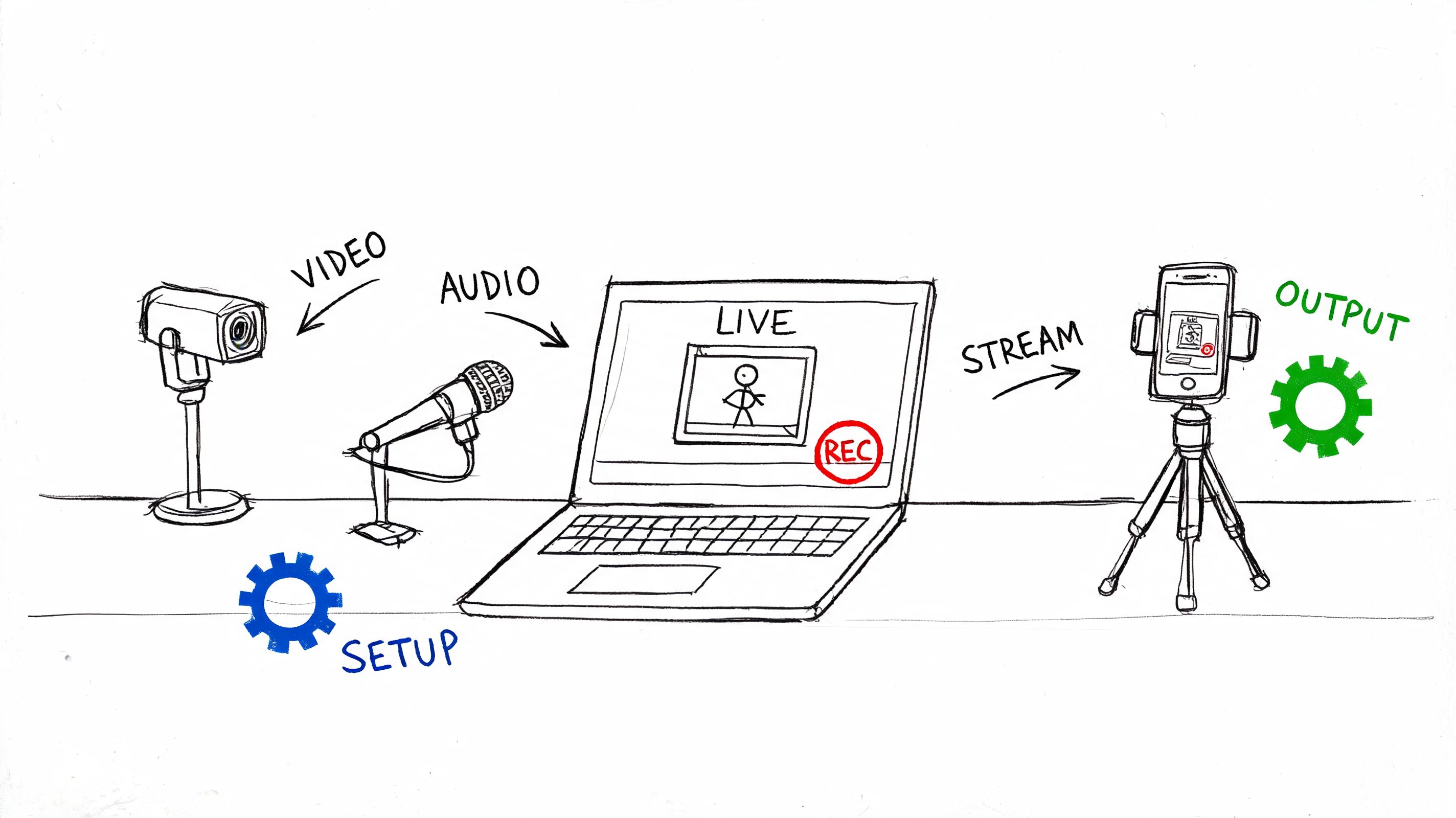

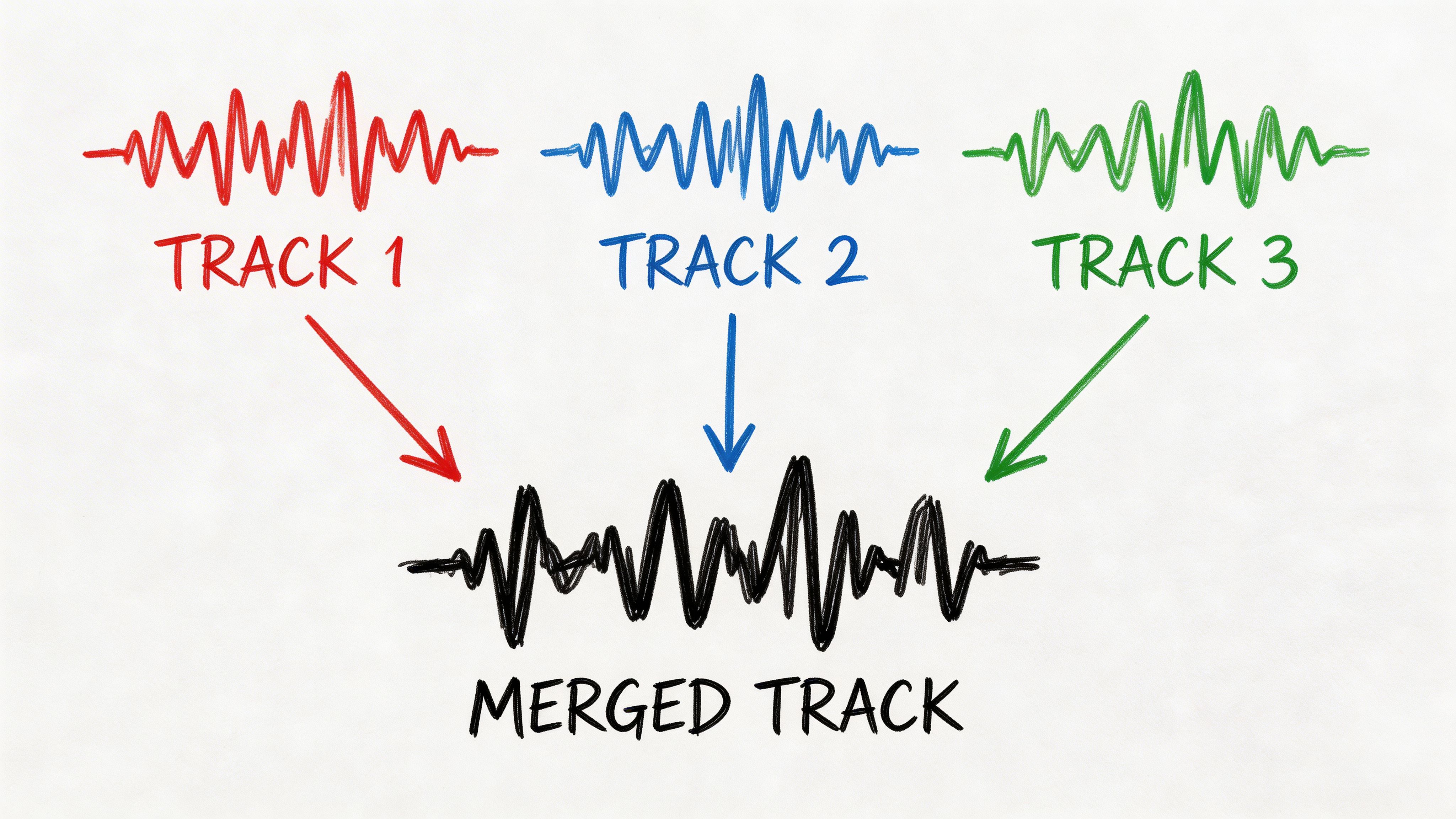

Stage 1: Data Acquisition (Crawling)

First things first, a search system has to collect the information it's going to search. This is the crawling phase—our librarian’s mission to gather every book, journal, and paper to fill the library's shelves.

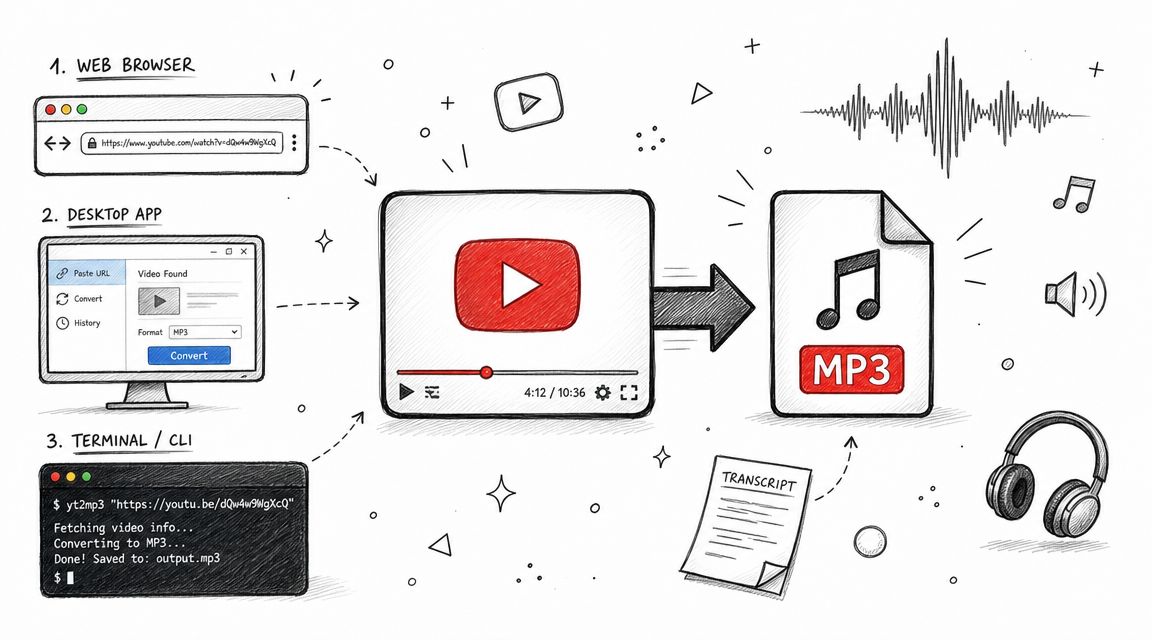

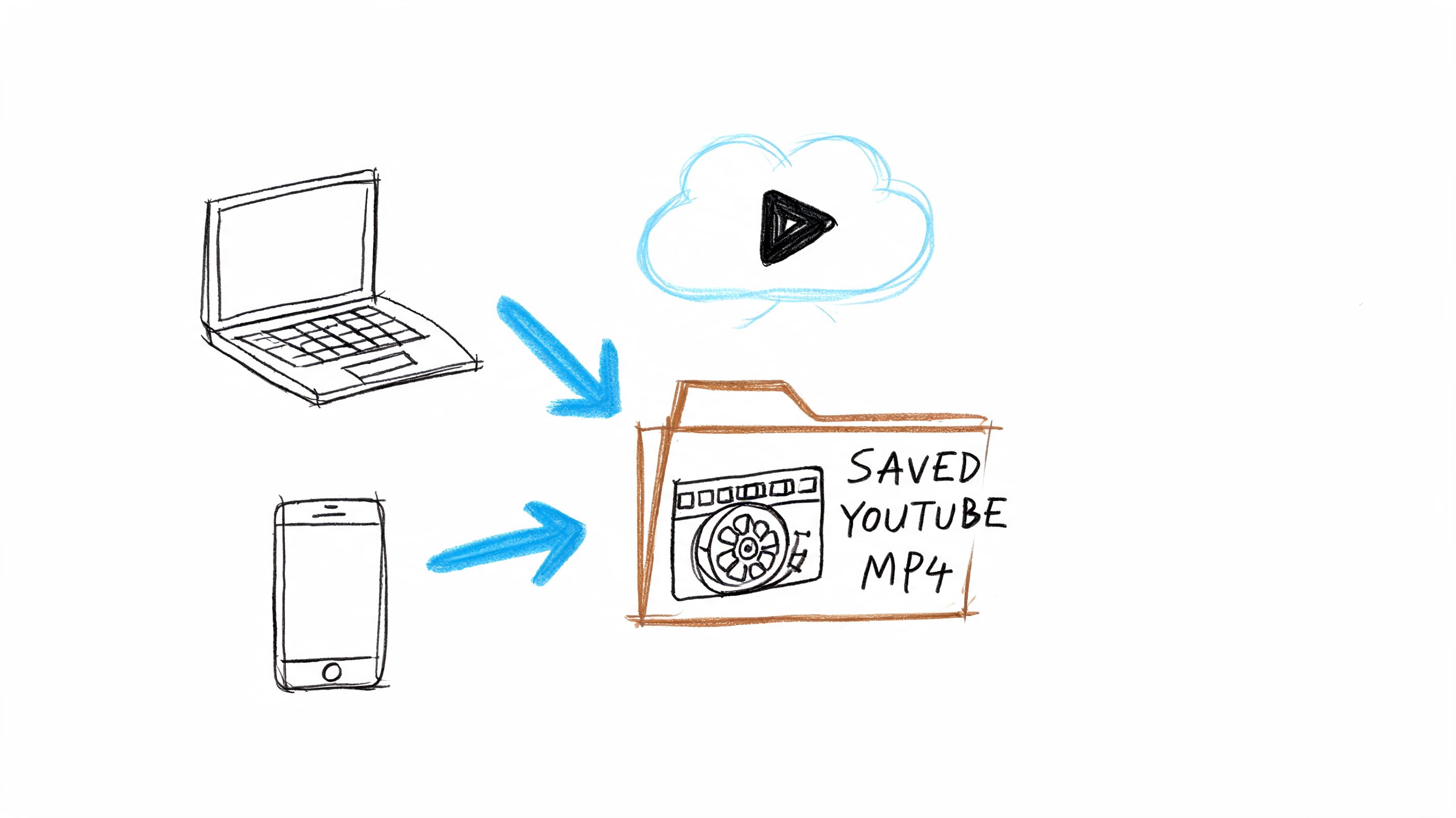

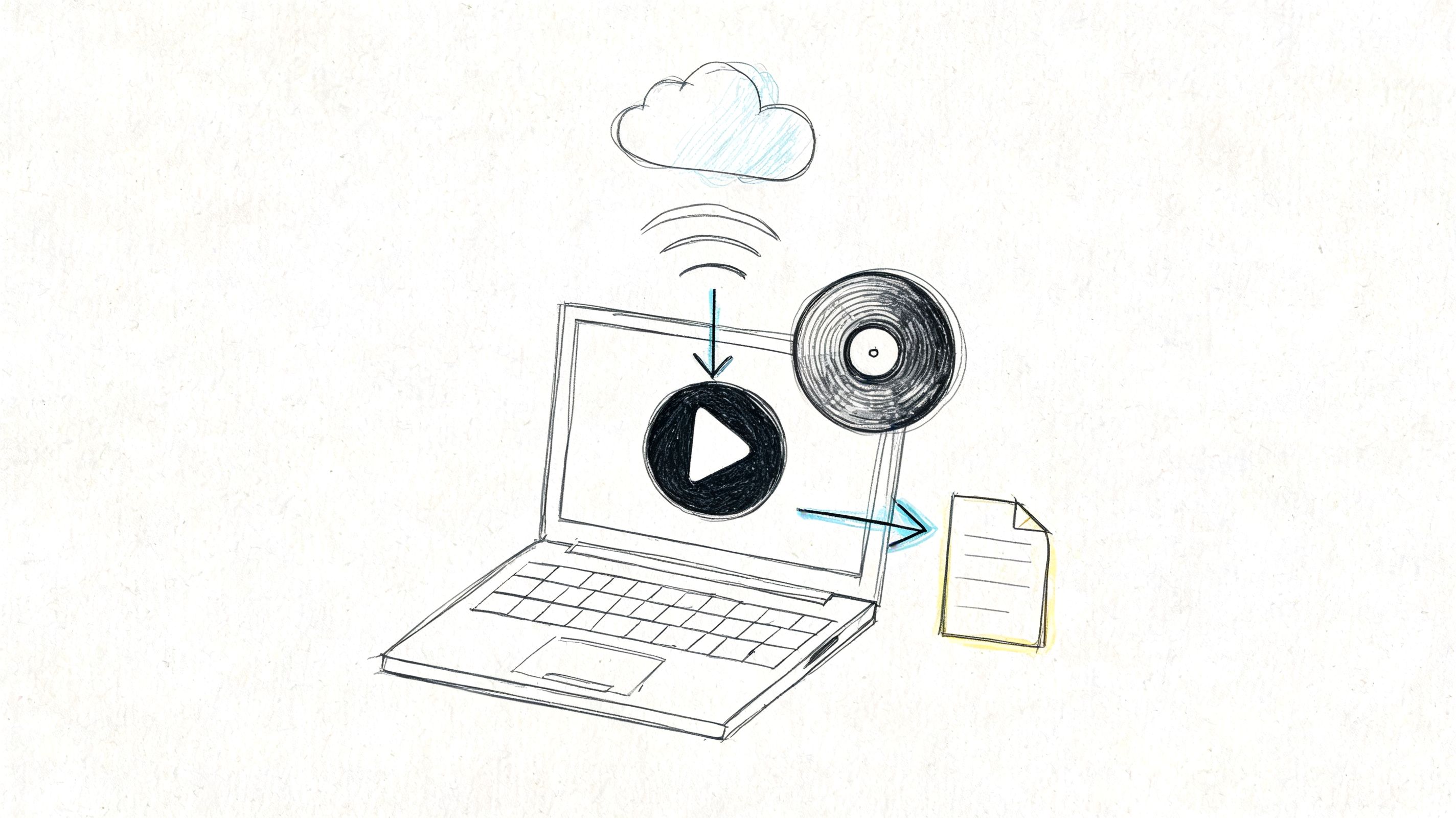

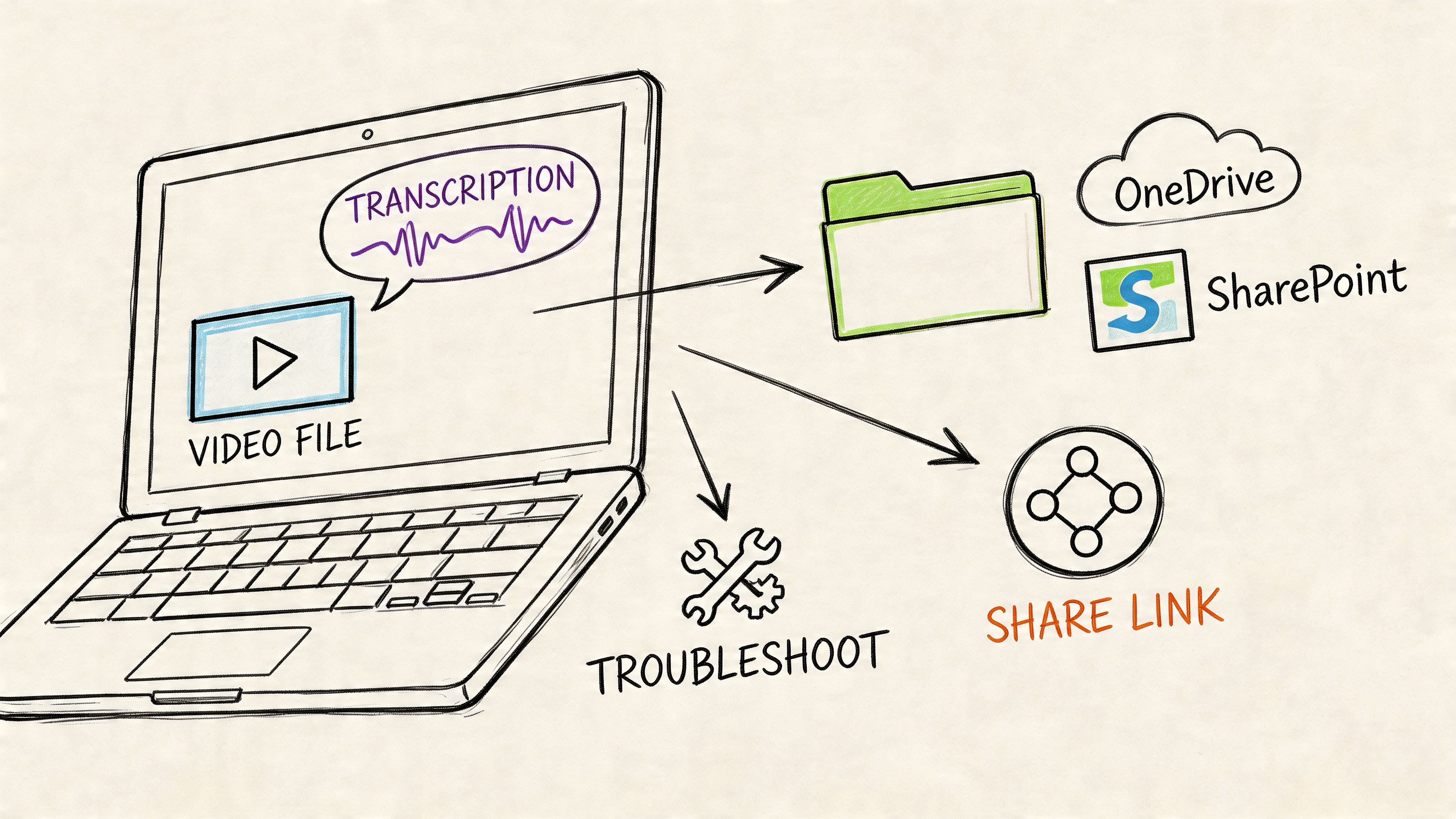

For a web search engine, this means using programs called crawlers (or spiders) to constantly browse the internet, discovering and downloading web pages. In a business setting, the system might connect to internal drives, cloud storage, or databases to pull in all the company's documents. For a tool like HypeScribe, it means ingesting the audio and video files from your meetings.
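As a rough illustration of the crawling idea, here is a minimal sketch of a breadth-first crawler. To keep it self-contained, it walks a hypothetical in-memory `PAGES` dictionary instead of fetching real URLs; the page contents, the `LinkExtractor` class, and the `crawl` function are all assumptions made for this example, not part of any real search engine.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical in-memory "web": URL -> HTML. A real crawler would fetch over HTTP.
PAGES = {
    "/home": '<a href="/docs">Docs</a> <a href="/blog">Blog</a>',
    "/docs": '<a href="/home">Home</a> <a href="/docs/api">API</a>',
    "/blog": '<a href="/docs">Docs</a>',
    "/docs/api": "No outgoing links here.",
}


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed, pages):
    """Breadth-first crawl from `seed`; returns URLs in the order they were downloaded."""
    seen = {seed}
    queue = deque([seed])
    order = []
    while queue:
        url = queue.popleft()
        html = pages.get(url)
        if html is None:  # dead link: nothing to download
            continue
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for link in parser.links:
            if link not in seen:  # never queue the same page twice
                seen.add(link)
                queue.append(link)
    return order


print(crawl("/home", PAGES))  # ['/home', '/docs', '/blog', '/docs/api']
```

The `seen` set is the important design choice: without it, pages that link back to each other would keep the crawler looping forever.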

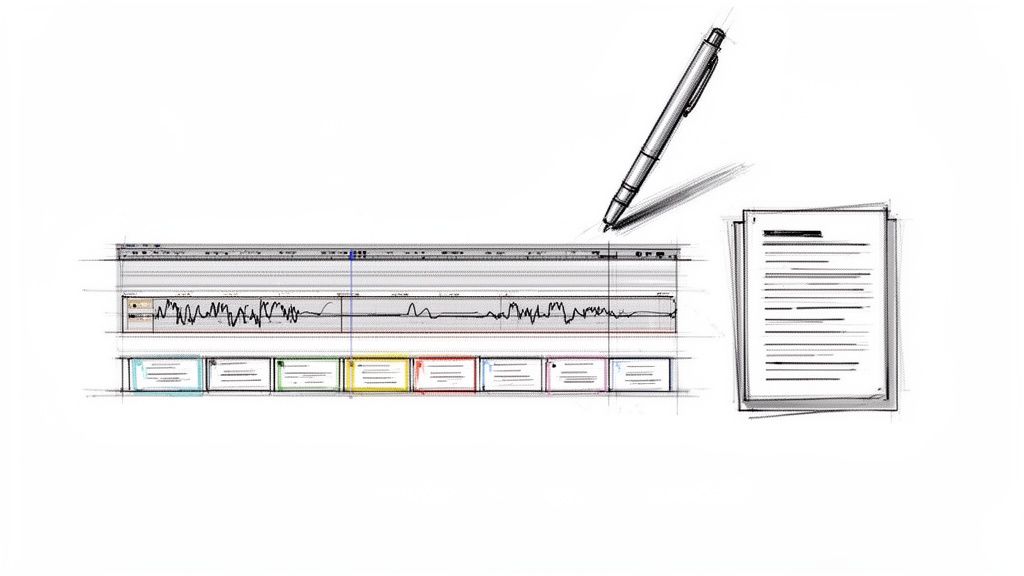

Stage 2: Indexing

Once all the data is collected, it's a chaotic mess. The next step, indexing, is to organize it all so it can be searched in an instant. This is where the real speed of modern search comes from.

Think of the index at the back of a textbook. Instead of reading the whole book to find a specific term, you just look it up in the index and jump straight to the right page. An IR system's index does the same thing on a massive scale, mapping every significant word back to the exact documents where it appears. This allows it to find what you're looking for in milliseconds.

Indexing is what turns a messy pile of digital documents into a neatly organized, searchable library. Without a good index, even the most powerful system would be too slow to be useful.

This process is critical for any tool that needs to find information quickly. For example, if you’re trying to recall a specific detail from a past project, an indexed archive of your meeting minutes can be a lifesaver, letting you pinpoint information instantly.
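The textbook-index idea can be sketched in a few lines. This is a minimal inverted index, assuming a tiny hypothetical collection (`DOCS`) and a simplistic `tokenize` helper; real systems also drop very common words and store positions and counts, which this sketch omits.

```python
import re
from collections import defaultdict

# Hypothetical mini-collection: document ID -> text.
DOCS = {
    1: "Q3 budget review with the marketing team",
    2: "Marketing kickoff and hiring plan",
    3: "Budget approval for Q3 hiring",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Map each term to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


index = build_index(DOCS)
print(sorted(index["budget"]))     # [1, 3]
print(sorted(index["marketing"]))  # [1, 2]
```

Looking up a term is now a single dictionary access, no matter how many documents were indexed, which is where the speed comes from.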

Stage 3: Query Processing

Now that the library is built and organized, the system is ready for your question. When you type in a search, the query processing stage begins. This is our librarian figuring out what you really mean, even if your query is a little vague, has a typo, or uses different phrasing than the source documents.

The system refines your search term before it even starts looking. It typically performs a few key actions:

- Tokenization: Breaking your search phrase down into individual words or "tokens."

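- Stemming & Lemmatization: Reducing words to a common base form. Stemming strips suffixes (so "running" and "runs" become "run"), while lemmatization uses vocabulary knowledge to map irregular forms such as "ran" to "run" as well.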

- Synonym Expansion: Adding related terms to broaden the search (so a query for "car" might also look for "automobile").

This step ensures the system is searching for the intent behind your words, not just the literal characters you typed.
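The three actions above can be sketched as a small pipeline. This is a toy version under stated assumptions: the `SYNONYMS` table is hand-written for illustration, and `stem` is a deliberately crude suffix stripper, not a real stemming algorithm such as Porter's.

```python
import re

# Hypothetical synonym table; real systems use curated or learned thesauri.
SYNONYMS = {"car": {"automobile"}}
SUFFIXES = ("ing", "es", "s")


def tokenize(query):
    """Lowercase the query and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", query.lower())


def stem(token):
    """Toy stemmer: strip one common suffix, then undouble a final consonant."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            token = token[: -len(suffix)]
            # "running" -> "runn" -> "run" (keep doubles like "ss" and "ll")
            if len(token) >= 2 and token[-1] == token[-2] and token[-1] not in "aeiouls":
                token = token[:-1]
            break
    return token


def process_query(query):
    """Tokenize, stem, and expand a query into a set of search terms."""
    terms = set()
    for token in tokenize(query):
        root = stem(token)
        terms.add(root)
        terms |= SYNONYMS.get(root, set())
    return terms


print(sorted(process_query("Cars running")))  # ['automobile', 'car', 'run']
print(sorted(process_query("ran")))           # ['ran']
```

The second call shows the limit of suffix stripping: "ran" is left untouched, which is exactly the kind of irregular form that needs a dictionary-based lemmatizer.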

Stage 4: Ranking

Finally, the system has a list of all the documents that match your processed query. But just dumping them on you would be useless. The last and most important stage is ranking.

This is where the system acts like a great librarian who doesn't just point you to the right aisle but hands you the single most helpful book first.

Ranking algorithms sort the results from most to least relevant, saving you the effort of digging through noise. They analyze hundreds of signals—like how often your keyword appears, the authority of the document, and even how other users have interacted with that result—to give each item a score.

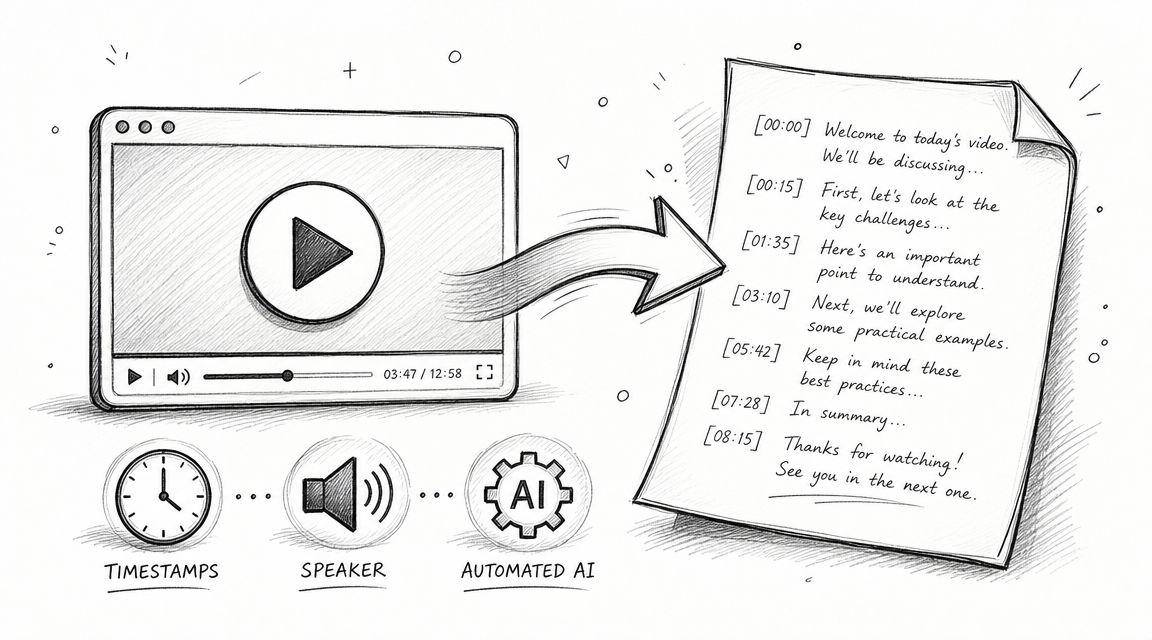

For HypeScribe, this means when you search for "Q3 budget," the system doesn't just show you every single time those words were spoken. It ranks the results to show you the exact moments in meetings where the budget was discussed in the most detail, putting the most important conversations right at the top.

A Look Inside Different Information Retrieval Models

If an information retrieval system is like a digital librarian, then the retrieval model is its brain. It's the core logic—the specific method of thinking—that the system uses to connect your query to the right documents. This is what separates a search that feels uncannily smart from one that completely misses the point.

These models aren't one-size-fits-all. Each has its own way of defining "relevance," with unique strengths and trade-offs. The best model for a legal database, for instance, is totally different from the one that powers a semantic search tool like HypeScribe.

Let's unpack four of the most common models to see how they shape your search experience.

The Boolean Model: The Strict Rule-Follower

The Boolean model is the oldest and most literal of the group. It operates on a simple, black-and-white logic using operators like AND, OR, and NOT. It finds documents that match your keywords exactly as you’ve specified.

Imagine telling a librarian, "Bring me every book with 'marketing' AND 'budget' in the title, but NOT if it mentions '2023'." They would follow your command precisely, with no room for interpretation. A book titled "Financial Planning for Marketing Teams" wouldn't make the cut, because the word "budget" never appears in its title, no matter how relevant the book is.

This all-or-nothing approach is its biggest weakness. It can't grasp context or synonyms. But for expert users who need absolute precision, like lawyers or researchers, that rigid control is exactly what they want.
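Boolean retrieval maps naturally onto set operations over an inverted index. The sketch below reruns the librarian's request on a hypothetical list of titles (`TITLES` and `build_index` are illustrative names): AND becomes set intersection and NOT becomes set difference.

```python
# Hypothetical catalogue: book ID -> title.
TITLES = {
    1: "Marketing Budget Basics",
    2: "Financial Planning for Marketing Teams",
    3: "Marketing Budget Report 2023",
    4: "Engineering Budget Handbook",
}


def build_index(titles):
    """Map each lowercase title word to the set of book IDs containing it."""
    index = {}
    for doc_id, title in titles.items():
        for term in title.lower().split():
            index.setdefault(term, set()).add(doc_id)
    return index


index = build_index(TITLES)
empty = set()

# 'marketing' AND 'budget' NOT '2023'
result = (index.get("marketing", empty) & index.get("budget", empty)) - index.get("2023", empty)
print(sorted(result))  # [1]
```

Book 2 is excluded even though it is clearly on topic, which is the all-or-nothing behavior described above.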

The Vector Space Model: The Conceptual Thinker

The Vector Space Model (VSM) is where things get much more interesting. Instead of treating a match as all-or-nothing, it converts both your query and the documents into numerical representations, called vectors, and plots them in a high-dimensional space, so a document can match a query partially and be scored by degree.

The closer two vectors are in that space, the more similar the query and the document are. In the classic form, each dimension is a term, so closeness reflects shared, heavily weighted words. When the vectors are instead learned embeddings, as in modern semantic search, a search for "managing project finances" can find documents about "budgeting," "cost control," and "financial oversight," even if your exact words aren't there. The system captures the idea, not just the terms.

This leap forward was driven by necessity. The core ideas for VSM originated in the 1960s with the SMART system and were later refined with modern techniques. As smartphones and social media created zettabytes of new data, simple keyword matching failed on an estimated 60-80% of more complex queries. Today's systems use term-weighting schemes like TF-IDF, which scores a word higher when it appears often in a document but rarely across the collection, and which can boost precision by up to 25% by figuring out which words are important and which are just noise. You can dive deeper into the history of information retrieval models to see how these ideas evolved.
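To make the vector idea concrete, here is a minimal sketch of classic term-based VSM ranking with TF-IDF weights and cosine similarity. The documents, the smoothed IDF formula, and all function names are assumptions chosen for illustration; libraries differ in the exact weighting they use.

```python
import math
from collections import Counter

# Hypothetical mini-collection: document ID -> text.
DOCS = {
    "a": "project budget and cost control",
    "b": "team offsite travel plans",
    "c": "quarterly budget review",
}


def tokenize(text):
    return text.lower().split()


def idf_table(docs):
    """Smoothed inverse document frequency: rarer terms get larger weights."""
    n = len(docs)
    df = Counter()
    for text in docs.values():
        df.update(set(tokenize(text)))
    return {term: math.log((1 + n) / (1 + count)) + 1 for term, count in df.items()}


def tfidf_vector(text, idf):
    """Sparse vector: term -> (term count * idf). Unknown terms are dropped."""
    counts = Counter(tokenize(text))
    return {t: c * idf[t] for t, c in counts.items() if t in idf}


def cosine(u, v):
    """Cosine of the angle between two sparse vectors."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


idf = idf_table(DOCS)
query_vec = tfidf_vector("project budget", idf)
scores = {d: cosine(query_vec, tfidf_vector(t, idf)) for d, t in DOCS.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking)  # ['a', 'c', 'b']
```

Document "c" still earns a partial score because it shares "budget" with the query, something a strict Boolean AND would have rejected. Note that this term-based version only rewards shared words; matching on meaning requires learned embeddings in place of the TF-IDF vectors.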

A Comparison of Information Retrieval Models

To help you see the differences at a glance, here’s a quick breakdown of how these models stack up against each other.

Each model represents a different philosophy on how to find the right information, from rigid rules to sophisticated guesswork.

The Probabilistic Model: The Smart Guesser

The Probabilistic Model works by playing the odds. It attempts to calculate the probability that any given document will be relevant to a user's query. It's a "smart guesser" that gets better over time by learning from what people actually find useful.

The system starts by making an initial guess about which documents are relevant. Then, as you and others interact with the search results—clicking some links, spending time on a page, and ignoring others—the model updates its understanding.

It’s constantly asking: "Based on this query, what's the likelihood that a person will find this specific document helpful?"

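This feedback loop makes it a dynamic system that adapts to user needs. Feedback data is not required, though: BM25, a well-known ranking function derived from the probabilistic framework, is a workhorse in many modern search engines because it provides a strong relevance baseline from text statistics alone, balancing how often a term appears in a document, how rare the term is across the collection, and how long the document is.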
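Here is a minimal sketch of BM25 scoring, assuming whitespace tokenization, a hypothetical three-document collection, and the commonly used default parameters `k1=1.5` and `b=0.75`. The IDF variant shown (with `+ 1` inside the logarithm) is one common formulation; implementations vary in the details.

```python
import math
from collections import Counter

# Hypothetical mini-collection: document ID -> text.
DOCS = {
    "short": "q3 budget",
    "long": "q3 budget notes plus hiring travel offsite and vendor updates",
    "other": "hiring plan",
}


def tokenize(text):
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with BM25."""
    tokenized = {d: tokenize(t) for d, t in docs.items()}
    n = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized.values()) / n  # average doc length
    df = Counter()
    for toks in tokenized.values():
        df.update(set(toks))

    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            # Rare terms count more than common ones.
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Longer-than-average documents are penalized via b.
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[doc_id] = score
    return scores


scores = bm25_scores("q3 budget", DOCS)
print(sorted(scores, key=scores.get, reverse=True))  # ['short', 'long', 'other']
```

Both "short" and "long" contain each query term once, but the focused two-word document scores higher because BM25 normalizes by document length.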

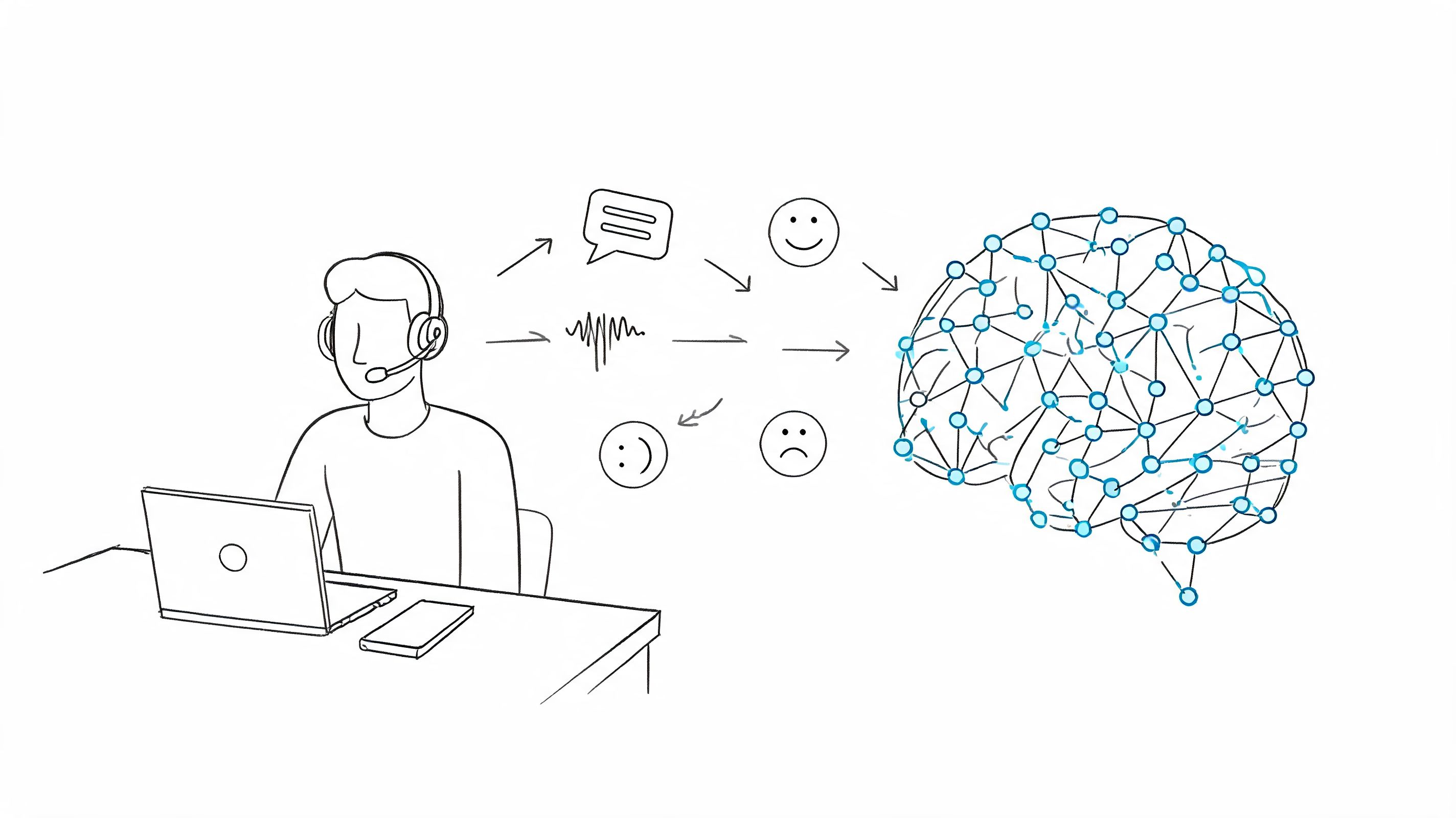

Neural and Learning-to-Rank Models: The AI-Powered Expert

At the top of the pyramid are Learning-to-Rank (LTR) and other neural models. These systems use machine learning, ranging from gradient-boosted decision trees to deep neural networks, to build a sophisticated, nuanced understanding of what "relevance" truly means to a human.

Instead of relying on a single formula, an LTR model is trained on massive amounts of data to weigh hundreds of different signals at once. These can include:

- User Engagement: Clicks, dwell time, bounce rates, and other behavioral signals.

- Document Authority: How fresh, credible, or important the source is.

- Semantic Match: How closely the concepts in the query and document align.

This is the tech that powers the incredibly accurate results you get from Google or specialized tools like HypeScribe. When you search for "key takeaways from Q3 kickoff" in HypeScribe, an LTR model doesn't just look for those words. It analyzes past searches and user behavior to predict which moments in a meeting transcript represent important decisions or action items, delivering an answer that understands your true intent.
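The core idea of learning a ranking function from examples can be shown with a deliberately tiny sketch. It trains a linear scorer with perceptron-style pairwise updates on hypothetical preference pairs; the feature values, the `PAIRS` data, and the function names are all invented for illustration. Production systems typically use far richer models and much more data, and nothing here describes how Google or HypeScribe actually rank results.

```python
# Each item is a feature vector: [engagement, authority, semantic_match],
# with hypothetical values in the range 0..1.


def score(weights, features):
    """Linear relevance score: weighted sum of the signals."""
    return sum(w * x for w, x in zip(weights, features))


def train_pairwise(pairs, n_features, epochs=20, lr=0.1):
    """Nudge the weights whenever a preferred item fails to outscore the other."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for better, worse in pairs:
            if score(weights, better) <= score(weights, worse):
                weights = [w + lr * (b - c) for w, b, c in zip(weights, better, worse)]
    return weights


# Hypothetical training data: (preferred item, less useful item).
PAIRS = [
    ([0.2, 0.5, 0.9], [0.9, 0.5, 0.1]),
    ([0.4, 0.8, 0.7], [0.4, 0.2, 0.3]),
    ([0.1, 0.6, 0.8], [0.7, 0.6, 0.2]),
]

weights = train_pairwise(PAIRS, n_features=3)

candidates = {
    "decision recap": [0.3, 0.7, 0.9],
    "small talk": [0.8, 0.3, 0.1],
}
ranked = sorted(candidates, key=lambda name: score(weights, candidates[name]), reverse=True)
print(ranked)  # ['decision recap', 'small talk']
```

The point of the sketch is the training loop: nobody hand-picks the weights. They are adjusted until the model orders the example pairs the way people preferred, and the learned scorer is then applied to new candidates.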

How Do We Know if an Information Retrieval System is Any Good?

You can build an information retrieval system with elegant models and a lightning-fast index, but if the results it delivers are irrelevant, none of that matters. A system is only as good as its answers. This is why measuring performance isn't just some technical box-checking exercise—it's how we find out if the system is actually working for the people using it.

To do this, we rely on a handful of core metrics that judge the quality and relevance of search results. These are the very same measurements that engineers at places like Google and HypeScribe use to constantly tune their algorithms and make search better.

Precision: Are the Results on Point?

The first and most intuitive metric is precision. It answers a straightforward question: Of all the results the system gave me, how many were actually relevant?

Think of it like fishing for salmon. You cast your net and pull up 10 fish. Inside, you find 8 salmon and 2 trout. Your precision is 80%. You mostly got what you wanted, but you also caught some things you had to throw back.

In an information retrieval system, high precision means users aren't wasting their time sifting through noise. The results are clean and on-topic, which is absolutely critical for building trust.

This focus on accuracy is fundamental. When you search your company's meeting archive for "Q3 marketing budget" using a tool like HypeScribe, you need to find the specific moments where that budget was discussed—not every meeting that happened to mention the word "marketing." High precision ensures the top results are genuinely the ones you're looking for.

Recall: Did We Miss Anything Important?

While precision is all about the quality of what you found, recall is about quantity and completeness. It answers a different but equally crucial question: Of all the relevant documents that exist in the entire collection, what percentage did the system actually find?

Let's go back to our fishing trip. Suppose you know for a fact there are 16 salmon in that lake. If you caught 8 of them, your recall is 50%. You found half of the available salmon, which means you completely missed the other half.
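The fishing numbers can be checked with a short sketch. The `precision` and `recall` helpers below are illustrative names; each takes the set of retrieved items and the set of truly relevant items.

```python
def precision(retrieved, relevant):
    """Share of retrieved items that are relevant."""
    if not retrieved:
        return 0.0
    return len(retrieved & relevant) / len(retrieved)


def recall(retrieved, relevant):
    """Share of all relevant items that were retrieved."""
    if not relevant:
        return 0.0
    return len(retrieved & relevant) / len(relevant)


# 16 salmon live in the lake; the net holds 8 of them plus 2 trout.
relevant = {f"salmon_{i}" for i in range(16)}
retrieved = {f"salmon_{i}" for i in range(8)} | {"trout_0", "trout_1"}

print(precision(retrieved, relevant))  # 0.8
print(recall(retrieved, relevant))     # 0.5
```

Both metrics share the same numerator, the 8 salmon caught. Only the denominator changes: everything in the net for precision, everything in the lake for recall.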

These concepts have been around for a long time. The challenge of measuring relevance dates back to the very beginning of machine-based retrieval. Back in 1955, a team led by Allen Kent formally introduced precision and recall as evaluation standards. This idea was a game-changer, paving the way for systems like MEDLARS, which went into operation at the National Library of Medicine in 1964 and cut medical literature search times from days to hours. You can read more about the early days of these metrics and their impact on information retrieval history.

A system with high recall is comprehensive. It gives you confidence that you haven't missed a critical piece of information. But there's almost always a trade-off:

- High Precision, Low Recall: You get very few results, but they are all spot-on.

- Low Precision, High Recall: You get a flood of results that includes everything relevant, but it's buried in a lot of junk.

The sweet spot, of course, is a balance of both—giving users a high number of relevant results without making them dig for the good stuff.

Beyond Precision and Recall: Advanced Metrics

Simply knowing if a result is relevant isn't enough in a modern information retrieval system. The order of the results is tremendously important. Let's be honest, most of us rarely click past the first page. This is where more advanced metrics come into play.

Two of the most important are:

- Mean Average Precision (MAP): For each query, this metric averages the precision measured at the rank of every relevant result, then takes the mean of those per-query scores across a set of queries. Because precision is measured at each relevant result's position, a system is heavily penalized for putting relevant documents further down the list. A high MAP score tells you that the system consistently puts the good stuff right at the top where users can see it.

- Normalized Discounted Cumulative Gain (NDCG): This is one of the most sophisticated and widely used metrics today. NDCG not only rewards a system for returning relevant documents but also accounts for graded relevance: some documents are "perfect" answers, while others are just "good." Each result's gain is discounted the further down the list it appears, and the total is normalized against the ideal ordering, so a system gets the highest NDCG score by putting the perfect answers at the very top of the list.

These advanced metrics give developers a much more nuanced picture of performance. They move beyond asking, "Did we find relevant information?" to asking, "Did we find the most relevant information and put it right at the top?" For any serious application, from web search to enterprise tools, this level of detailed measurement is what separates a decent system from a truly great one.
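Both rank-aware metrics fit in a few lines. This is a minimal sketch with illustrative function names. One simplifying assumption is labeled in the code: `average_precision` divides by the number of relevant results that appear in the ranked list, which equals the standard definition only when every relevant document was retrieved.

```python
import math


def average_precision(ranked_relevance):
    """ranked_relevance: 0/1 flags in rank order.
    Assumes all relevant documents appear somewhere in the list."""
    hits, total = 0, 0.0
    for rank, rel in enumerate(ranked_relevance, start=1):
        if rel:
            hits += 1
            total += hits / rank  # precision at this relevant result
    return total / hits if hits else 0.0


def mean_average_precision(runs):
    """Mean of average precision over several queries."""
    return sum(average_precision(r) for r in runs) / len(runs)


def dcg(gains):
    """Discounted cumulative gain: lower ranks contribute less."""
    return sum(g / math.log2(rank + 1) for rank, g in enumerate(gains, start=1))


def ndcg(gains):
    """DCG divided by the DCG of the ideal (best-first) ordering."""
    ideal = dcg(sorted(gains, reverse=True))
    return dcg(gains) / ideal if ideal else 0.0


# Same two relevant results, placed at the top versus the bottom of the list.
print(average_precision([1, 1, 0, 0]))  # 1.0
print(average_precision([0, 0, 1, 1]) < 0.5)  # True

# Graded relevance: 3 = perfect, 2 = good, 0 = irrelevant.
print(ndcg([3, 2, 0]))        # 1.0
print(ndcg([0, 2, 3]) < 1.0)  # True
```

In both comparisons the set of results is identical and only the order changes, which is exactly what plain precision and recall cannot see.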

Real-World Examples of Information Retrieval Systems

Theory is one thing, but seeing information retrieval systems in action is where it all clicks. You probably use these systems dozens of times a day without a second thought. They're the invisible machinery that helps us find what we need, whether we’re searching the entire web or a single conversation.

From Google's global index to your company’s internal wiki, IR systems are incredibly versatile. Let's look at a few examples you’re already familiar with.

Web Search Engines: The Global Library

This is the one everyone knows. Search engines like Google are information retrieval systems operating on a planetary scale. Their job is staggering: crawl, index, and rank trillions of web pages, images, and videos.

When you search for something, you're tapping into a sophisticated mix of vector space models and modern neural networks. The system works to figure out what you really mean, not just the words you typed. It then sifts through the results, weighing hundreds of factors like page authority and content relevance, all to deliver the best answer in a fraction of a second.

Enterprise Search: Unlocking Company Knowledge

Inside any organization, crucial information gets buried in shared drives, chat apps, emails, and intranets. An enterprise search system acts like a master key, providing a single search bar to unlock all of it.

Crucially, these systems are built to respect security. They understand access permissions and ensure employees only find information they’re authorized to see. This is a massive productivity booster. Instead of pinging a coworker for a file you know exists somewhere, you can just find it yourself. To get a better sense of how this works in practice, this article on Enterprise Search with Sitecore Search offers some great insights.

A good enterprise search platform can make a huge difference. If you're looking to centralize your company’s intelligence, our guide on what a knowledge management system is is a great next step.

An effective enterprise search platform can reduce the time employees spend searching for information by up to 35%, directly boosting productivity and preventing duplicate work.

E-commerce Search: Guiding Shoppers to Products

Go to Amazon or eBay, and what’s the first thing you do? You use the search bar. E-commerce search is a highly specialized IR system with a clear mission: connect a shopper’s intent with the right product.

These systems lean heavily on structured data—things like brand, color, size, and user ratings. When you search for "red running shoes size 10," the system isn't just matching keywords. It's filtering by specific attributes and then ranking the results based on sales history, reviews, and relevance to get you exactly what you’re looking for.
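The filter-then-rank pattern described above can be sketched as follows. The product records, the `search` function, and the ranking key (rating first, then sales) are all hypothetical; a real store would blend many more signals.

```python
# Hypothetical product catalogue with structured attributes.
PRODUCTS = [
    {"name": "Road Racer", "category": "running shoes", "color": "red",
     "size": 10, "rating": 4.2, "sales": 900},
    {"name": "Trail Runner", "category": "running shoes", "color": "red",
     "size": 10, "rating": 4.7, "sales": 320},
    {"name": "Court Classic", "category": "tennis shoes", "color": "red",
     "size": 10, "rating": 4.9, "sales": 150},
    {"name": "Trail Runner Blue", "category": "running shoes", "color": "blue",
     "size": 10, "rating": 4.8, "sales": 410},
]


def search(products, **filters):
    """Keep products matching every attribute filter, then rank the survivors."""
    matches = [p for p in products if all(p.get(k) == v for k, v in filters.items())]
    # Illustrative ranking: best rating first, sales as the tie-breaker.
    return sorted(matches, key=lambda p: (p["rating"], p["sales"]), reverse=True)


results = search(PRODUCTS, category="running shoes", color="red", size=10)
names = [p["name"] for p in results]
print(names)  # ['Trail Runner', 'Road Racer']
```

Filtering happens before ranking on purpose: the highly rated tennis shoe never competes for the top spot because it fails the attribute match outright.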

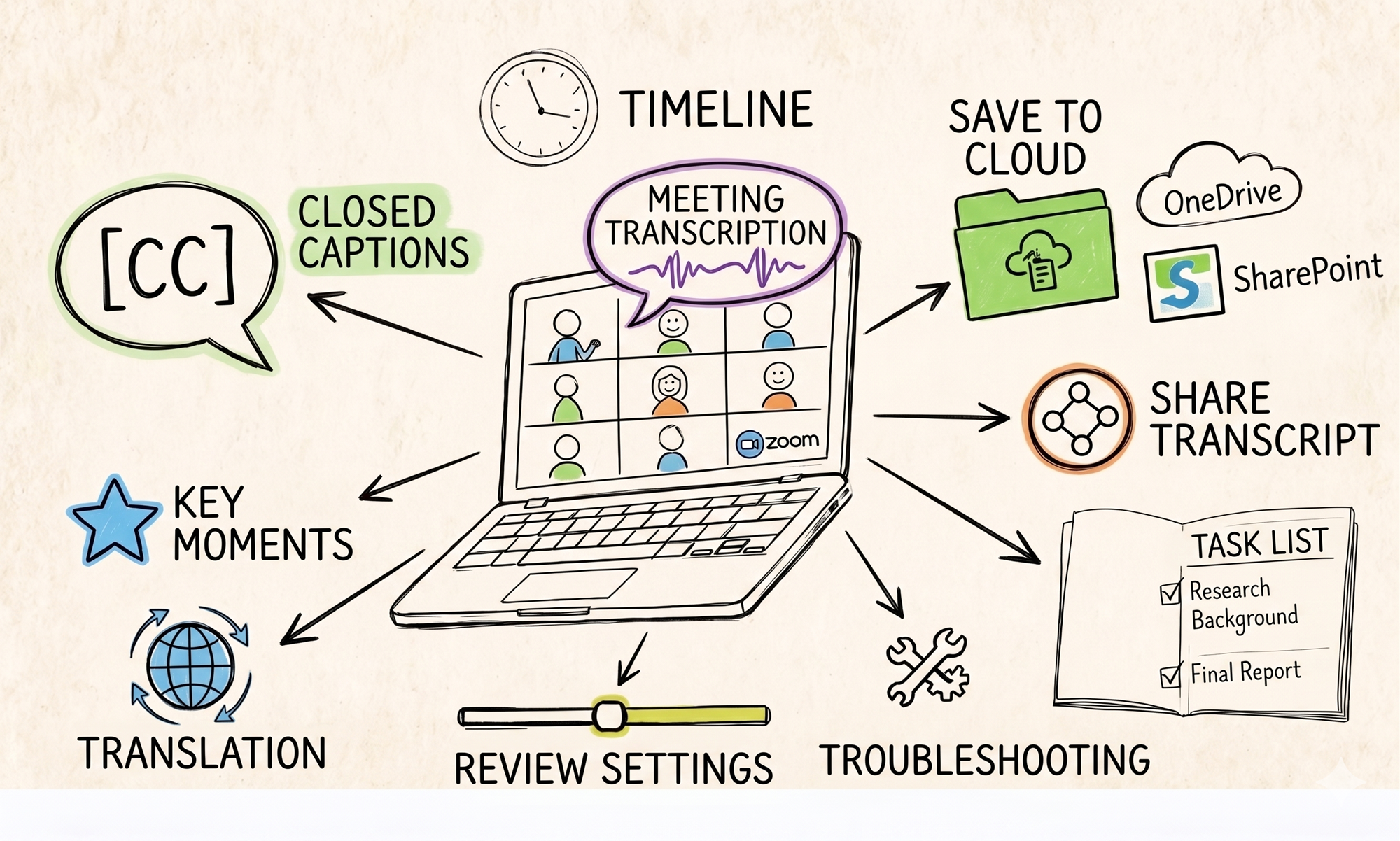

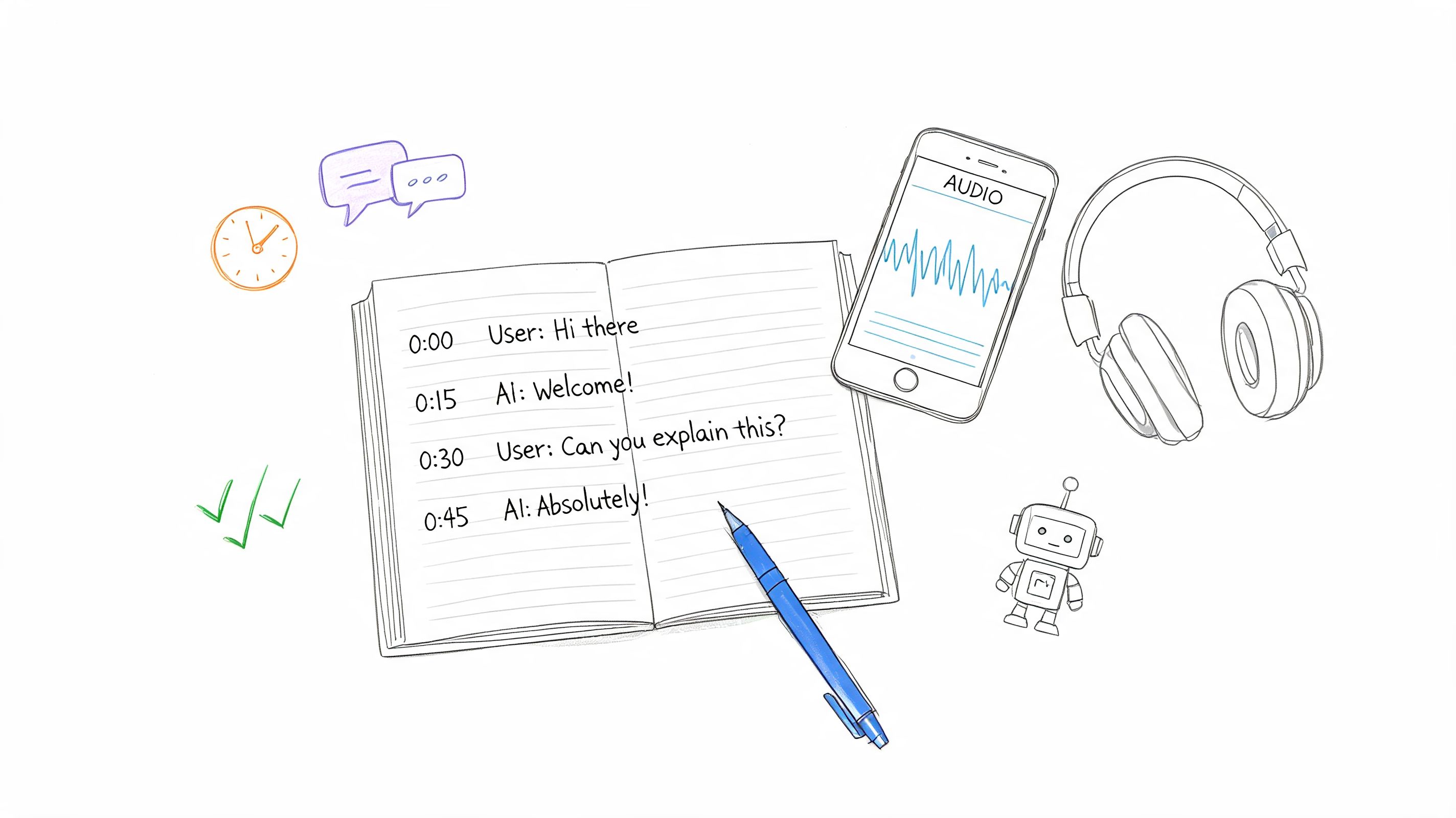

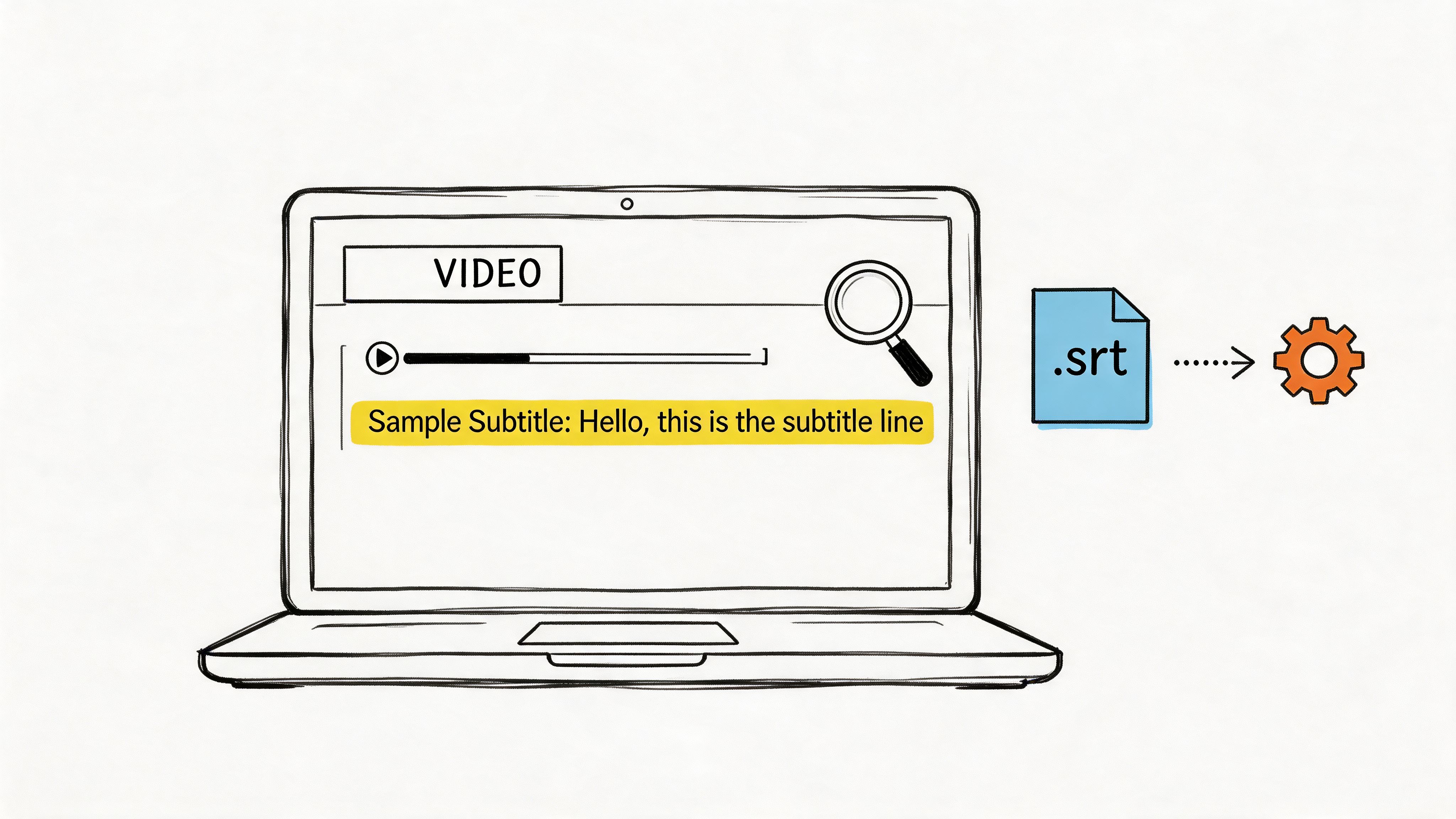

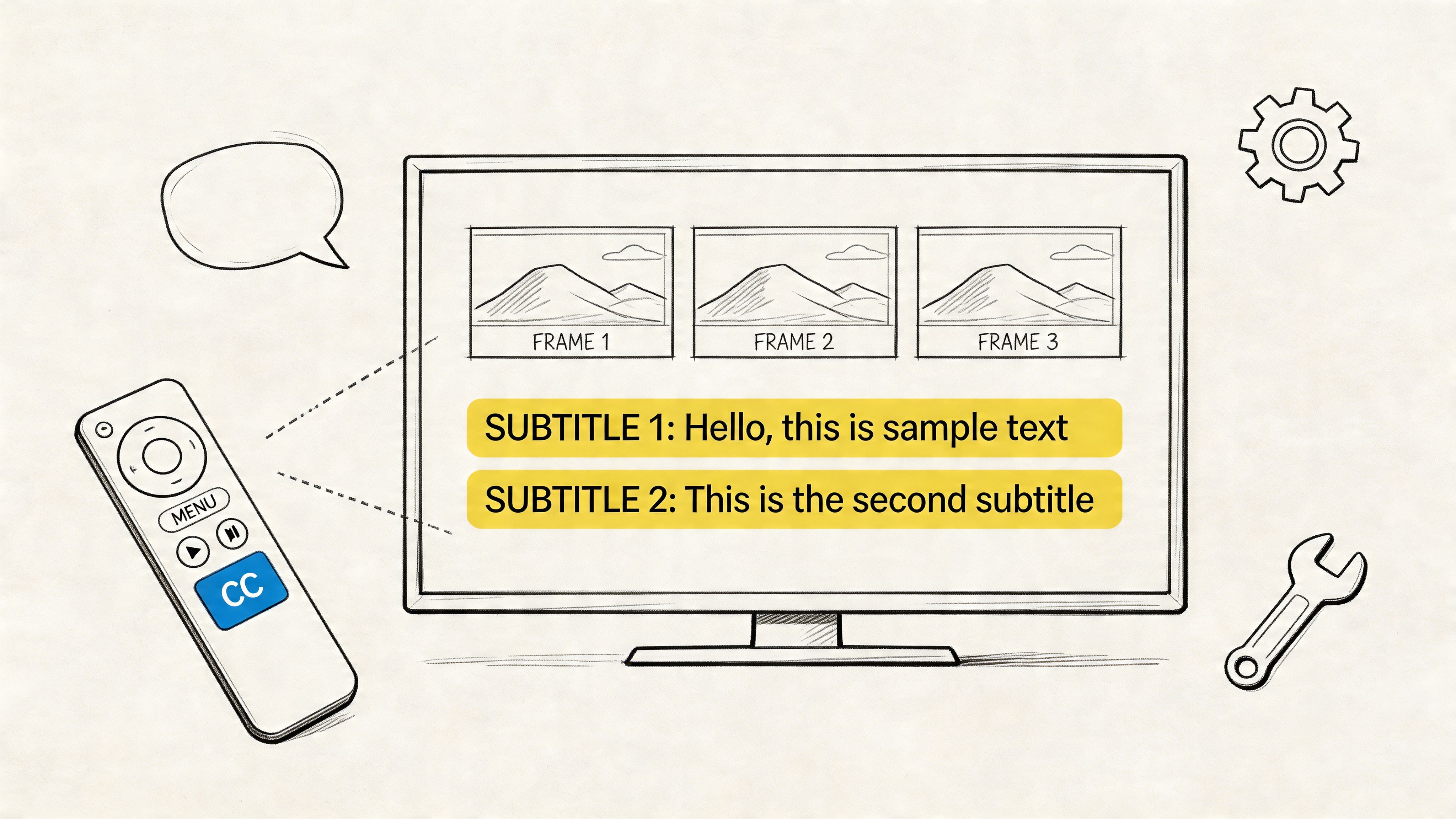

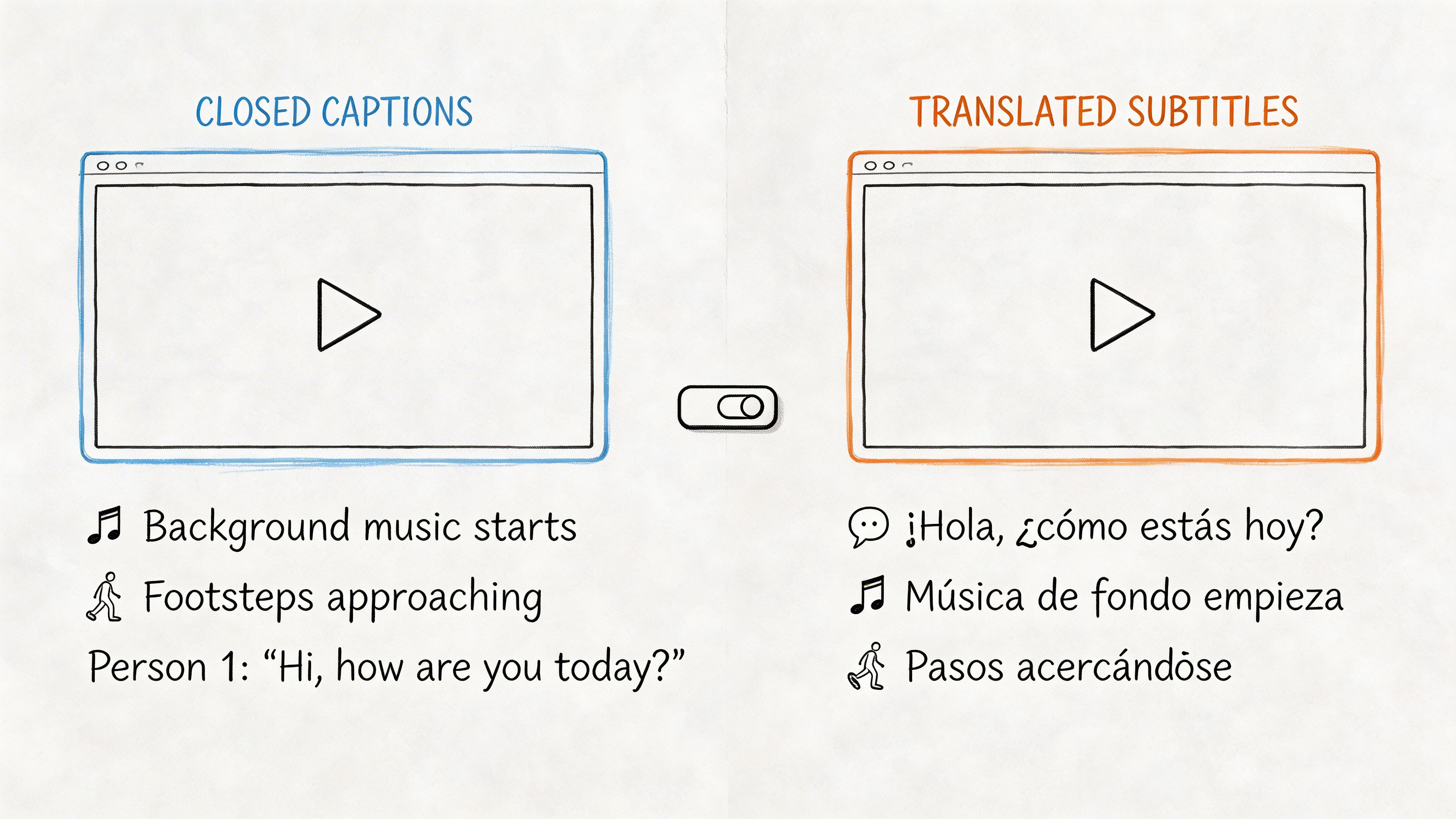

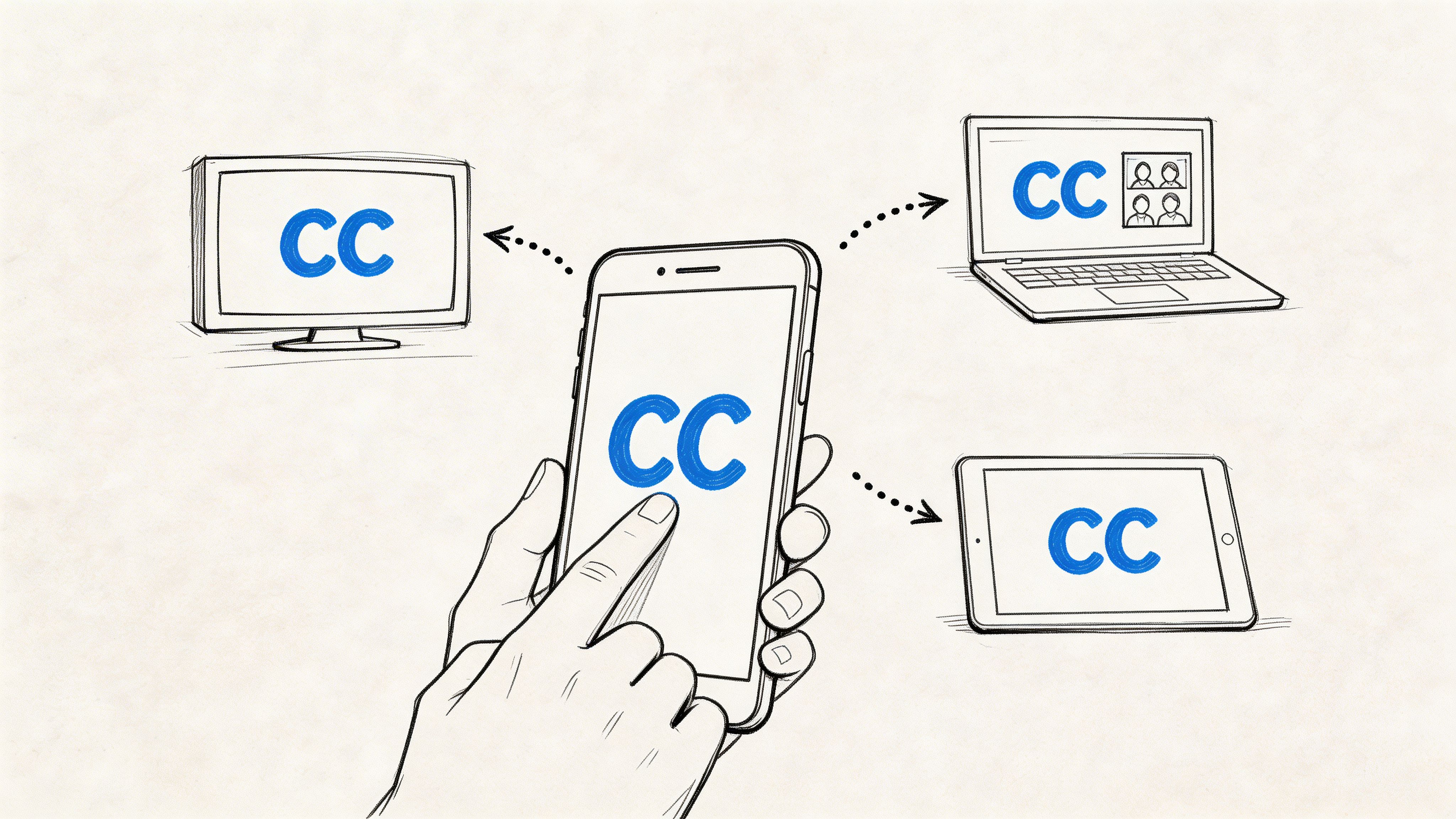

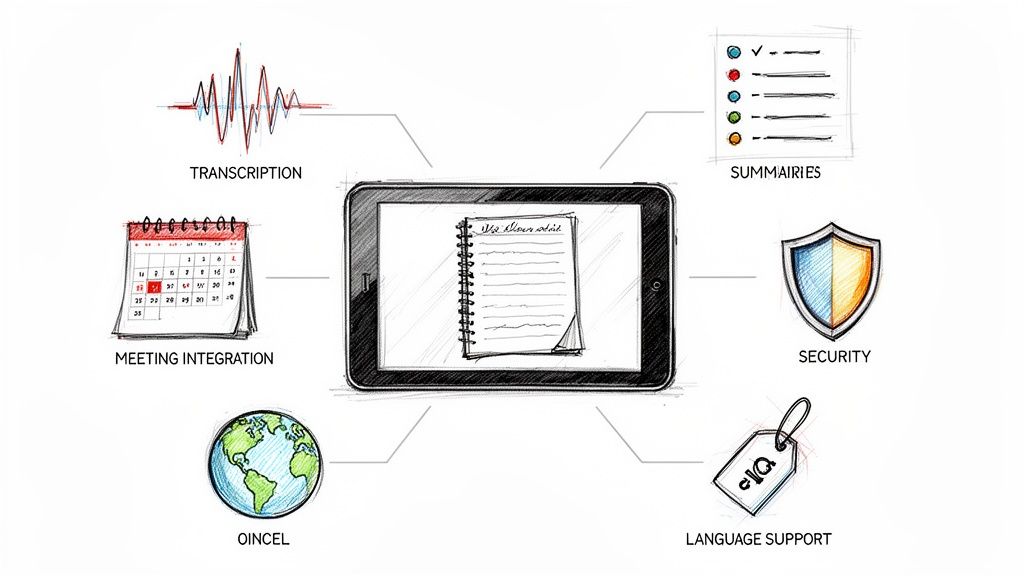

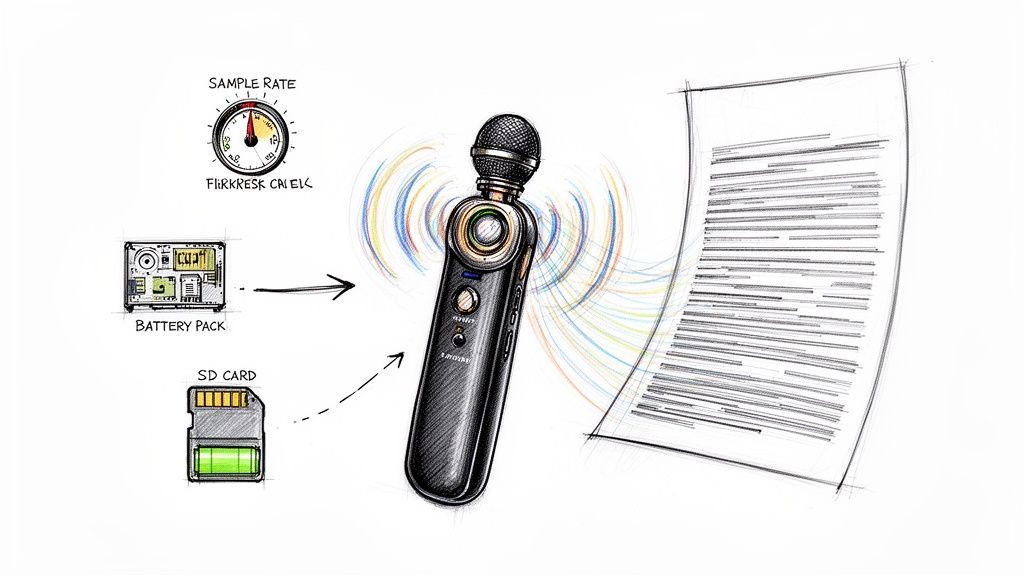

Transcript Search: Finding Moments in Conversations

One of the newest frontiers for IR is searching through spoken words. Tools like HypeScribe are a perfect example. They use an advanced retrieval system to transform hours of recorded meetings into a fully searchable database. It’s a classic IR problem applied to a completely new type of content: human conversation.

A project manager can just type "Q3 marketing action items" and instantly find the exact moments in past meetings where the team discussed that very topic. The system is smart enough to understand context, so it pulls up the most important discussions first. This is a powerful demonstration of how a dedicated IR system can bring clarity to a specific, high-value source of information.

The Future of Information Retrieval and AI

The world of information retrieval is changing fast, and artificial intelligence is in the driver's seat. We are rapidly moving past the days of simple keyword matching and into an era where search is conversational, contextual, and deeply personal.

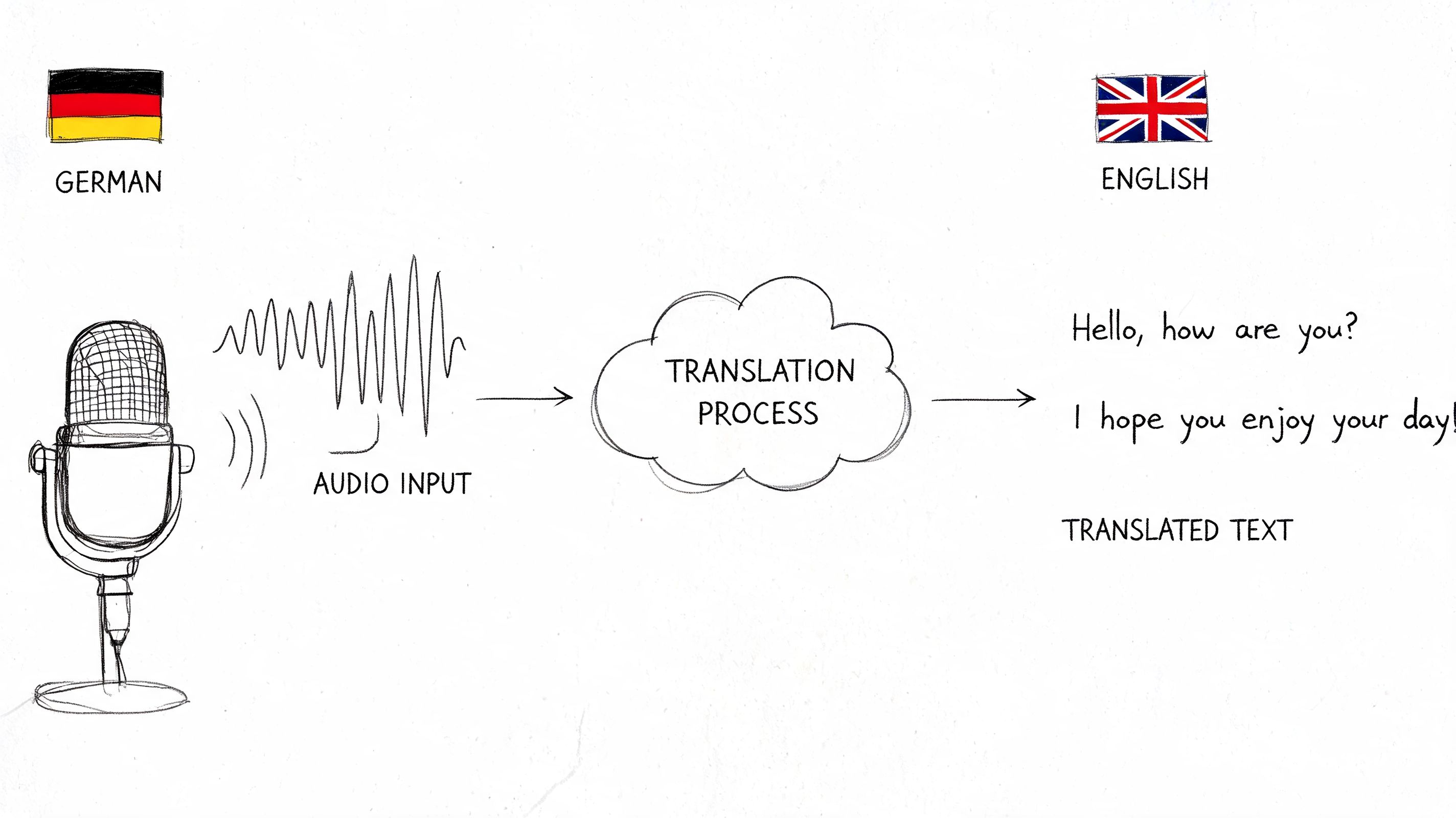

The very definition of an information retrieval system is evolving. It's no longer just about "finding documents with these words." The new goal is far more ambitious: "understand what I truly mean and give me the answer I need." The engine behind this shift is Large Language Models (LLMs), the same technology powering the generative AI tools making headlines. They enable a much more intuitive way to search, where you can ask complex questions in plain language and get back a direct, synthesized answer, not just a list of links.

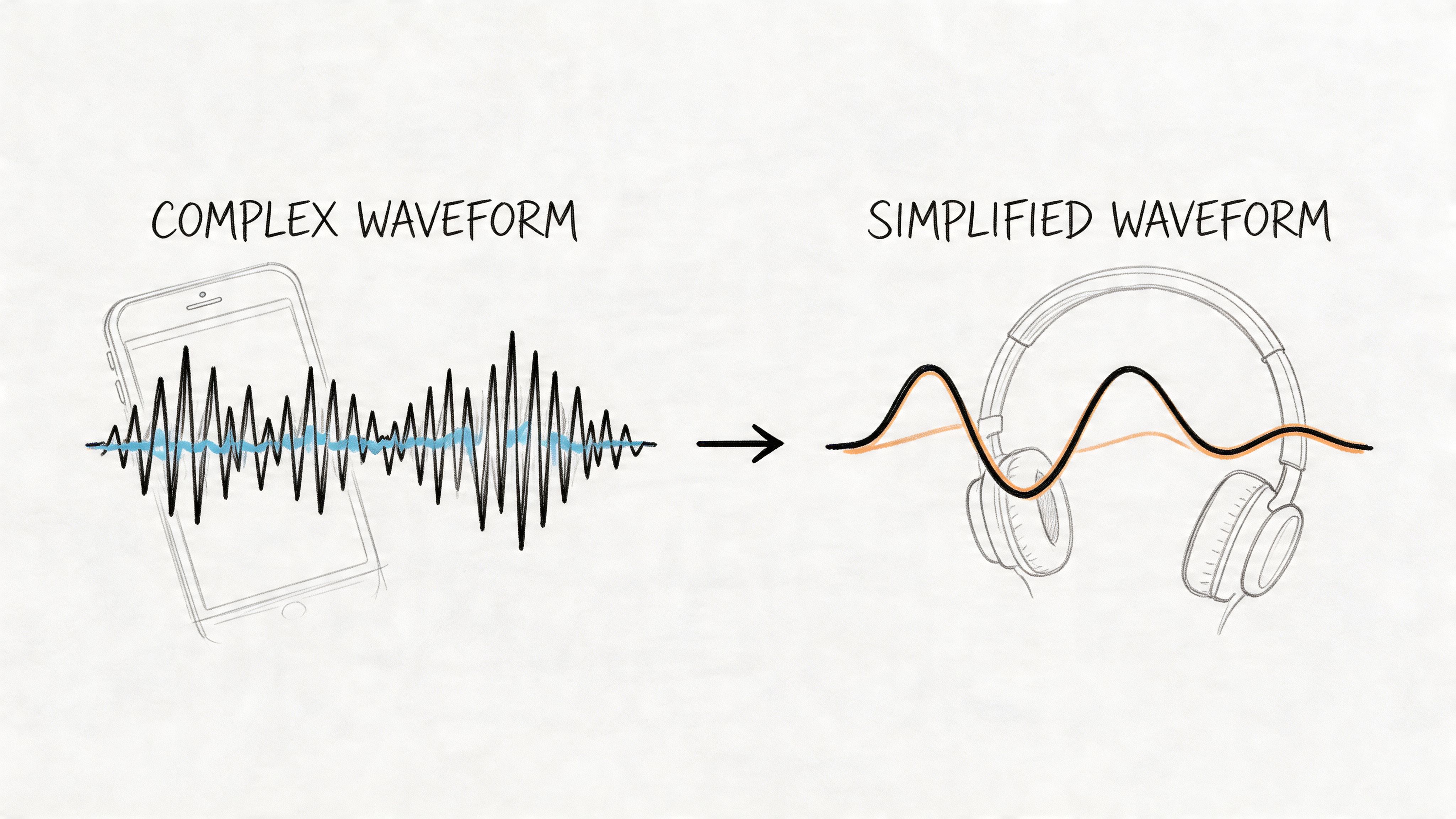

The Rise of Semantic and Multimodal Search

The next generation of IR is all about understanding meaning. Semantic search is quickly becoming the new standard. Instead of just matching keywords, the system grasps the concepts behind your words. It knows that a search for "managing team finances" is closely related to documents about "project budgeting," even if the exact phrases don't appear. This conceptual understanding is what makes a truly conversational experience with your data possible.

But the future isn't just about text. It's also multimodal. Think about snapping a picture of a plant to find out its species or humming a tune to identify a song. Multimodal search lets IR systems work with different kinds of inputs—images, audio, and video—to find relevant information in any format. For a tool like HypeScribe, this could mean searching a meeting archive with a voice command or even a screenshot from a presentation slide.

The ultimate goal is to make finding information as natural as talking to an expert. The system will understand your intent, no matter how you ask, and provide a direct, reliable answer drawn from its vast knowledge base.

To make this a reality, these advanced IR models need a constant supply of high-quality data. Techniques like scraping data for AI will be instrumental in collecting the diverse information needed to train the next generation of intelligent search systems.

Hyper-Personalization and Emerging Challenges

As IR systems get smarter, they're also getting more personal. Future systems will learn from your past searches and interactions to tailor results just for you. The system will know your role, your current projects, and your information-seeking habits, dynamically re-ranking results to surface what's most relevant to you right now. This is a fundamental piece of building a genuine conversation intelligence platform.

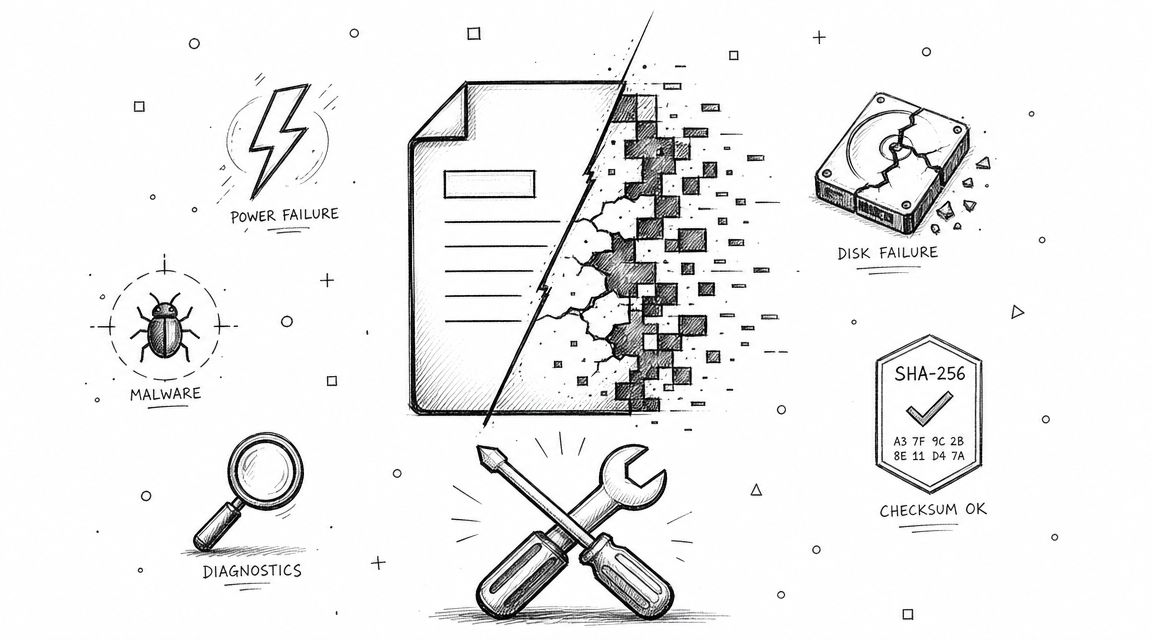

Of course, this exciting future brings its own set of hurdles. As AI gets better at both creating and ranking content, the risk of spreading misinformation also grows, including "hallucinations," where a generative model states something fluent but unsupported by its sources. Ensuring the accuracy and fairness of search results is a critical challenge. Developers must build in strong safeguards to fight bias and prioritize trustworthy, authoritative sources.

With the sheer volume of digital information growing every second, the need for smarter, more intuitive retrieval systems has never been greater. The line between asking a question and getting an answer is dissolving, and AI is leading the charge.

Frequently Asked Questions

Even after covering the basics, a few practical questions always seem to come up. Let's dig into some of the most common ones.

What Is the Difference Between an Information Retrieval System and a Database?

Think of a database as a meticulously organized vault. It’s perfect for structured data—things like customer records, inventory numbers, or bank transactions. To get something out, you need the exact key, like a specific customer ID. The system then returns that one, perfect match.

An information retrieval system, on the other hand, is more like a massive library designed for the beautiful mess of unstructured data—all those text documents, emails, and meeting transcripts. You don't ask for "Book #801.3." You ask a librarian for "books about ancient Roman engineering." The goal isn't an exact match, but a set of highly relevant results.

How Do Information Retrieval Systems Relate to SEO?

Search Engine Optimization (SEO) is the practice of getting your content to show up at the top of a search engine's results. And what is a search engine like Google? It’s simply the world's largest, most sophisticated information retrieval system.

In essence, SEO is the work you do to convince an IR system that your content is the most relevant and authoritative answer for a person's query. This involves signaling relevance through keywords, demonstrating authority with quality links, and meeting user intent—all core principles of information retrieval.

Can I Build a Simple Information Retrieval System?

You absolutely can. While building the next Google is out of the question for most, creating a powerful search feature for your own app, website, or internal document collection is surprisingly achievable.

You don't have to start from zero. Modern open-source tools give you all the core components you need. The most common choices include:

- Apache Lucene: A powerful and highly regarded text search library written in Java. It’s the engine under the hood of many other search tools.

- Elasticsearch & OpenSearch: These are full-blown search engines built on top of Lucene. They provide user-friendly APIs and everything you need to index, query, and rank data at scale.

Using these frameworks, a development team can implement a robust IR system without reinventing the wheel.
