A Complete Guide to Managing Research Projects in 2026

You're probably reading this because your research project already feels heavier than the proposal made it sound. Notes sit in three folders and two cloud drives. Interview decisions live inside meeting recordings nobody has time to revisit. One collaborator thinks the study includes an extra subgroup. Another assumes that change was never approved. The work is moving, but the project isn't fully under control.

That's the main challenge in managing research projects. The science may be sound, yet the operation around it can still break down. In practice, research projects fail less often from lack of effort than from fuzzy scope, weak documentation, handoff errors, and delayed decisions.

The good news is that research management is teachable. It isn't a personality trait. It's a set of habits, constraints, and systems that help a team turn a good idea into a finished study without losing the thread halfway through. What follows is the playbook I trust when a project has to survive remote collaboration, shifting inputs, ethics requirements, and the usual mess of real work.

Lay the Foundation Your Project Needs to Succeed

Most project chaos starts long before data collection. It starts when a team says yes to a broad idea and mistakes that for a plan. A vague project can look exciting in the kickoff phase because everyone can project their own priorities onto it. A few weeks later, that same vagueness turns into rework.

A strong foundation does three things. It narrows the problem, defines what counts as evidence, and makes the finish line visible. If your team can't explain those three points in plain language, the project is still underdesigned.

Start with scope before enthusiasm

Researchers often begin with a meaningful topic, only for the project to gradually absorb every adjacent question. That's how a focused study becomes a bloated one.

Use a short scope statement that answers these questions:

- What exact problem are we studying?

- What is inside the project?

- What is outside the project?

- Who needs the findings?

- What form will the final output take?

The last two matter more than many teams admit. A study intended for a journal article is managed differently from one meant to inform a policy brief, product decision, or community intervention.

Practical rule: If a proposed activity doesn't help answer the core research question or produce the agreed output, it belongs on a parking lot list, not in the active project.

Write research questions that can actually be managed

A research question isn't just an intellectual prompt. It's a management tool. It determines who you need, what data you collect, how much time analysis will take, and how hard ethics review will be.

Good questions are answerable within the project's actual constraints. Weak ones sound important but require unlimited access, unlimited time, or a method your team can't realistically execute.

A useful test is to ask whether the question implies a clear dataset and a clear decision path. If it doesn't, refine it.

Here's a simple planning check:

| Planning element | Strong version | Weak version |
|---|---|---|
| Scope | Specific population, setting, timeframe | Broad topic with no boundary |
| Question | Can be answered with available data and method | Requires undefined evidence |
| Objective | Observable outcome with a deadline | Aspirational intent |

Set objectives that define done

Teams need SMART objectives (specific, measurable, achievable, relevant, time-bound), but not as a slogan. Their value lies in operational clarity. An objective should tell the team what must be completed, by whom, and by when.

That includes intermediate deliverables. Don't only define the final report. Define the moments that prove the project is progressing: protocol approval, pilot completion, recruitment launch, coding framework freeze, first analysis memo, and final synthesis.

Tooling is moving in the same direction as teams look for alternatives to improvised structure. The AI-in-project-management market is projected to reach $7.4 billion by 2029, reflecting demand for tools that address core pain points in planning, coordination, and communication, including in research workflows, according to Chanty's project management statistics roundup.

For day-to-day setup, it helps to keep one canonical project brief and one note system. If your research notes are already fragmented, this guide on how to organize research notes is a practical place to tighten the workflow before the project grows further.

Define your nonnegotiables early

Before anyone starts “just collecting a bit of data,” lock these down:

- Primary question: The one question the project must answer.

- Core deliverable: Article, report, thesis chapter, internal recommendation, or presentation.

- Decision owner: The person who resolves ambiguity when interpretation differs.

- Change rule: How new requests enter the project, and who approves them.

Without those guardrails, every meeting becomes a renegotiation.

Assemble Your Team and Select Your Methods

Research projects go sideways when role design and method design happen separately. One team builds around available people. Another picks an elegant method and assumes the team will adapt. In practice, those choices have to be made together.

In multi-site work, this matters even more. The RN4CAST study, which involved 15 countries, found that stakeholder agency and cross-boundary collaboration were central to success, and that pre-launch alignment workshops plus centralized communication platforms boosted partner engagement by 60% and mitigated 70% of scope creep pitfalls, according to the RN4CAST research article.

Build the team around responsibilities, not titles

A common mistake is assuming job titles describe project work. They don't. “Co-investigator,” “research assistant,” or “analyst” tells you status. It doesn't tell you who owns recruitment, who decides when a codebook changes, or who checks transcription quality.

Use a responsibility map with five practical lanes:

- Scientific lead handles research integrity, protocol decisions, and final interpretation.

- Operations lead tracks milestones, dependencies, meeting outcomes, and follow-ups.

- Data lead owns file structure, naming rules, version control, and transfer procedures.

- Field or participant lead manages recruitment, scheduling, and participant communication.

- Analysis lead defines how raw material becomes findings.

In smaller teams, one person may hold several lanes. That's fine. What matters is visibility.

Teams don't need more meetings first. They need fewer assumptions.

Match method to question and constraints

Methodology should answer a practical question: what approach gives you credible findings within the project's real limits?

A simple decision frame helps:

| If your question asks | A stronger fit may be | Main trade-off |
|---|---|---|
| How people experience something | Qualitative interviews, focus groups, observation | Rich insight, slower analysis |
| How often or how much | Survey, structured dataset, quantitative design | Cleaner comparison, less nuance |
| Both pattern and meaning | Mixed methods | Stronger triangulation, heavier workload |

Method choice also shapes staffing. A qualitative project needs people who can interview, code, memo, and interpret. A quantitative project needs disciplined dataset handling and analysis competence. Mixed methods need both, plus stronger integration at synthesis.

If your project relies on interviews, focus groups, or open-ended responses, this walkthrough on how to analyze qualitative data is useful because it ties method choice directly to the practicalities of coding and interpretation.

Don't ignore the physical workflow

Remote and hybrid work can make teams forget that research still happens in physical environments. Sample handling, bench work, equipment layout, and storage all affect timing and error risk. For contract and collaborative lab environments, practical infrastructure choices matter. Teams planning shared wet-lab operations may find guidance in outfitting contract research labs with casework, especially when facility design affects handoffs, contamination control, or workflow efficiency.

Method feasibility isn't only about epistemology. It's also about space, equipment, staffing patterns, and where bottlenecks will appear.

Use a kickoff that resolves ambiguity

A proper kickoff should produce decisions, not just goodwill. By the end, every contributor should know:

- what they own,

- where project records live,

- how decisions are documented,

- what requires escalation,

- and what standards define acceptable work.

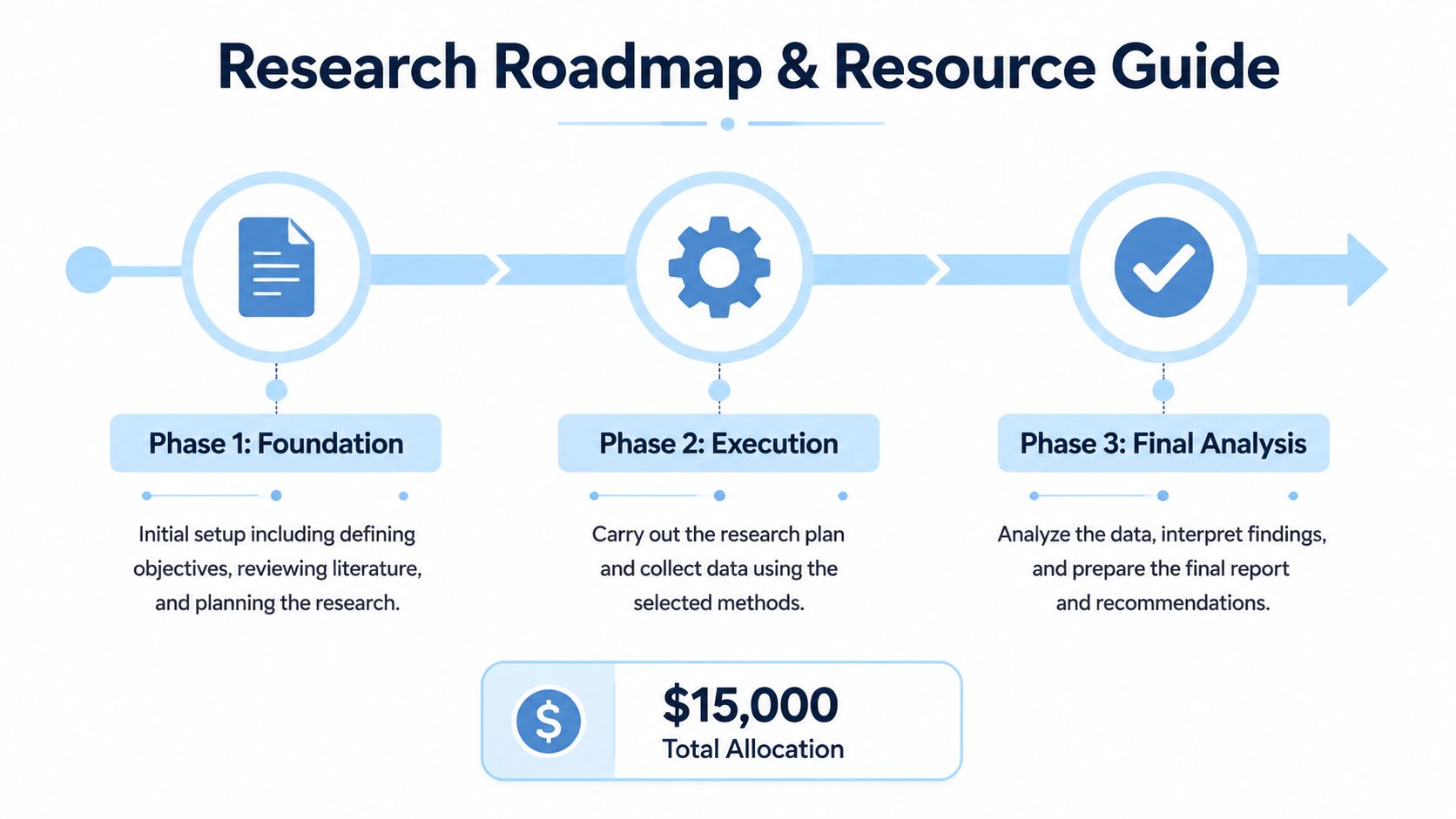

A quick visual explainer can help align mixed teams before the work deepens.

When teams skip this step, conflict shows up later as “method disagreement” when the underlying problem is role confusion.

Build Your Guardrails for Ethics and Data Management

Ethics and data management are often treated like admin work that delays the “real” project. That's backwards. They are the protective structure that keeps the project usable, defensible, and publishable.

A weak ethics process doesn't only create compliance risk. It also damages timelines because teams end up revising instruments, rewriting consent language, or reconstructing data handling decisions after collection has already started. That's expensive in time and credibility.

Write a data management plan that people will actually follow

A good data management plan isn't long. It's operational. It tells the team what happens to data from first capture to final archive.

At minimum, the plan should define:

- Collection rules: what formats you'll collect, who is allowed to collect them, and how files are named

- Storage rules: where master files live, who can access them, and which copies are working copies

- Processing rules: how recordings become transcripts, how cleaning is documented, and how versions are tracked

- Sharing rules: what can be shared internally, externally, and with participants or partners

- Retention and deletion rules: what gets preserved, what gets removed, and when
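To make the collection and storage rules checkable rather than aspirational, some teams encode their file-naming convention as a small validator. The sketch below is a minimal Python example under stated assumptions: the pattern `PROJECT_SITE_PARTICIPANT_DATATYPE_YYYYMMDD_vNN.ext`, the list of data types, and the function name `check_filename` are all hypothetical, so adapt them to whatever your plan actually specifies. It uses pseudonymous participant IDs rather than names, which keeps identifiers out of file names.

```python
import re
from datetime import datetime

# Hypothetical naming convention (an assumption, not a standard):
# PROJECT_SITE_PARTICIPANT_DATATYPE_YYYYMMDD_vNN.ext
# e.g. NURSE_S02_P014_audio_20260312_v01.wav
NAME_PATTERN = re.compile(
    r"^(?P<project>[A-Z0-9]+)_"
    r"(?P<site>S\d{2})_"
    r"(?P<participant>P\d{3})_"  # pseudonymous ID, never a real name
    r"(?P<datatype>audio|transcript|notes|survey)_"
    r"(?P<date>\d{8})_"
    r"v(?P<version>\d{2})"
    r"\.(?P<ext>[a-z0-9]+)$"
)


def check_filename(name: str) -> list[str]:
    """Return a list of problems with a file name; an empty list means it passes."""
    match = NAME_PATTERN.match(name)
    if match is None:
        return ["does not match PROJECT_SITE_PARTICIPANT_DATATYPE_YYYYMMDD_vNN.ext"]
    problems = []
    # The regex only checks for eight digits; strptime checks it is a real date.
    try:
        datetime.strptime(match["date"], "%Y%m%d")
    except ValueError:
        problems.append(f"invalid date: {match['date']}")
    if match["version"] == "00":
        problems.append("versions start at v01")
    return problems


if __name__ == "__main__":
    for candidate in [
        "NURSE_S02_P014_audio_20260312_v01.wav",
        "interview with Maria final FINAL.m4a",
        "NURSE_S02_P014_transcript_20261340_v02.docx",
    ]:
        print(candidate, "->", check_filename(candidate) or "OK")
```

Returning a list of problems, rather than a single true/false, tells the data lead exactly what to fix. Running a check like this over the shared folder before each review is one way to catch stray "final FINAL" files early.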

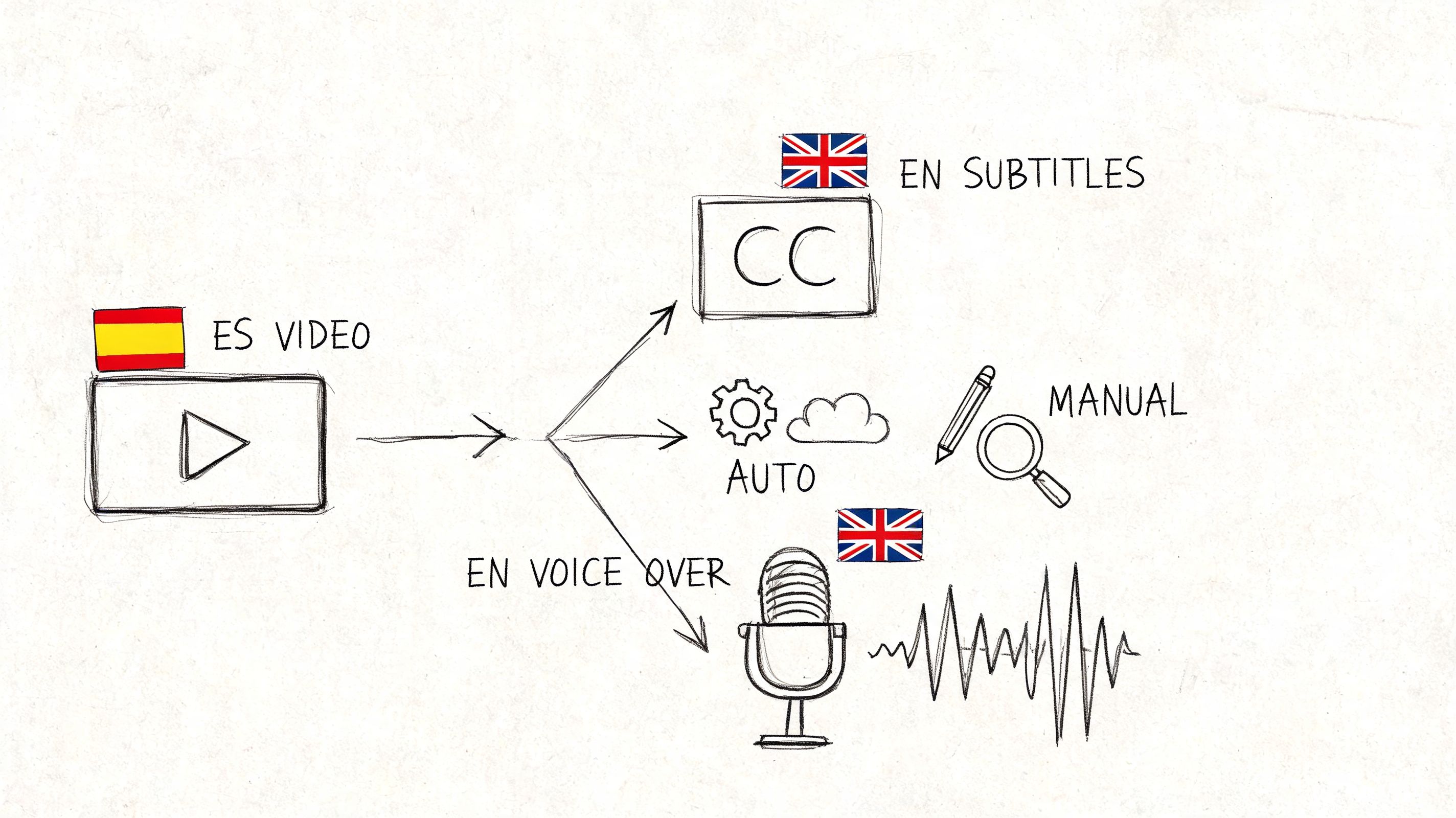

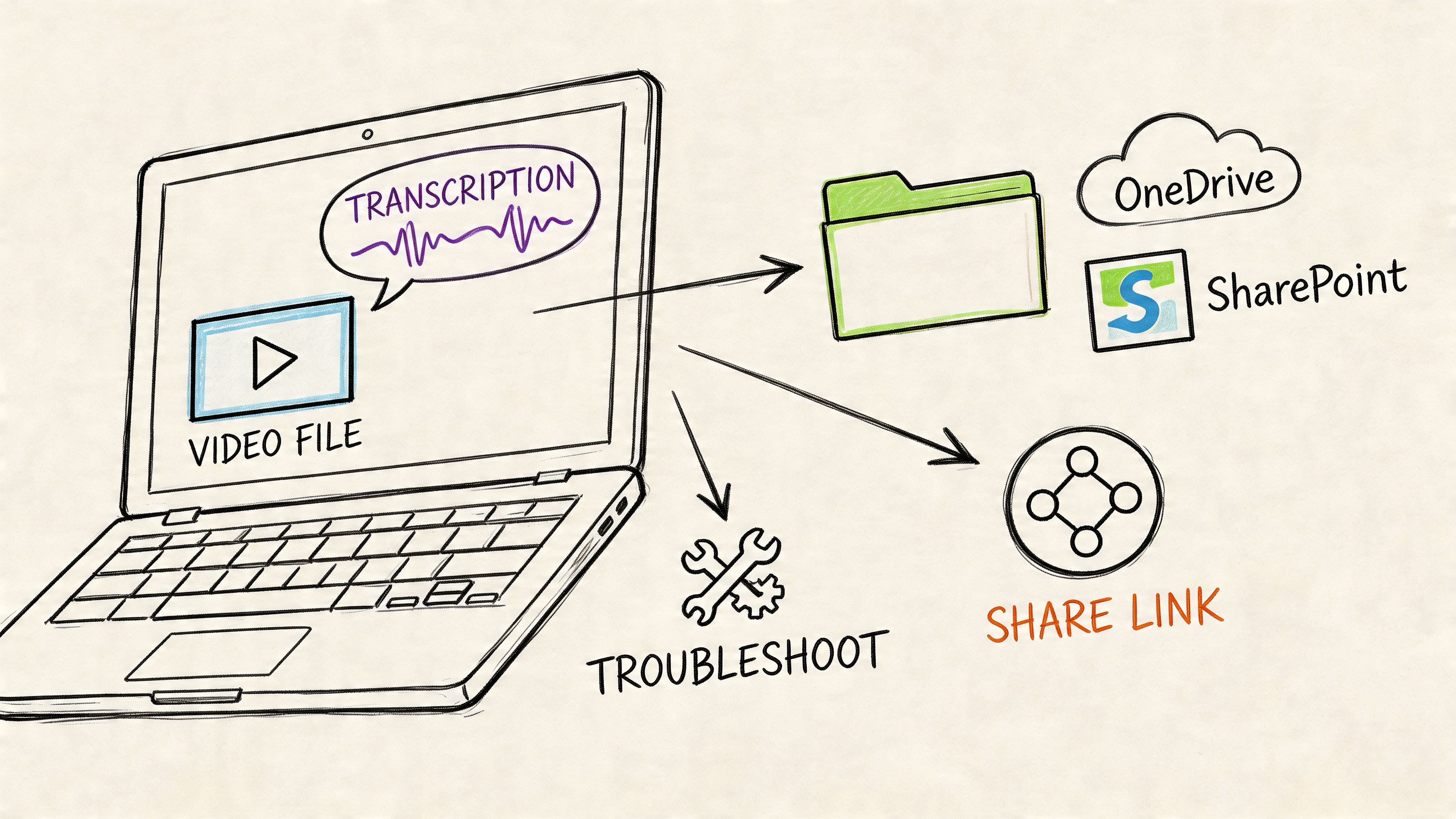

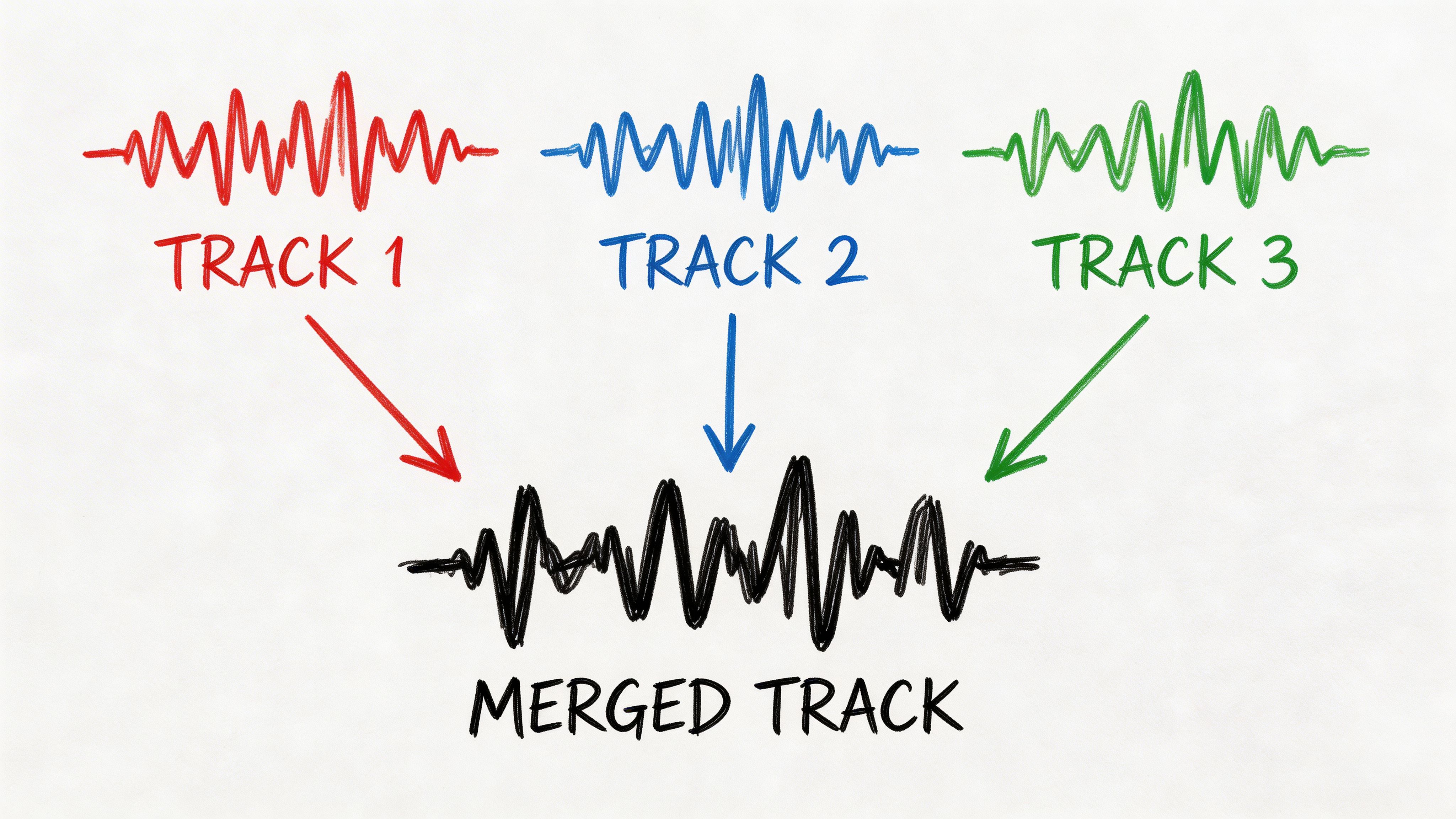

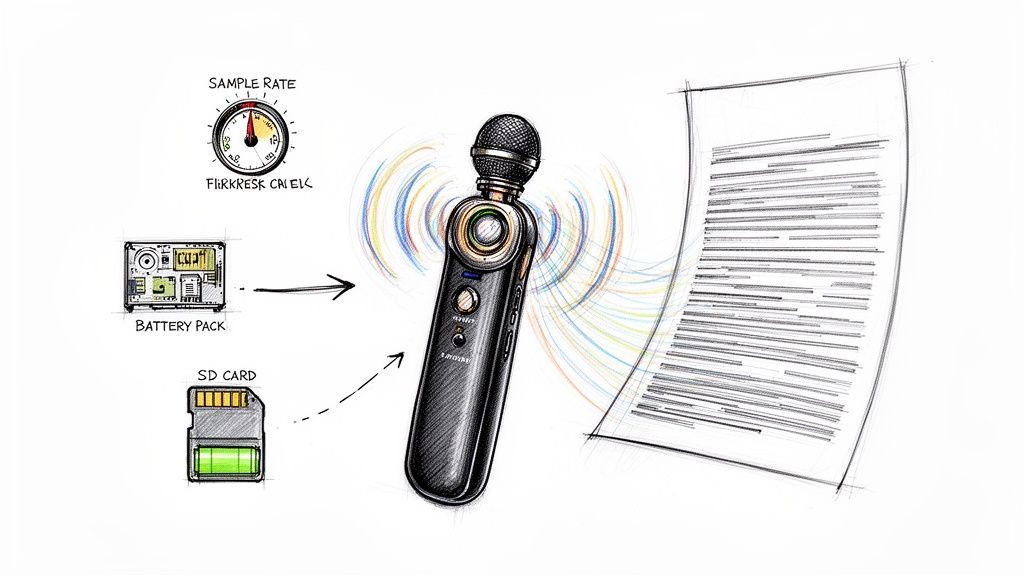

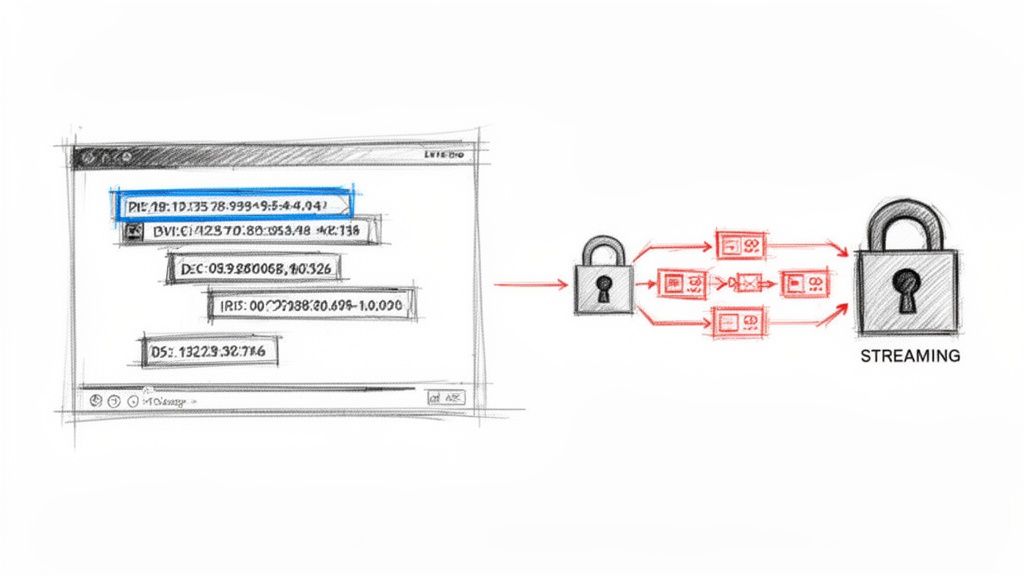

Sensitive data deserves extra discipline. Interview recordings, voice notes, and meeting captures often contain identifiers that teams underestimate. Treat raw audio as governed material, not casual reference.

Ethics planning is also stakeholder planning

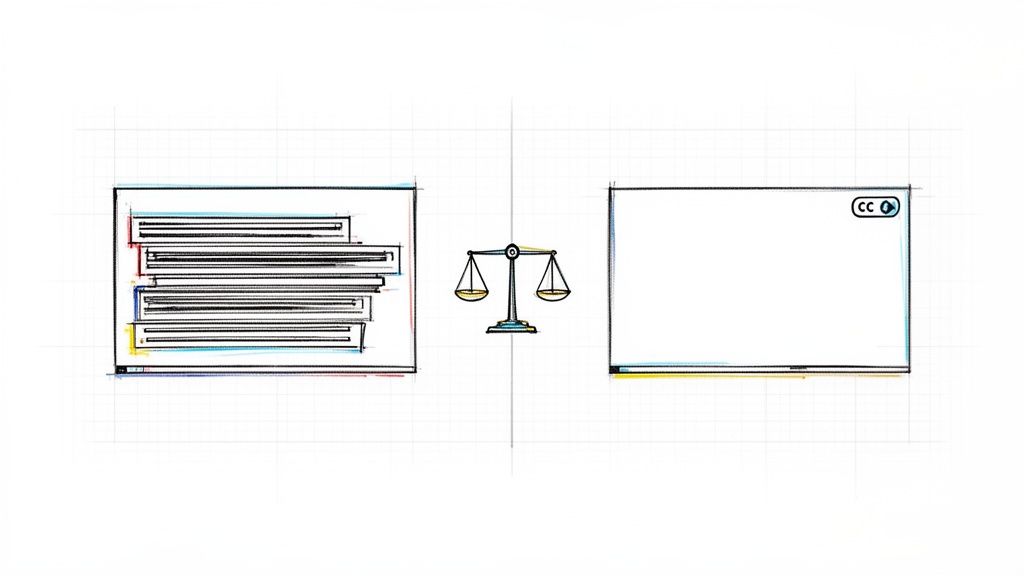

Research teams often struggle to incorporate stakeholder feedback without destabilizing scope. The burden usually falls on someone manually piecing together comments from calls, notes, and memory. That creates weak justification trails and makes it hard to show how community input shaped decisions. The better approach is to capture and document those conversations so there's a transparent audit trail of what was raised, what changed, and what did not, as discussed in this piece on project constraints and stakeholder feedback.

That principle matters most in participatory and community-engaged work. If you invite input, you need a repeatable way to log it, assess it, and respond to it. Otherwise, “engagement” becomes selective memory.

Field note: Ethics problems often start as documentation problems. If the rationale for a choice can't be recovered later, the team will argue about what was approved.

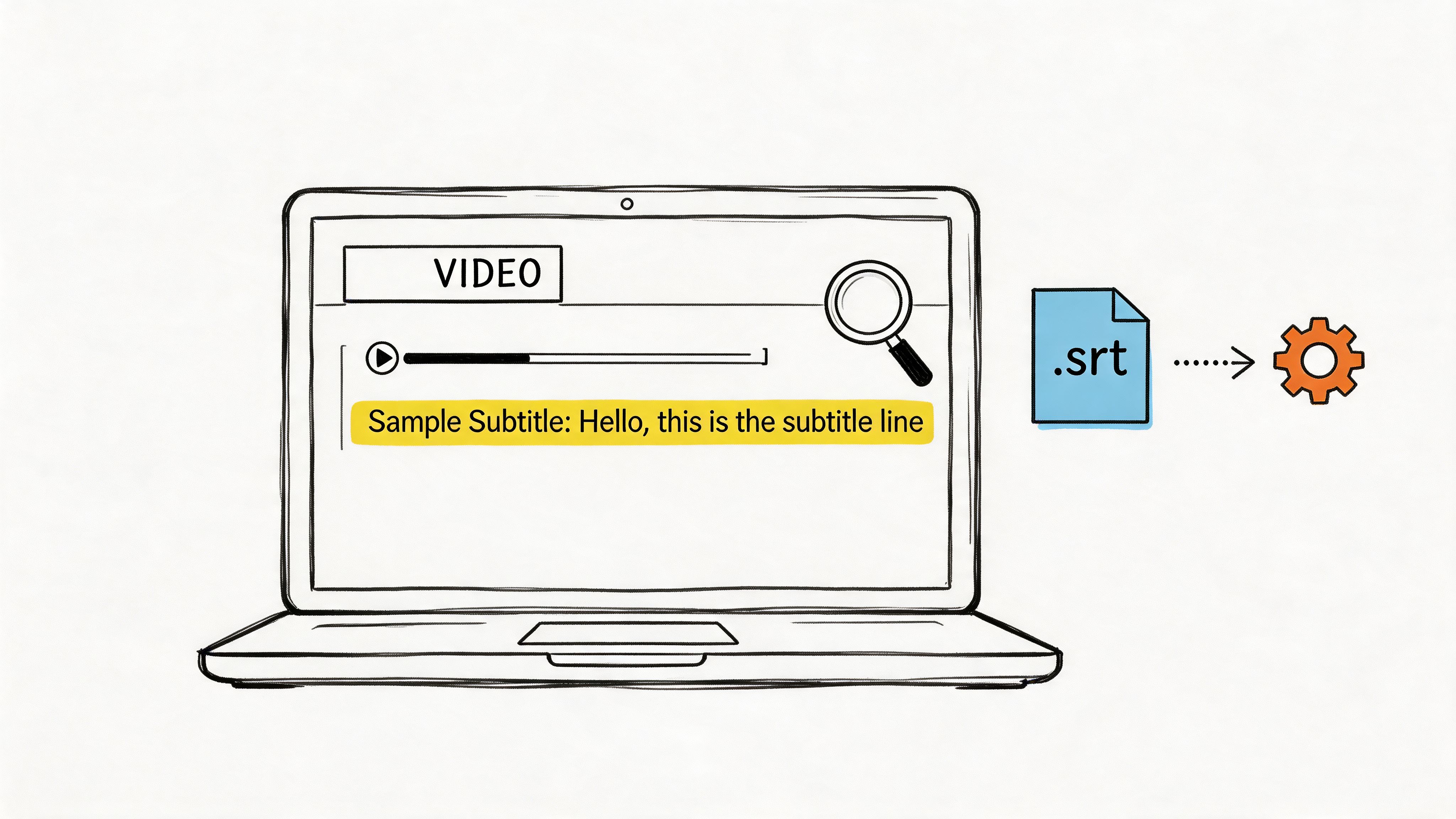

Build transparency into the workflow

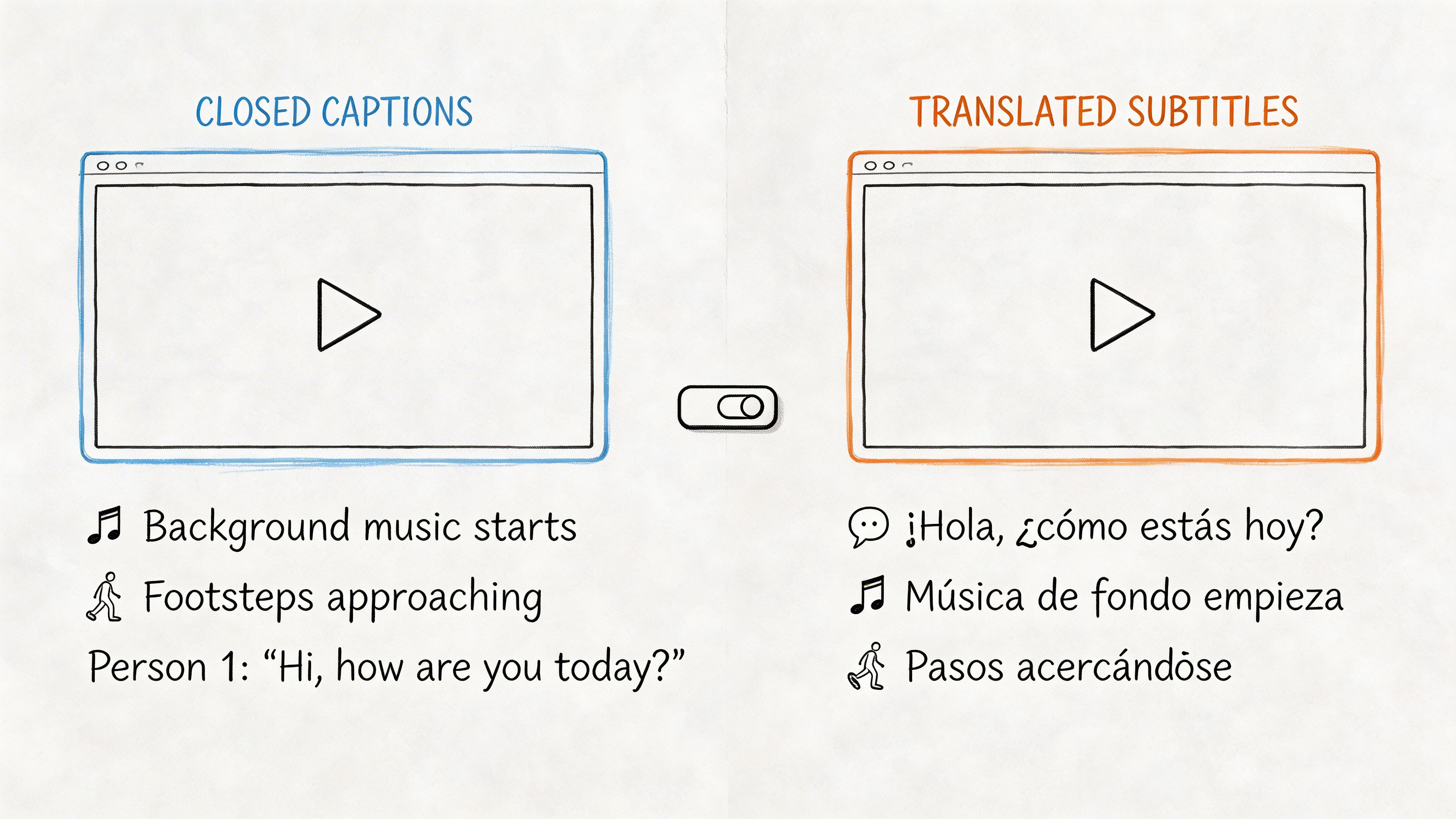

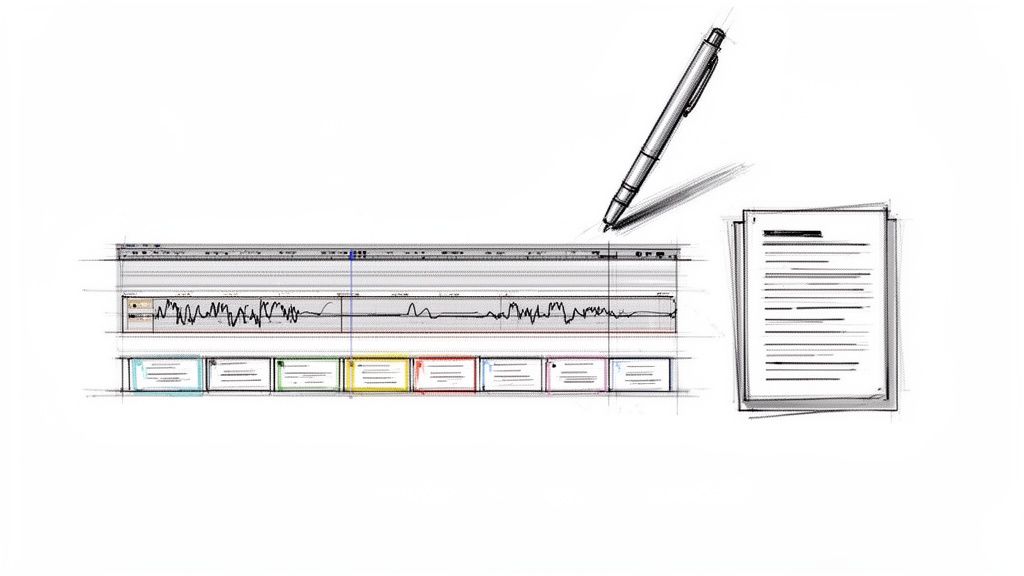

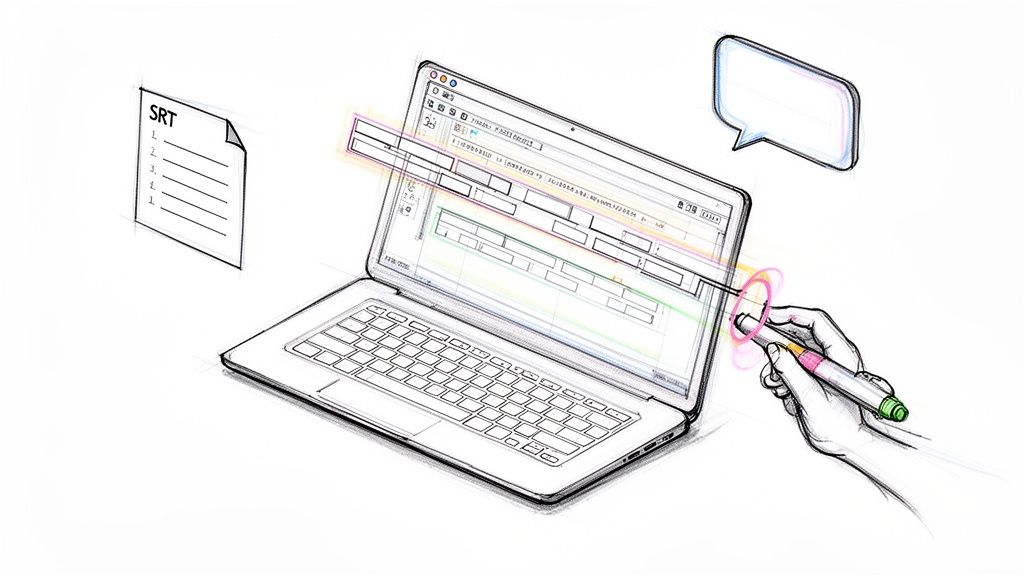

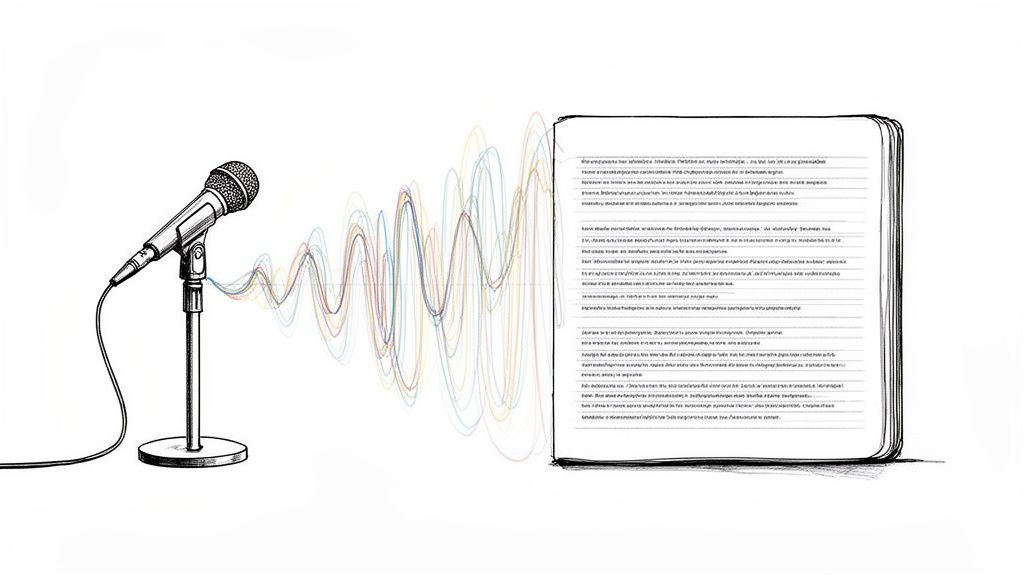

For qualitative and interview-heavy projects, transcription is part of governance, not just convenience. Searchable transcripts make it easier to review what was said, verify consent language, and preserve decision context during analysis. This guide to transcription in qualitative research is useful because it connects transcript quality with rigor, traceability, and analysis readiness.

A practical ethics workflow usually includes:

- Pre-review memo that identifies likely ethical pressure points.

- Consent packet that matches the actual data collection workflow.

- Data map that lists what is captured in each stage.

- Decision log for protocol changes, exceptions, and stakeholder requests.

That log matters more than people think. When a team has to explain why it altered a question guide, included a new subgroup, or changed a data-handling rule, a contemporaneous record saves weeks of reconstruction.
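A decision log does not need special software. As a minimal sketch, assuming a Python-based workflow, the example below models each entry as an immutable record with a date, type, summary, rationale, and approver. It treats the log as append-only, so a reversal becomes a new entry instead of an edit. The class names, fields, and example entries are invented for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Decision:
    """One immutable entry in a hypothetical project decision log."""
    when: date
    kind: str         # e.g. "protocol change", "exception", "stakeholder request"
    summary: str      # what was decided
    rationale: str    # why, recorded at the time of the decision
    approved_by: str  # the decision owner who signed off


@dataclass
class DecisionLog:
    entries: list[Decision] = field(default_factory=list)

    def record(self, decision: Decision) -> None:
        # Append-only: entries are never edited; a reversal is a new entry.
        self.entries.append(decision)

    def search(self, keyword: str) -> list[Decision]:
        """Case-insensitive search across summaries and rationales."""
        k = keyword.lower()
        return [d for d in self.entries
                if k in d.summary.lower() or k in d.rationale.lower()]


# Invented example entries.
log = DecisionLog()
log.record(Decision(date(2026, 3, 4), "protocol change",
                    "Add caregivers as a second subgroup",
                    "Partner board asked for caregiver perspectives; ethics amendment filed",
                    "Scientific lead"))
log.record(Decision(date(2026, 3, 18), "exception",
                    "Accept phone interviews for rural site",
                    "Video access unreliable; consent script read aloud and logged",
                    "Scientific lead"))

for d in log.search("subgroup"):
    print(d.when, d.kind, "-", d.summary)
```

A searchable record like this is what settles the "did we approve that subgroup?" argument without relying on anyone's memory.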

Create a Realistic Project Timeline and Budget

Most research timelines fail for one simple reason. They describe the work as if nothing will interrupt it. But real projects are full of waiting periods, revisions, participant no-shows, procurement delays, and analysis loops that take longer than expected.

A useful timeline doesn't predict a perfect path. It exposes dependencies and gives the team room to absorb friction without panic.

Build the schedule backward from fixed dates

Start with the immovable points: grant deadlines, ethics approval targets, conference submissions, stakeholder reporting dates, contract milestones, or semester boundaries. Then work backward.

Break the project into a small number of phases with visible handoffs.
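Once each phase has an estimated duration, working backward is simple date arithmetic. The sketch below assumes three phases (Foundation, Execution, Final analysis), hypothetical durations in weeks, and a buffer reserved just before the fixed deadline. `schedule_backward` is an illustrative helper, not a standard tool.

```python
from datetime import date, timedelta

# Hypothetical phase durations in weeks, listed in delivery order.
# The durations and the buffer are assumptions; replace them with your own.
PHASES = [
    ("Foundation", 8),
    ("Execution", 16),
    ("Final analysis", 10),
]


def schedule_backward(deadline: date, phases, buffer_weeks: int = 0):
    """Work backward from a fixed deadline; return (phase, start, end) in delivery order.

    Any buffer is reserved right before the deadline.
    """
    end = deadline - timedelta(weeks=buffer_weeks)
    plan = []
    for name, weeks in reversed(phases):
        start = end - timedelta(weeks=weeks)
        plan.append((name, start, end))
        end = start  # the previous phase must finish when this one starts
    return list(reversed(plan))


if __name__ == "__main__":
    # Example: a hypothetical funder report due on 30 November 2026.
    for name, start, end in schedule_backward(date(2026, 11, 30), PHASES, buffer_weeks=4):
        print(f"{name:<15} {start} -> {end}")
```

With a 30 November 2026 deadline and a four-week buffer, this places the Foundation phase from 9 March to 4 May 2026. Holding the buffer at the end, rather than spreading it across phases, is one design choice. Some teams prefer a smaller buffer inside each phase.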

Use phases and milestones, not a giant task swamp

A simple structure is usually enough:

| Phase | What belongs here | Risk to watch |
|---|---|---|
| Foundation | protocol, ethics prep, instruments, staffing, file systems | underestimating setup time |
| Execution | recruitment, fieldwork, collection, cleaning | delays and scope drift |
| Final analysis | coding, synthesis, writing, revision | analysis bottlenecks |

Within each phase, define milestones that signal readiness to move forward. “Start interviews” is not a milestone. “Interview guide approved, consent files finalized, storage location confirmed, pilot completed” is.
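One way to keep a milestone from turning into a vague start date is to treat it as a gate with named conditions. The minimal Python sketch below uses the interview example as its conditions. The `gate_status` helper and the dictionary format are assumptions for illustration.

```python
# A milestone modeled as a readiness gate: it is reached only when every
# named condition is true. Tracking conditions in a dict is an assumption,
# not a required tool.
def gate_status(conditions: dict[str, bool]) -> tuple[bool, list[str]]:
    """Return (ready, missing_conditions) for one milestone gate."""
    missing = [name for name, done in conditions.items() if not done]
    return (not missing, missing)


interviews_ready = {
    "Interview guide approved": True,
    "Consent files finalized": True,
    "Storage location confirmed": False,
    "Pilot completed": True,
}

ready, missing = gate_status(interviews_ready)
print("Ready to start interviews" if ready else f"Blocked by: {', '.join(missing)}")
```

In this example, the gate reports that interviews are blocked by the unconfirmed storage location. That is a more useful status than "interviews start next week."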

Budget for the project you're actually running

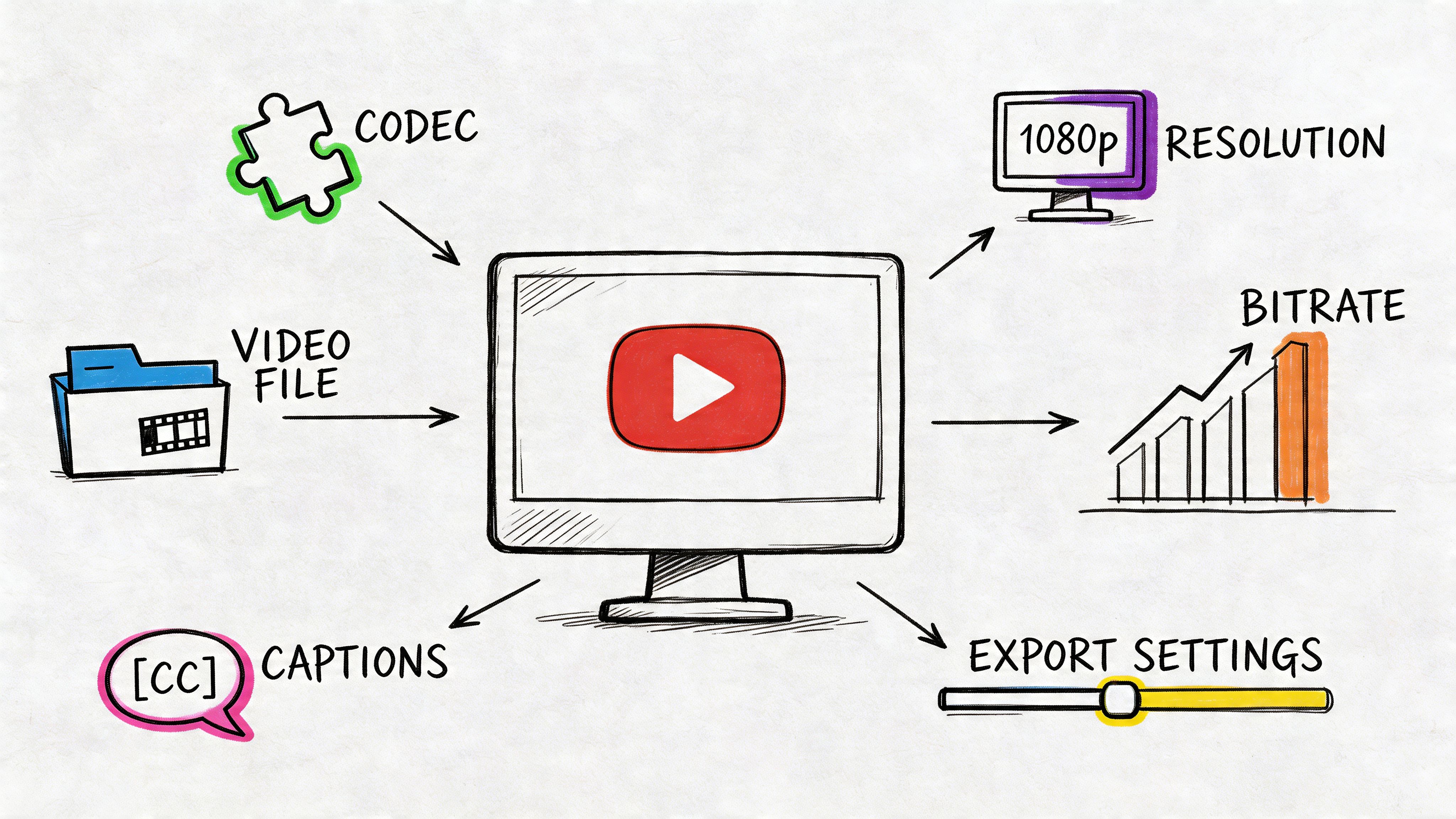

A budget should mirror the workload, not just the proposal categories. If the project depends on interviews, then transcription, review time, participant coordination, storage, and analysis labor belong in the plan. If it depends on remote teamwork, account for the administrative overhead of coordination and documentation.

The most neglected budget line is almost always rework. Teams assume they're budgeting for data collection. In reality, they should also budget for corrections, recontact, recoding, and clarification.

Use short planning cycles when uncertainty is high

Research teams sometimes resist agile practices because they sound like software jargon. That's a mistake. Short planning cycles are useful anywhere uncertainty is high.

According to a Walden University dissertation, agile strategies can increase project success rates from under 35% to over 64%. The same source reports that two-week research sprints with daily stand-ups, supported by continuous backlog refinement, can reduce scope creep (a cause of 37% of project failures) by as much as 50%.

That doesn't mean every research project should run like a software team. It means you should review work in short intervals, surface blockers quickly, and keep a live backlog of tasks, risks, and pending decisions.

The timeline should reflect uncertainty openly. Buffer time isn't a sign of weak planning. It's a sign that somebody has managed research before.

Keep a basic risk register

You don't need enterprise software for this. A one-page register is enough if the team uses it.

Include:

- Risk description

- What would trigger it

- Likelihood and impact in plain language

- Preventive action

- Owner

- Fallback plan

Examples include delayed recruitment, low-quality recordings, instrument revisions after pilot work, collaborator availability, and stalled analysis due to coding disagreement. Once risks are named, they become manageable.
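If the register lives in a spreadsheet, that is usually enough. For teams that prefer plain files or scripts, the sketch below stores the same fields in a Python dataclass and sorts risks by a rough priority. Mapping low, medium, and high to 1, 2, and 3 and multiplying likelihood by impact is an assumption chosen for simplicity, not a validated scoring method. The risks come from the examples above, but their triggers, actions, and owners are invented.

```python
from dataclasses import dataclass

# Plain-language ratings mapped to numbers only for sorting (an assumption).
LEVEL = {"low": 1, "medium": 2, "high": 3}


@dataclass
class Risk:
    description: str
    trigger: str
    likelihood: str  # "low" | "medium" | "high"
    impact: str      # "low" | "medium" | "high"
    prevention: str
    owner: str
    fallback: str

    @property
    def priority(self) -> int:
        # Rough ordering only: likelihood level times impact level.
        return LEVEL[self.likelihood] * LEVEL[self.impact]


register = [
    Risk("Delayed recruitment", "Fewer than half of target enrolled by week 4",
         "medium", "high", "Recruit through two partner sites", "Field lead",
         "Extend fieldwork using the schedule buffer"),
    Risk("Low-quality recordings", "Pilot audio hard to transcribe",
         "low", "medium", "Test microphones during the pilot", "Data lead",
         "Recontact participants for clarification"),
    Risk("Coding disagreement stalls analysis", "Codebook unresolved after two reviews",
         "medium", "medium", "Schedule calibration sessions", "Analysis lead",
         "Scientific lead makes the final call"),
]

for risk in sorted(register, key=lambda r: r.priority, reverse=True):
    print(f"[{risk.priority}] {risk.description} (owner: {risk.owner})")
```

With these example entries, delayed recruitment sorts first (priority 6), followed by coding disagreement (4) and low-quality recordings (2).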

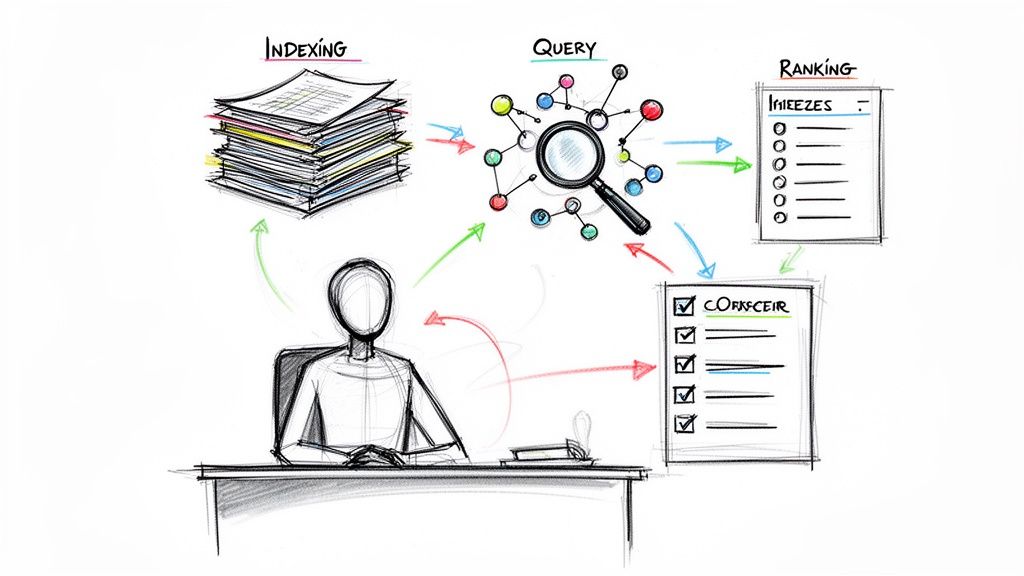

Monitor Progress and Use Smart Tools

Monday starts with a familiar problem in remote research teams. Three people leave a call with different understandings of what was decided, one interviewer saved notes locally, and nobody can say with confidence which analysis tasks are blocked versus merely delayed. At that point, the project does not have a productivity problem. It has a documentation problem.

Good monitoring fixes that. It gives the team a shared view of progress without turning researchers into full-time administrators.

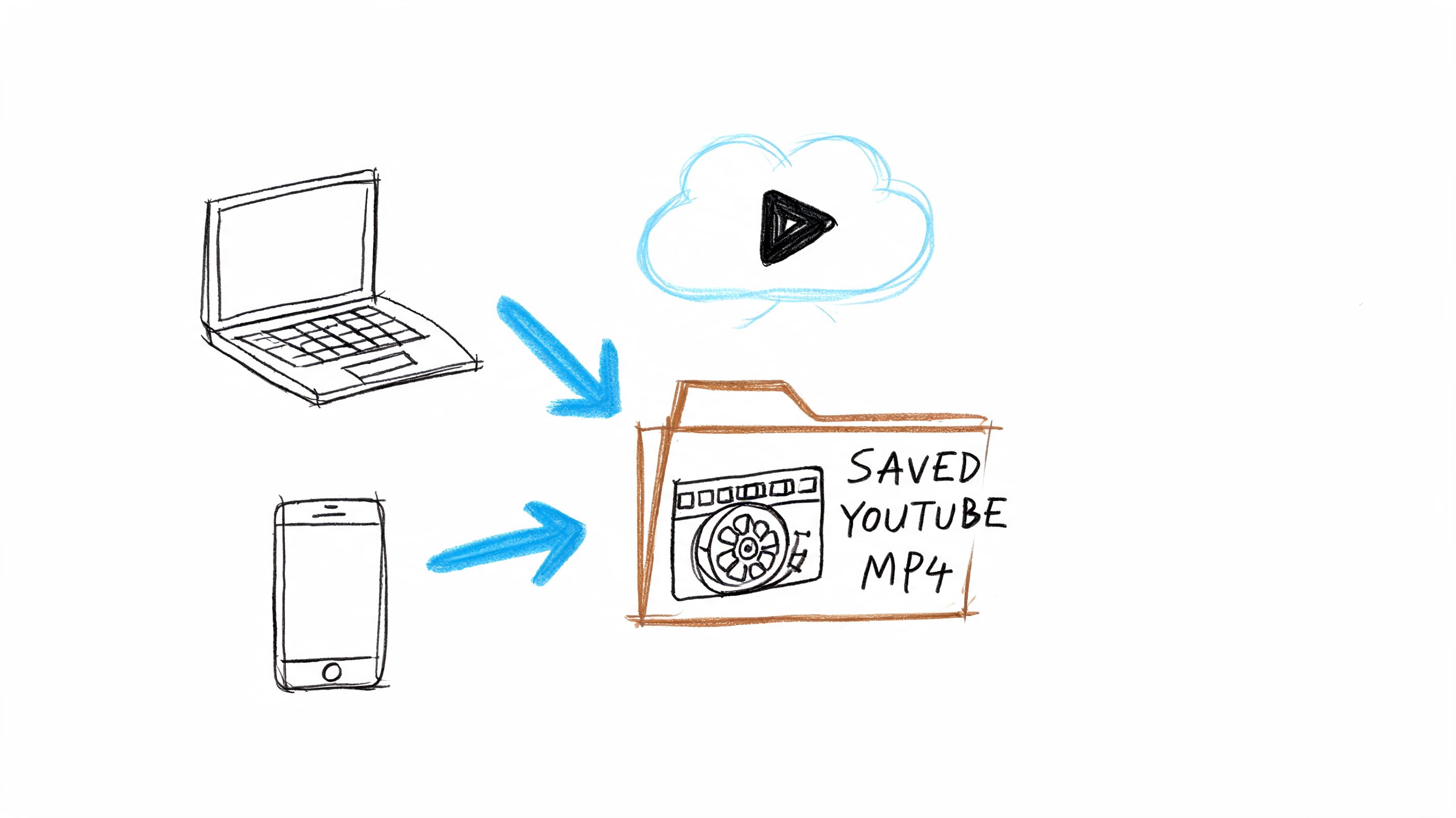

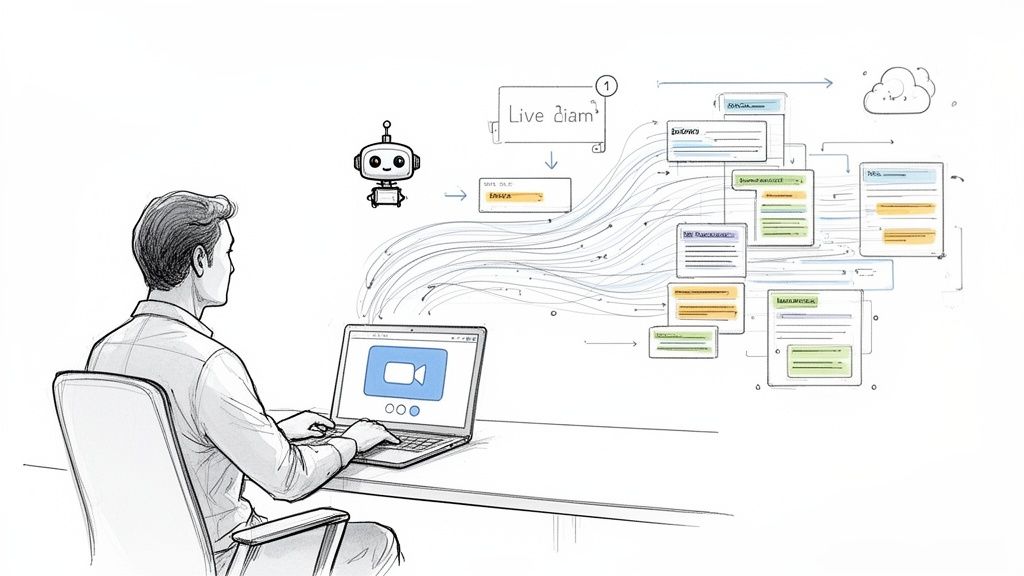

The best systems use artifacts the work already produces. Meeting notes, interview transcripts, coding memos, change logs, and action lists are not background paperwork. They are the operating record of the study, especially when the team is split across locations and time zones.

Track fewer things, more consistently

I prefer a short review rhythm, usually weekly or twice a month. The point is not to collect more updates. The point is to spot drift early, assign ownership, and keep decisions visible.

Each review should cover five areas:

- Milestone status: on track, at risk, or blocked

- Open decisions: what needs an owner and by when

- Action items: what was agreed and what is still outstanding

- Emerging risks: what changed since the last review

- Documentation health: whether records are searchable and current

That discipline pays off. According to Wimi's project management statistics roundup, organizations that invest in established project management practices lose far less money, and strong communication is one of the clearest contributors to project success. In research settings, that usually comes down to a simple question: can the team see the same version of reality?
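The five review areas can also drive the agenda directly, so meetings spend time only on items that need attention. This Python sketch is illustrative. It reuses the "on track", "at risk", and "blocked" labels, but the data layout, the `review_agenda` function, and the example items are hypothetical.

```python
from datetime import date

# Hypothetical snapshot of the items a weekly review looks at.
milestones = {
    "Pilot completed": "on track",
    "Recruitment launch": "at risk",
    "Coding framework freeze": "blocked",
}
open_decisions = [
    {"question": "Keep the caregiver subgroup?", "owner": "Scientific lead",
     "due": date(2026, 4, 10)},
    {"question": "Switch transcript format?", "owner": None, "due": None},
]


def review_agenda(milestones, open_decisions, today):
    """List only the items that need discussion, so the meeting can skip the rest."""
    agenda = [f"Milestone {name!r} is {status}"
              for name, status in milestones.items() if status != "on track"]
    for d in open_decisions:
        if d["owner"] is None:
            agenda.append(f"Decision needs an owner: {d['question']}")
        elif d["due"] is not None and d["due"] < today:
            agenda.append(f"Decision overdue: {d['question']}")
    return agenda


for item in review_agenda(milestones, open_decisions, today=date(2026, 4, 13)):
    print("-", item)
```

Filtering out everything that is on track is the point. It keeps the review focused on drift, ownership, and overdue decisions instead of status recitals.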

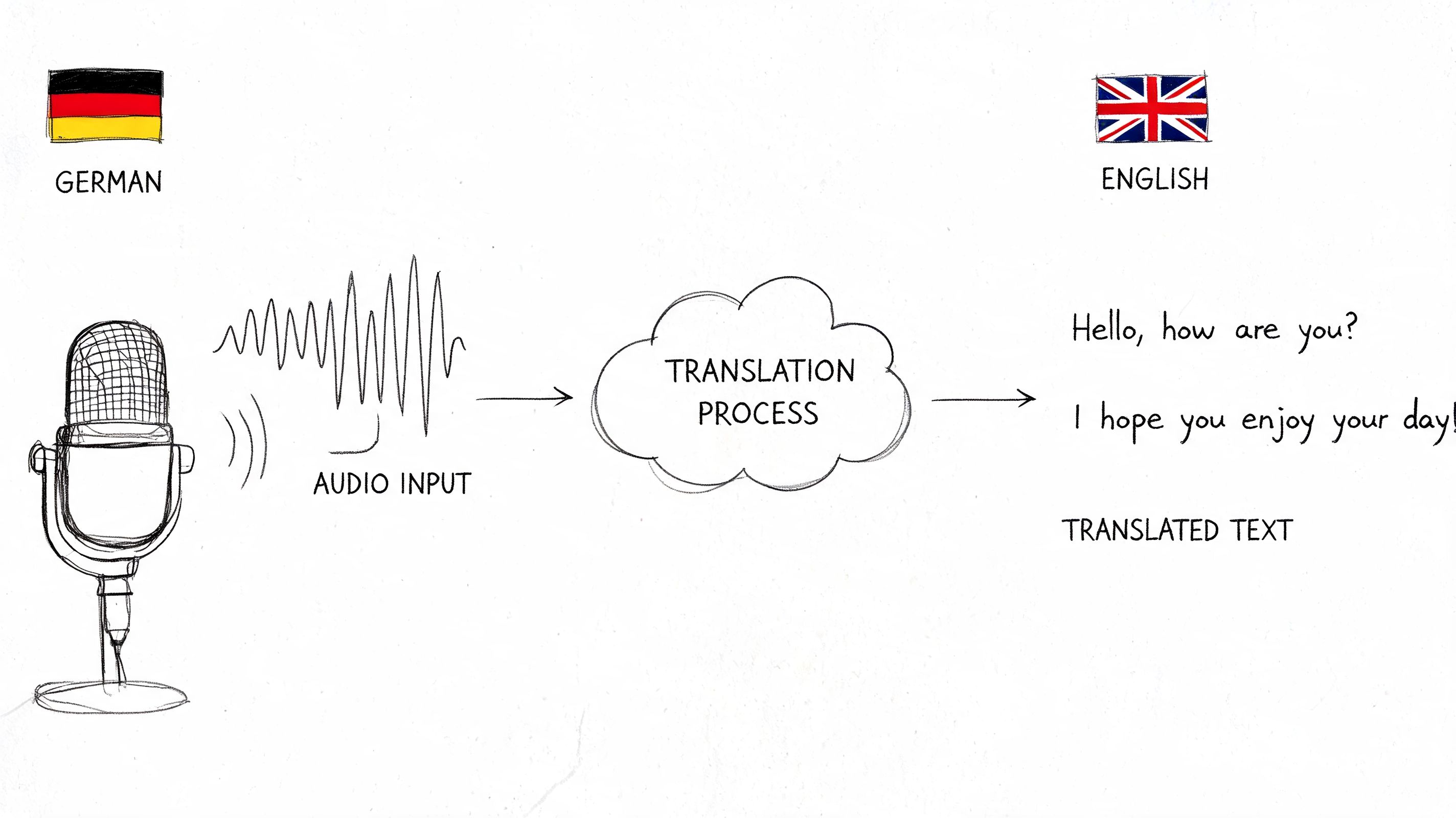

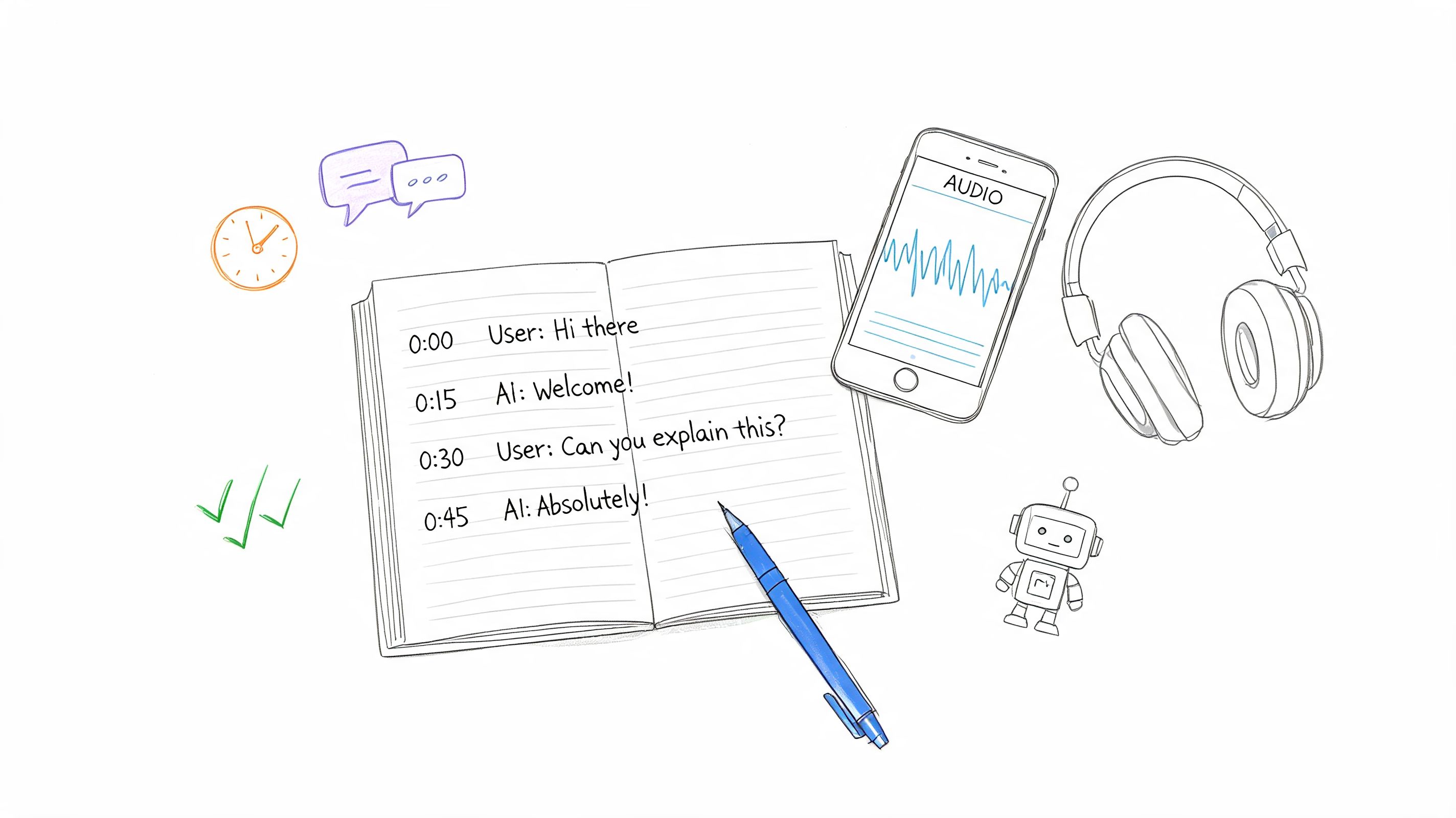

Make meetings produce records the team can use

Research meetings are often decision sessions disguised as conversations. If those decisions stay trapped in somebody's notebook or memory, the same issues get reopened in the next call.

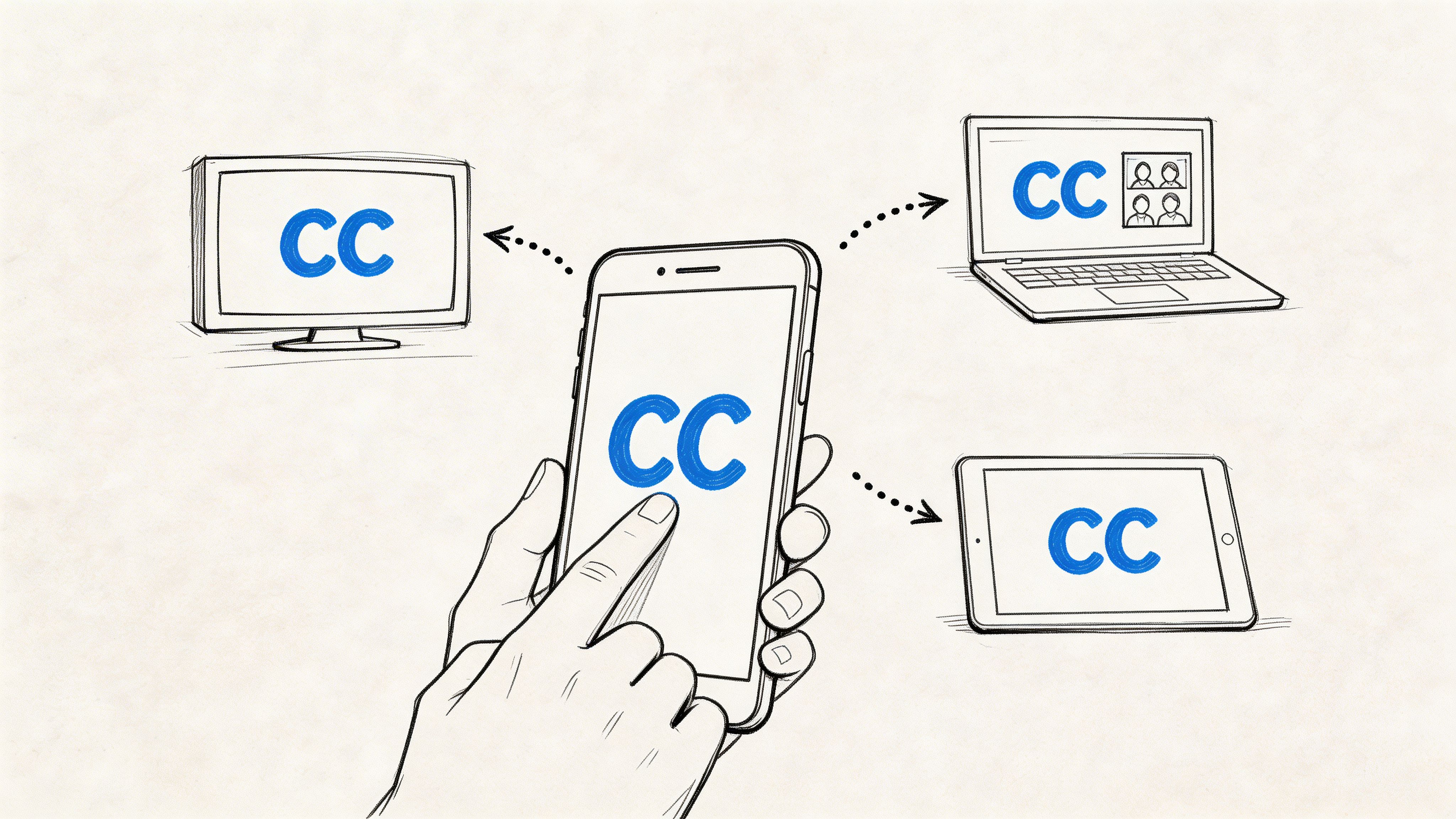

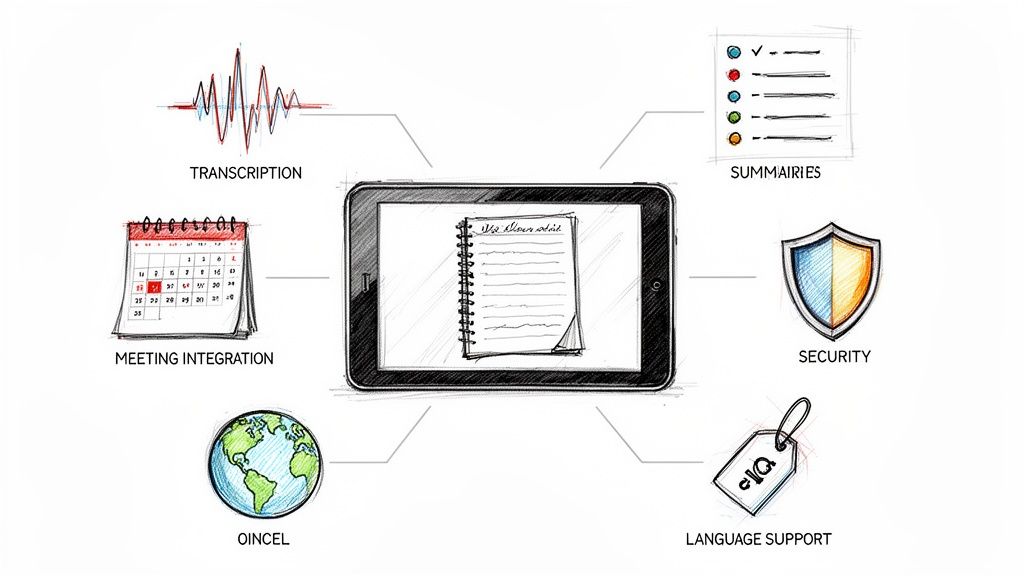

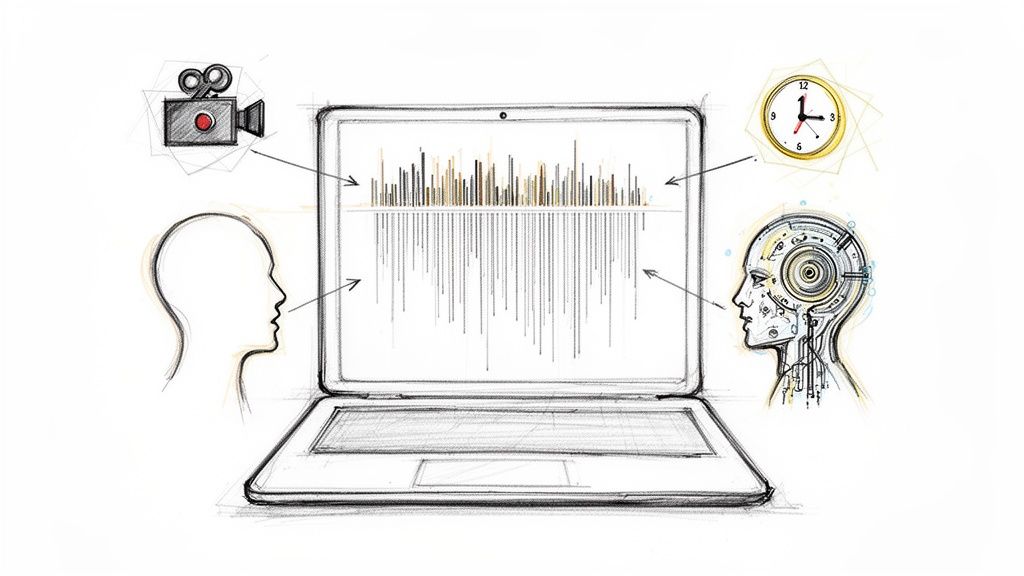

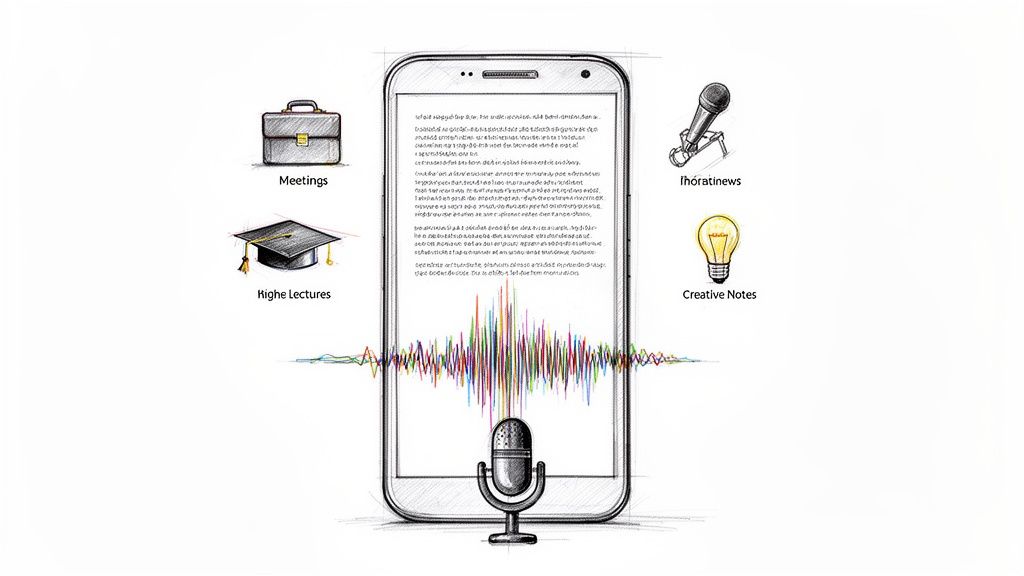

Transcription and automated note tools help solve a problem that older project management advice barely addressed. Hybrid teams need records that can be searched, shared, and checked later without waiting for one person to write up minutes. That matters for weekly check-ins, methods discussions, coding reviews, and stakeholder calls.

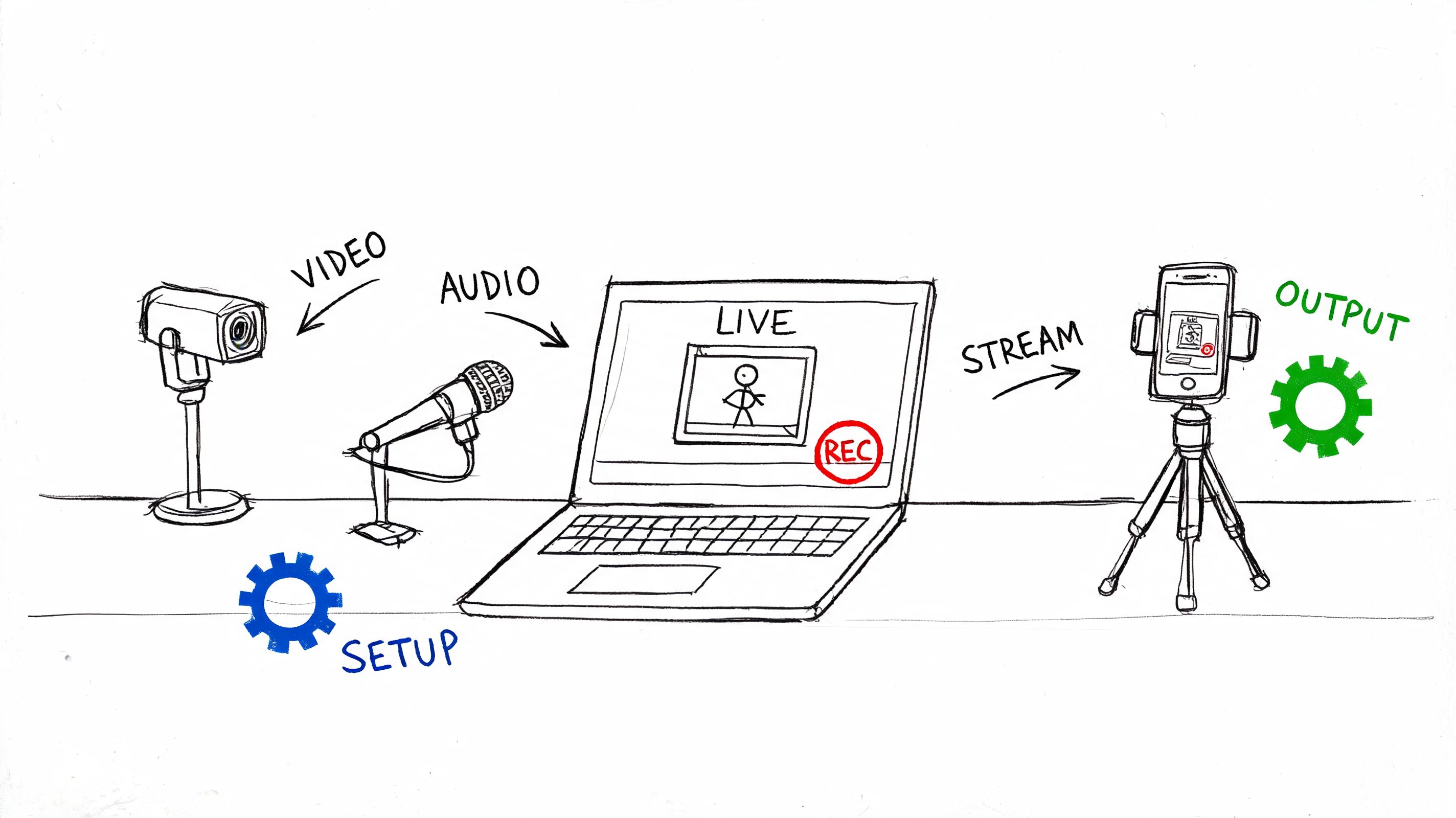

A practical setup looks like this:

| Workflow moment | Useful output | Why it matters |
|---|---|---|
| Weekly check-in | transcript and action list | preserves decisions and owners |
| Interview completion | time-stamped text | speeds review and coding |
| Analysis meeting | searchable summary | captures interpretation shifts |
| Stakeholder call | documented feedback log | supports accountability |

The gain is not convenience alone. It is continuity. In a hybrid project, searchable records reduce repeated debates, shorten handoffs, and make it easier to audit how the team reached a conclusion.

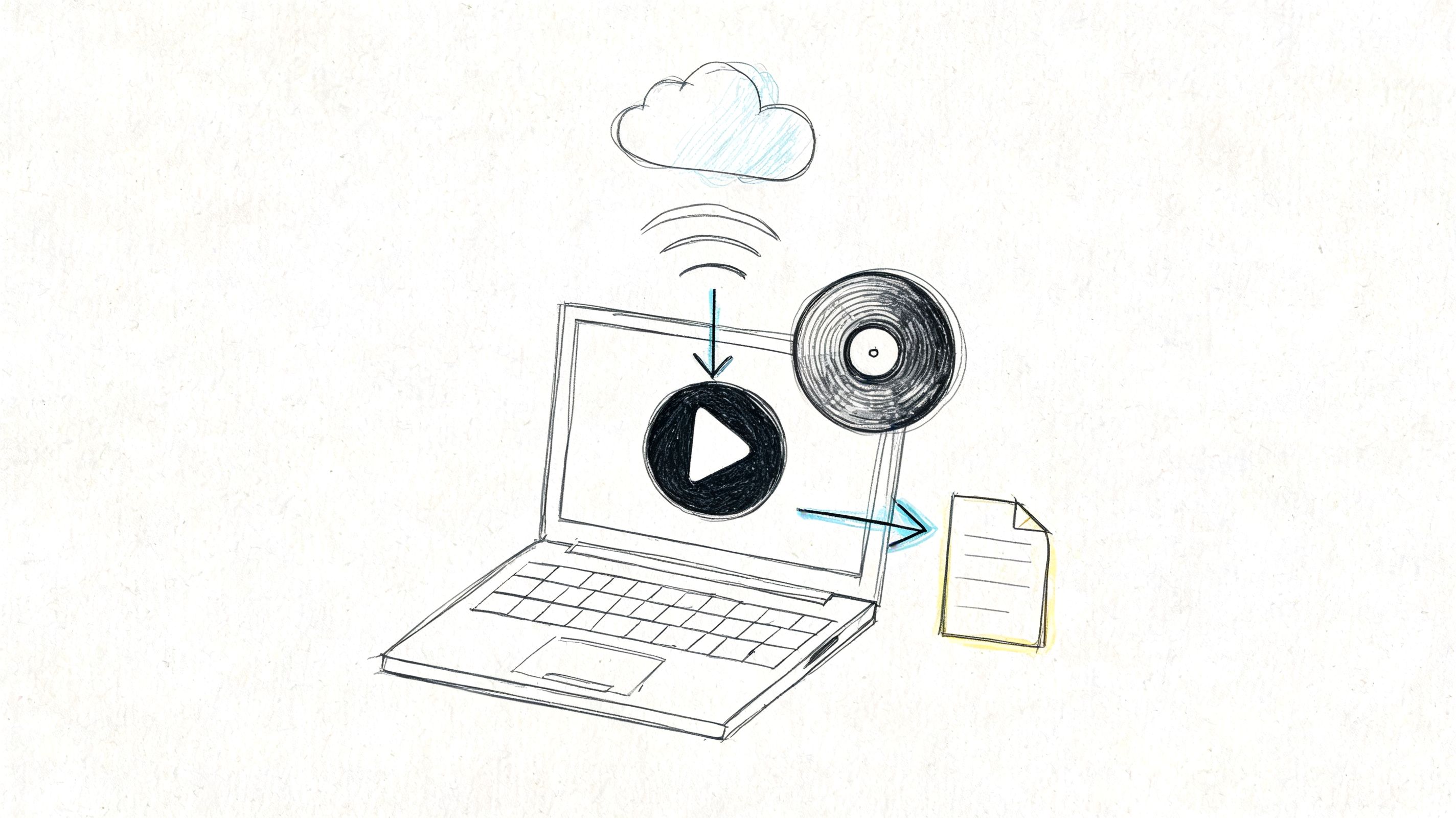

Build an archive that supports asynchronous work

Remote collaboration breaks down when documentation lives in five places and none of them match. A usable archive should let a researcher answer basic questions fast: What changed, who approved it, what version are we using, and what still needs a decision?

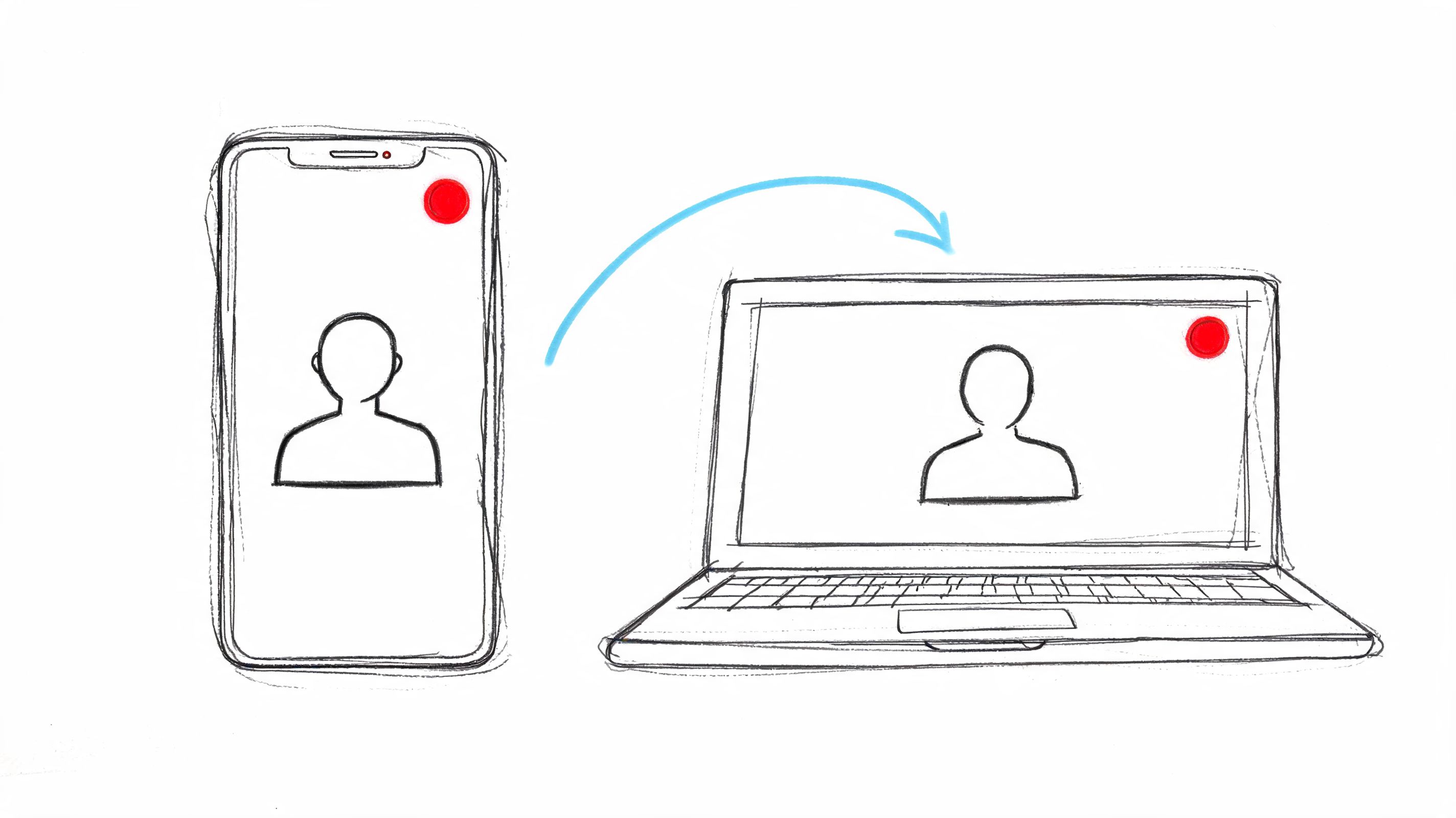

One workable approach is to use a tool that transcribes recorded meetings, generates summaries and action items, and turns spoken discussion into searchable project records. That setup is especially useful when interviews, analysis meetings, and supervisory check-ins happen across different schedules. People miss fewer details because the record does not depend on perfect note-taking.

If your project includes specialized scientific workflows, monitoring may also depend on domain-specific tools. Teams working in biotech contexts, for example, may need workflow visibility that connects experimental design, collaboration, and analysis through platforms such as DNA engineering and modeling software.

Management habit: If a decision affects scope, method, staffing, ethics, or delivery, it needs a retrievable record. If it cannot be found later, expect confusion, delay, or conflict.

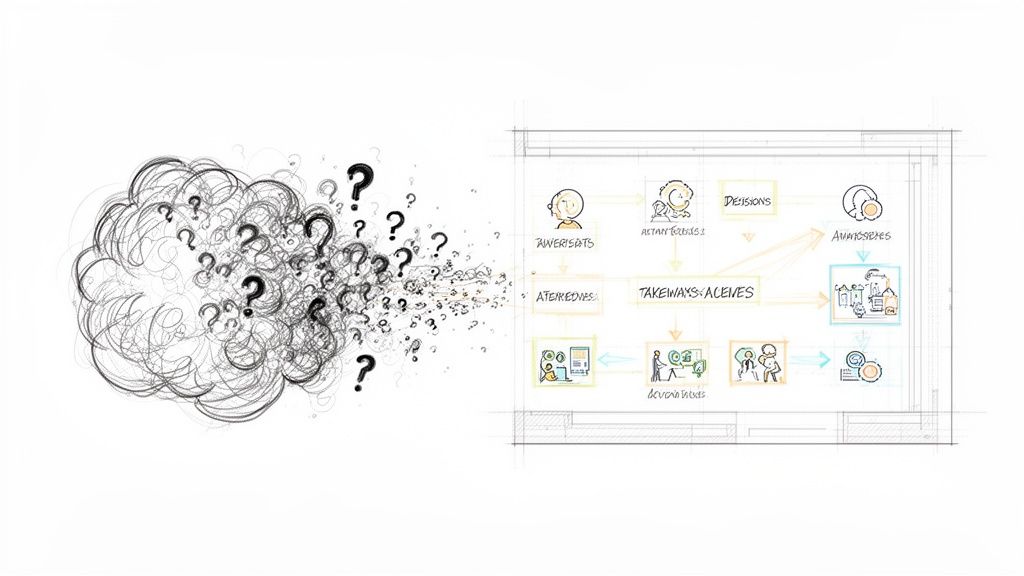

Don't confuse activity with progress

Busy teams can still drift. Full calendars, long meetings, and constant messaging often hide the underlying problem, which is that priorities have blurred and dependencies are piling up.

Healthy projects show a few consistent signals. Priorities stay stable long enough for work to finish. Owners are clear. Documentation is current. Analysis starts to converge.

Trouble looks different. Scope gets reinterpreted every week. Decision records are missing. Dependencies sit unresolved across multiple check-ins. Those are management warnings, not minor inconveniences.

Disseminate Your Findings for Maximum Impact

A research project isn't finished when the analysis is complete. It's finished when the findings reach the people who can use them, judge them, or build on them. Too many teams treat dissemination as a final administrative push, then wonder why strong work lands without impact.

The final chapter usually unfolds in a predictable way. Analysis produces patterns. Patterns become claims. Claims need evidence, narrative, and audience fit. That last part is where good projects often weaken.

Turn findings into outputs people can actually use

The same result can support several outputs, but each one needs a different shape.

A journal article needs a tight argument, method clarity, and a disciplined discussion section. A stakeholder report needs plain language, implications, and a transparent account of limitations. A conference presentation needs one strong throughline, not every detail the team collected.

I've seen research teams delay dissemination because they're waiting for one perfect master product. That rarely works. It's better to define a primary output and then derive secondary outputs from it.

Tell the story the evidence supports

By this point, the temptation is to pour everything in. Resist that. Good dissemination is selective. It shows the audience what changed in your understanding and why they should care.

Use a simple sequence:

- State the problem clearly

- Explain what you examined

- Present the most defensible findings

- Acknowledge limits without apology

- Translate the findings into implications

That sequence works for articles, presentations, policy briefs, and internal research reports. What changes is the level of technical detail.

A strong conclusion doesn't repeat the project. It interprets it.

Preserve the chain from raw material to public claim

Disciplined project management pays off in these moments. When reviewers, collaborators, or stakeholders ask how a conclusion was reached, you should be able to trace it back through coded material, memos, meeting decisions, and documented methodological choices.

That traceability helps in publication. It also helps when you need to produce derivative outputs later, such as a grant follow-up, a public summary, or a methods appendix for another audience. Teams that documented carefully throughout the project don't have to rebuild the chain of reasoning from scratch.

Close the loop with participants and partners

Not every project ends with a journal article. Some should end with a briefing, a workshop, a return summary, or a partner-facing memo that explains what was learned and what happens next. This matters most in applied and community-engaged work, where trust depends on whether people can see how their time and input contributed to a concrete outcome.

A practical dissemination checklist is short:

- Audience: who needs this version

- Format: article, deck, brief, memo, poster, or presentation

- Core message: one sentence the audience should remember

- Evidence base: which figures, quotations, or analyses support it

- Follow-up path: what discussion or action should happen next

The last deliverable in managing research projects is often not the paper. It's the decision, conversation, or next study that the paper makes possible.

If your research team needs cleaner meeting records, searchable transcripts, and faster action-item capture across interviews, check-ins, and stakeholder calls, HypeScribe is a practical tool to add structure without adding more admin.